@ 20241112 & lth Start: 2025-08-07 Updated: 2025-11-19

Simple tasks are not detailed here.

1. SLDPRT to GEOJSON – 20250807

2. MAPGIS to CAD – 20250815

3. Spatial adjustment of CAD vector data to GCJ02 – 20250820

4. Excel coordinates to SHP – 20250821

5. MDB to CAD – 20250821

6. MAPGIS to SHP – 20250822

7. Excel to SHP – 20250901

8. CAD to SHP – 20250901

9. CAD to SHP – 20250901

10. CAD to SHP – 20250902

11. CAD to SHP – 20250902

12. CAD to SHP – 20250902

13. Vector merge and split – 20250904

14. CAD to SHP – 20250904

15. MAPGIS to SHP – 20250904

16. CAD to SHP – 20250904

17. CAD to SHP – 20250904

18. CAD to SHP – 20250904

19. PDF vectorization – 20250904

20. SHP to CAD – 20250905

21. STL to GLB – 20250908

22. KML to SHP – 20250908

23. Bivariate map – 20250909

24. Supervised classification – 20250910

25. Forestry polygon vector drawing – 20250911

26. CAD flute replication – 20250912

27. 3D bar chart – 20250915

28. Heavy metal content map of Anhui section of Yangtze River – 20250917

29. Location map – 20250917

30. Rainfall progression map of Yangtze and Huai rivers in 1931 – 20250918

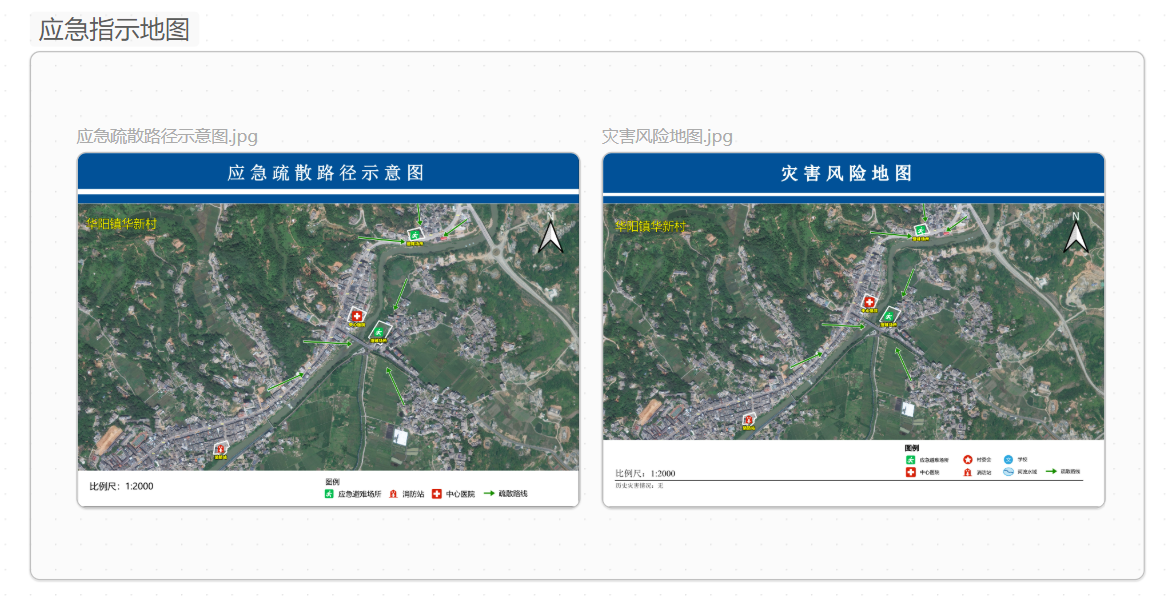

31. Emergency map – 20250919

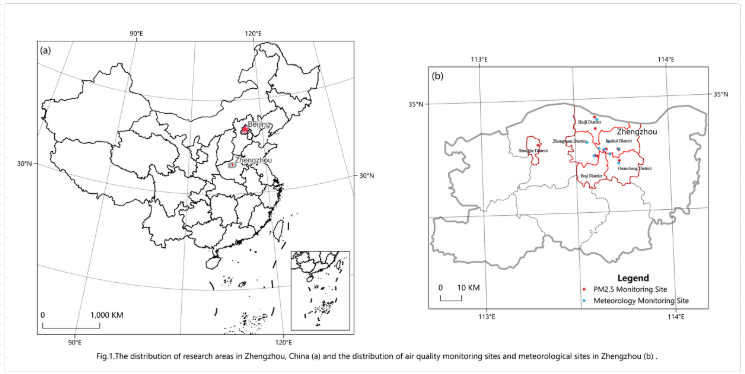

32. Zhengzhou monitoring points – 20250921

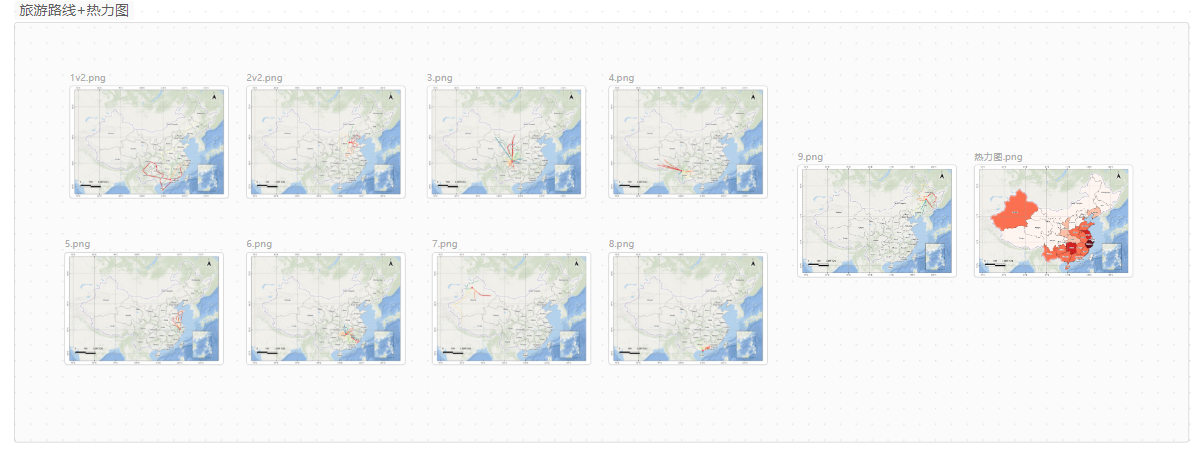

33. Tourist route + heatmap – 20250925

34. Raster gap filling – 20250926

35. Bird watching route drawing – 20250926

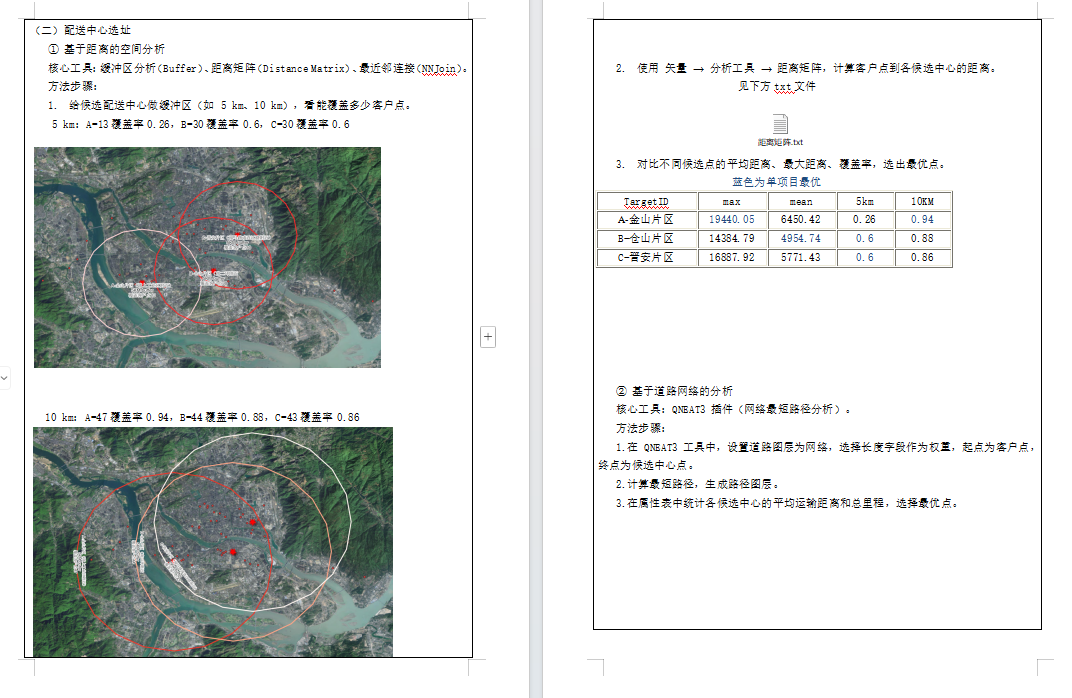

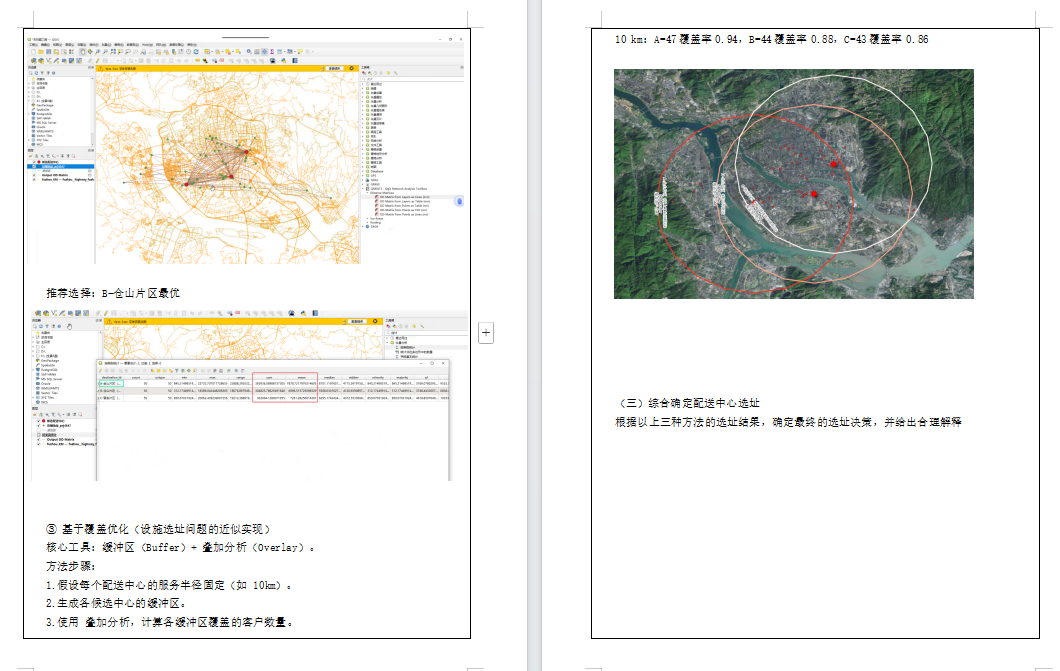

36. Site selection analysis – 20250927

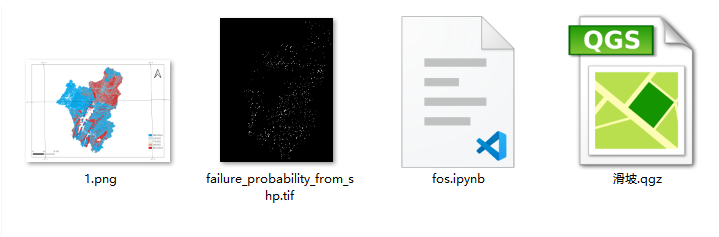

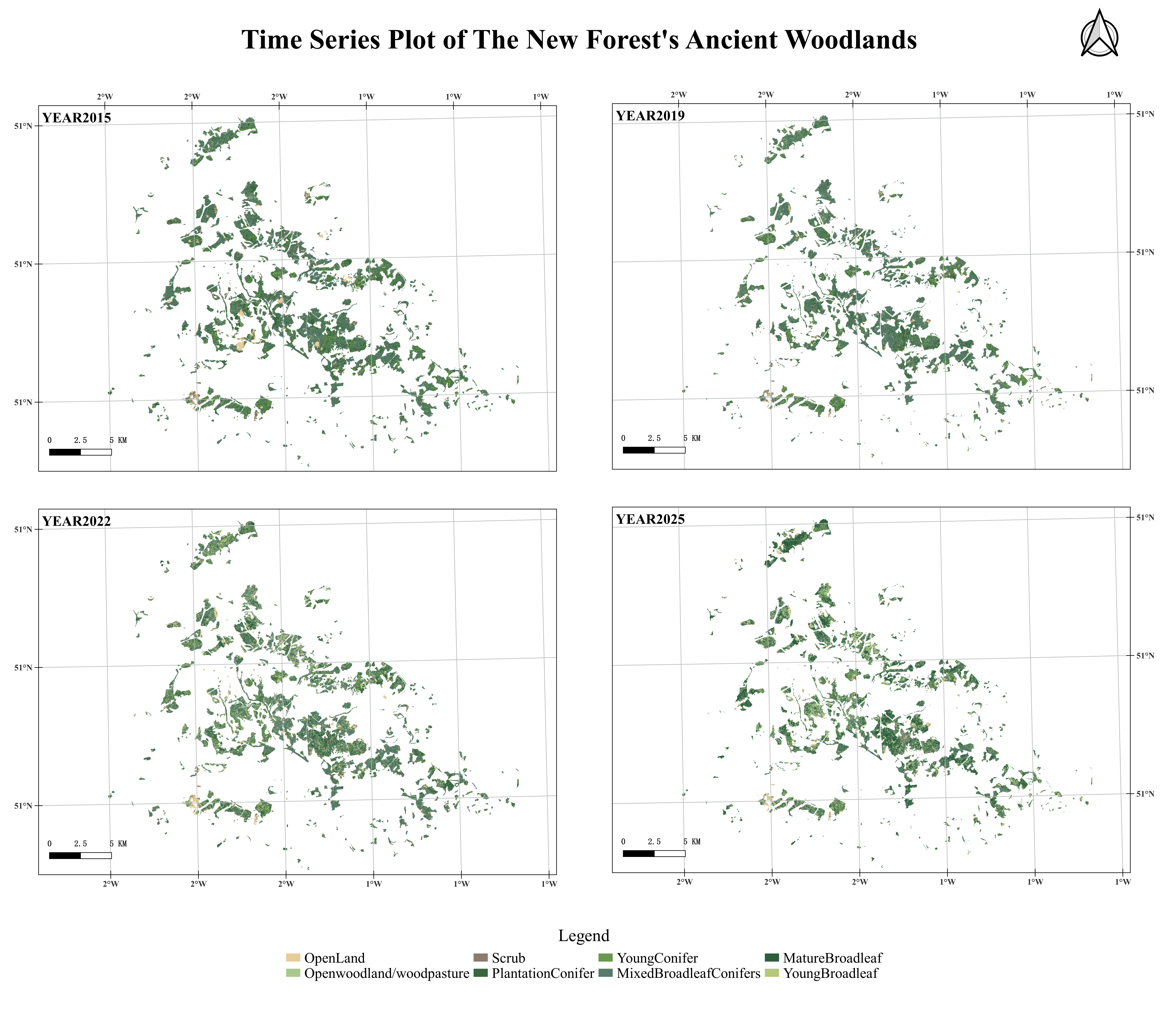

37. FOS landslide failure probability calculation – 20250927

38. Confusion matrix – 20251001

...

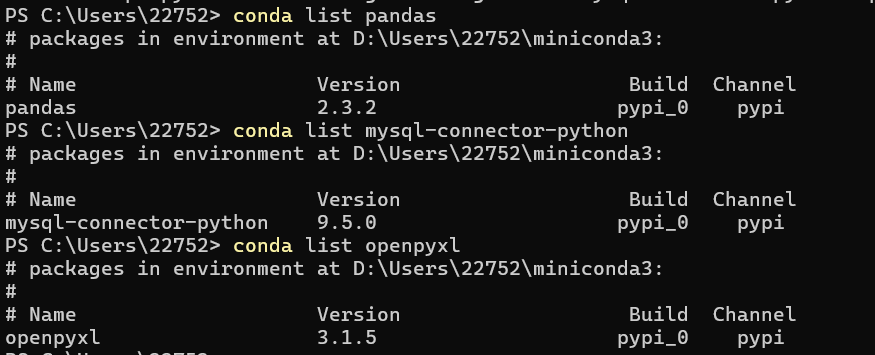

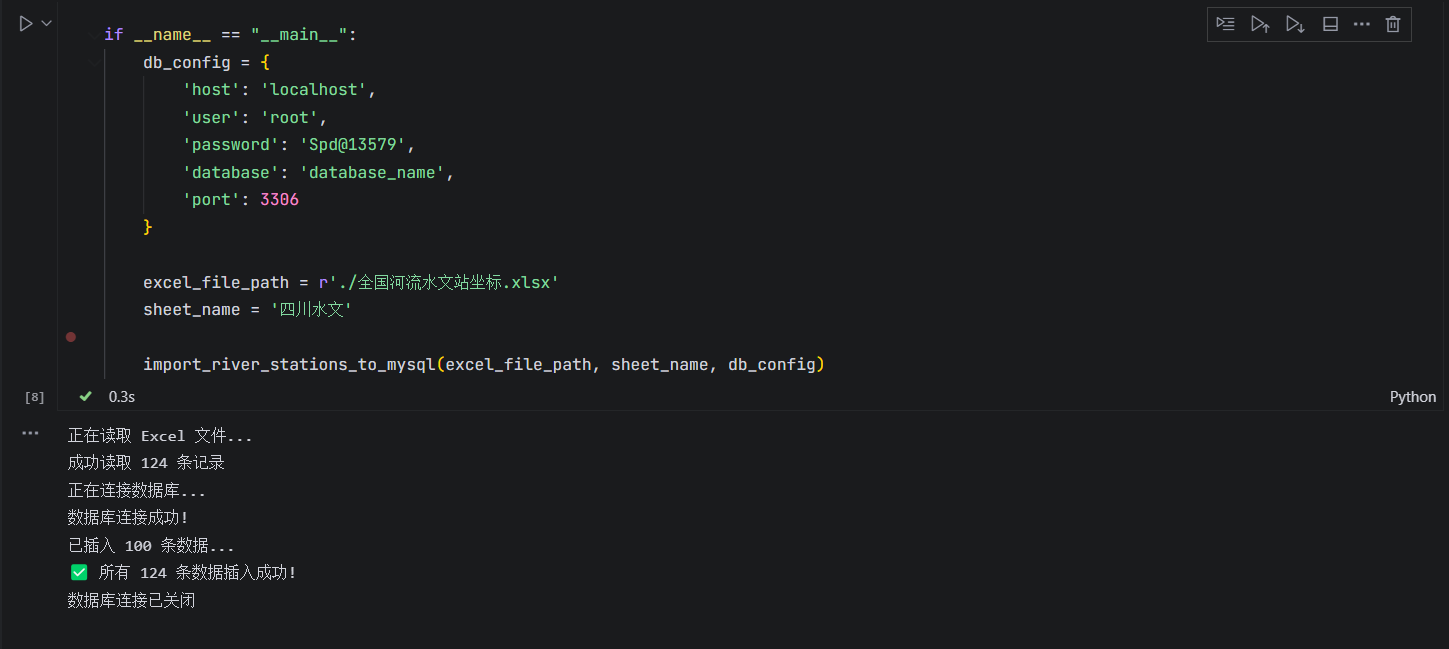

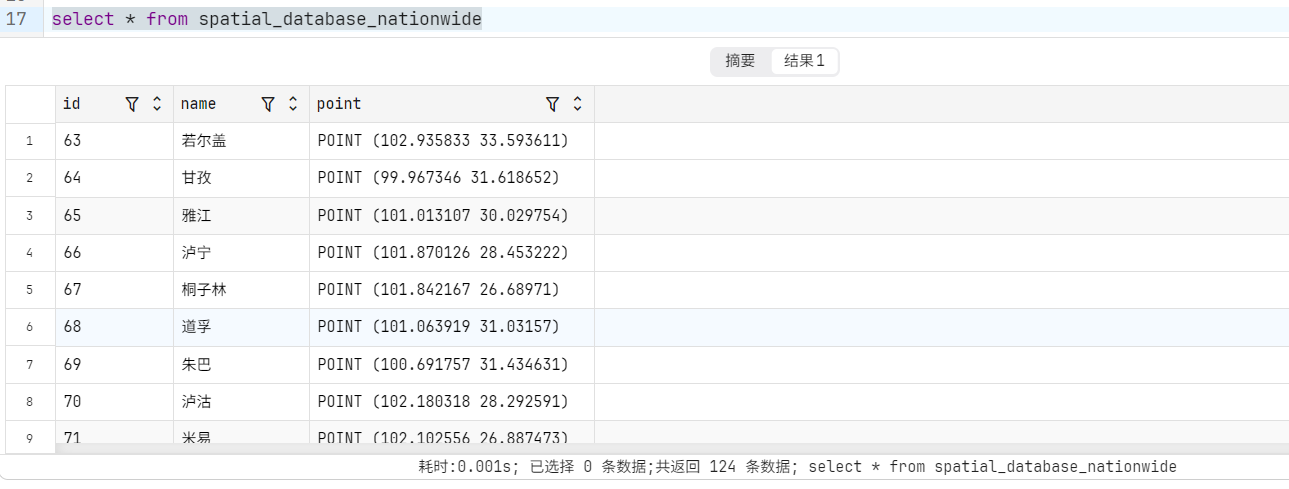

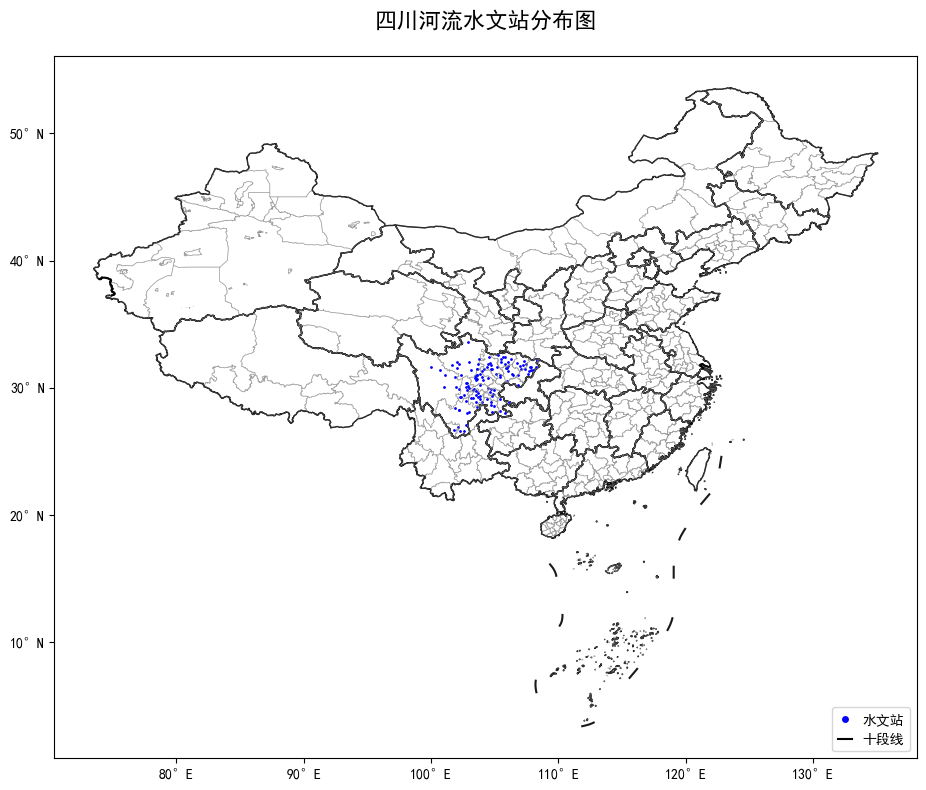

68. PostgreSQL & QGIS mapping – 20251104

69. MySQL & Python mapping – 20251104

70. GIS and Empirical Reasoning – 20251112

83. GGRA30_Assign5_IntroArcGISOnline – 20251119

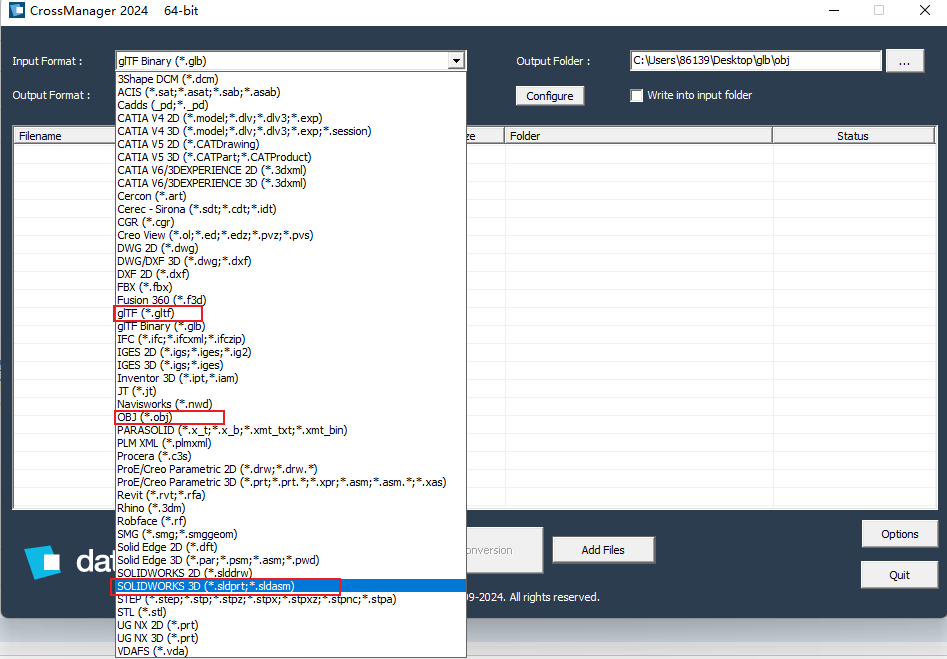

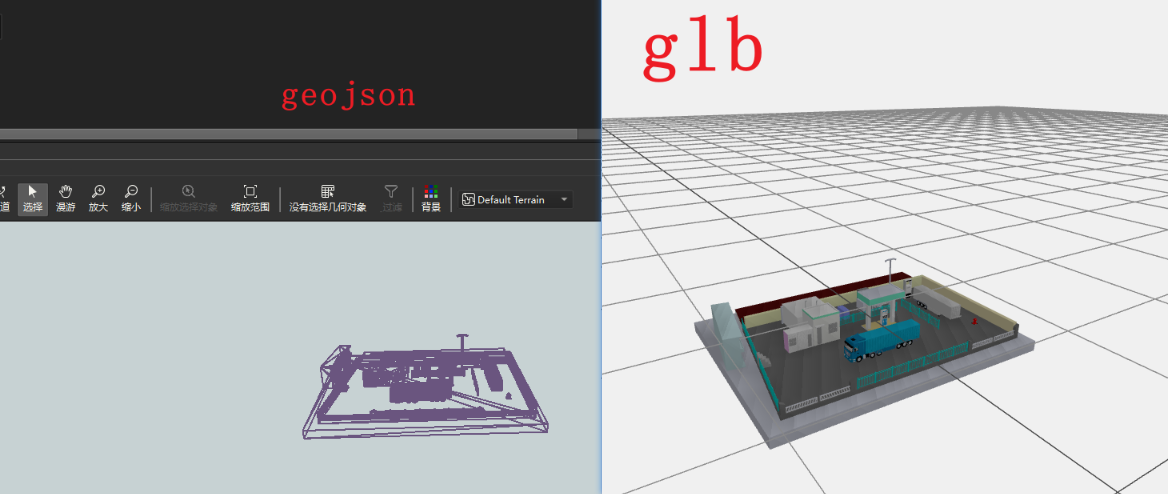

1. SLDPRT to GEOJSON

Client Requirements and Breakdown

Client wanted to convert SLDPRT to GEOJSON for web display. We recommended converting to GLB for use in three.js.

SLDPRT is SolidWorks' proprietary part format for 3D models. We first considered installing SolidWorks itself (about 25 GB), but instead found Crossmanager 2024 (64-bit), which converts between many 3D formats.

Solution

Convert SLDPRT to OBJ using Crossmanager, then read OBJ with FME and convert to GEOJSON.
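For simple meshes, the FME step (OBJ to GeoJSON) can also be approximated in plain Python. A minimal sketch, assuming the OBJ only needs its `v` (vertex) and `f` (face) records: each face becomes one 3D GeoJSON Polygon. The function names are hypothetical, and the coordinates stay in the model's own units, so they still need georeferencing before they make sense on a web map (GeoJSON expects WGS84 lon/lat).

```python
import json


def obj_to_geojson(obj_text):
    """Turn OBJ text into a GeoJSON FeatureCollection with one 3D Polygon per face.

    Only 'v' (vertex) and 'f' (face) records are read; normals, texture
    coordinates, materials and groups are ignored.
    """
    vertices = []
    features = []
    for line in obj_text.splitlines():
        parts = line.split()
        if not parts:
            continue
        if parts[0] == 'v':
            vertices.append([float(c) for c in parts[1:4]])
        elif parts[0] == 'f':
            ring = []
            for token in parts[1:]:
                # Face tokens look like "i", "i/t", "i//n" or "i/t/n"; indices are 1-based,
                # and negative indices count back from the most recent vertex.
                i = int(token.split('/')[0])
                ring.append(vertices[i - 1] if i > 0 else vertices[i])
            ring.append(list(ring[0]))  # GeoJSON rings must be closed
            features.append({
                'type': 'Feature',
                'geometry': {'type': 'Polygon', 'coordinates': [ring]},
                'properties': {'face': len(features)},
            })
    return {'type': 'FeatureCollection', 'features': features}


def convert_obj_file(obj_path, geojson_path):
    """Read an OBJ file, convert it and write GeoJSON; returns the number of faces written."""
    with open(obj_path, encoding='utf-8', errors='ignore') as f:
        collection = obj_to_geojson(f.read())
    with open(geojson_path, 'w', encoding='utf-8') as f:
        json.dump(collection, f)
    return len(collection['features'])
```

One polygon per triangle keeps the sketch simple but produces large files for dense CAD meshes, which is one reason GLB is the better target for three.js.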

Results

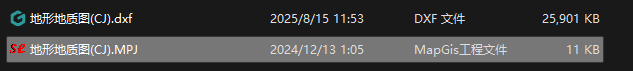

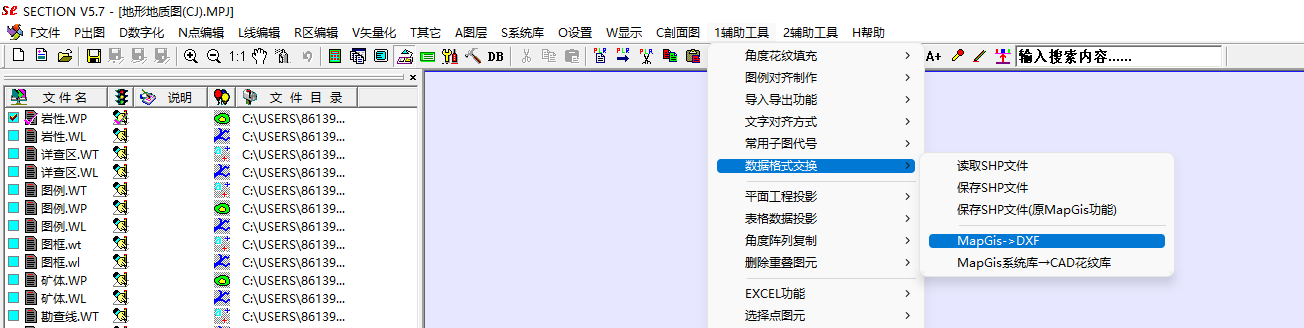

2. MAPGIS to CAD

Client Requirements and Breakdown

MAPGIS is a well-known Chinese GIS package. After some research, we found that the conversion requires MAPGIS itself plus a plugin called Section.

Solution

Install MAPGIS and the Section plugin. Open the .MPJ project file in MAPGIS and use the plugin to convert it; the resulting DXF is saved in the same directory. Close any layers that are not needed. If the DXF looks empty in CAD, double-click the mouse wheel (Zoom Extents) to bring the drawing into view.

Results

3. Spatial Adjustment of CAD Vector Data to GCJ02

Client Requirements and Breakdown

Client had CAD vector data that was already converted to SHP and wanted to overlay it on Amap imagery. This is a spatial adjustment task.

Solution

Tried QGIS first, but it was slow, so switched to ArcGIS Pro. Spatially adjusted the data against the Tianditu basemap, then converted the coordinates to GCJ02 with a QGIS plugin and checked the result against Amap. Lessons learned: define the coordinate system before converting CAD layers, and use a similarity transformation first, then an affine transformation for fine-tuning.
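The "similarity first, then affine" order can be illustrated with least-squares fits on control points. A similarity (Helmert) transform has 4 parameters (rotation, one uniform scale, two shifts) and needs at least 2 control points, so it is stable; an affine transform adds shear and non-uniform scale (6 parameters, at least 3 points) and can distort the data if the control points are few or clustered. This is a minimal NumPy sketch under those assumptions, not ArcGIS Pro's implementation; the function names are hypothetical.

```python
import numpy as np


def fit_similarity(src, dst):
    """4-parameter similarity (Helmert): x' = a*x - b*y + tx, y' = b*x + a*y + ty."""
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    n = len(src)
    A = np.zeros((2 * n, 4))
    A[0::2] = np.column_stack([src[:, 0], -src[:, 1], np.ones(n), np.zeros(n)])  # x' equations
    A[1::2] = np.column_stack([src[:, 1], src[:, 0], np.zeros(n), np.ones(n)])   # y' equations
    a, b, tx, ty = np.linalg.lstsq(A, dst.reshape(-1), rcond=None)[0]
    return np.array([[a, -b, tx], [b, a, ty]])


def fit_affine(src, dst):
    """6-parameter affine as a 2x3 matrix M: [x', y'] = M[:, :2] @ [x, y] + M[:, 2]."""
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    X = np.column_stack([src, np.ones(len(src))])   # n x 3
    coef = np.linalg.lstsq(X, dst, rcond=None)[0]    # 3 x 2
    return coef.T


def apply_transform(M, pts):
    """Apply a 2x3 transform matrix to an (n, 2) array of points."""
    pts = np.asarray(pts, dtype=float)
    return pts @ M[:, :2].T + M[:, 2]


def rmse(a, b):
    """Root-mean-square distance between matching point sets."""
    return float(np.sqrt(np.mean(np.sum((np.asarray(a) - np.asarray(b)) ** 2, axis=1))))


# Two-stage use with CAD control points (src) and basemap points (dst):
#   M1 = fit_similarity(src, dst); step1 = apply_transform(M1, src)
#   M2 = fit_affine(step1, dst);   final = apply_transform(M2, step1)
#   print(rmse(final, dst))
```

Comparing the RMSE after each stage shows whether the affine step actually improves the fit or only bends the data toward a few control points.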

Results

4. Excel Coordinates to SHP

Notes

Simple. Pay attention to Excel encoding.
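The encoding problem usually comes from CSV exports: the .xlsx file itself stores text as UTF-8, but the plain "CSV" export on Chinese Windows uses the system code page (GBK). A minimal stdlib sketch, assuming the sheet was exported to CSV with x/y columns: try UTF-8 (with or without BOM) first, then GBK. The function name and default column names are hypothetical. A GBK file that happens to be valid UTF-8 would be decoded wrongly, so check a few values.

```python
import csv
import io


def read_excel_csv(raw_bytes, x_field='x', y_field='y', encodings=('utf-8-sig', 'gbk')):
    """Decode a CSV exported from Excel and return a list of (x, y, attributes) tuples."""
    for enc in encodings:
        try:
            text = raw_bytes.decode(enc)
            break
        except UnicodeDecodeError:
            continue
    else:
        raise ValueError(f"Could not decode with any of {encodings}")
    points = []
    for record in csv.DictReader(io.StringIO(text, newline='')):
        x = float(record.pop(x_field))
        y = float(record.pop(y_field))
        points.append((x, y, record))  # remaining columns become attributes
    return points
```

Usage: `read_excel_csv(open('points.csv', 'rb').read(), x_field='lon', y_field='lat')`; the decoded points can then be written to SHP with any writer that is told the output encoding.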

5. MDB to CAD

Notes

Simple. Convert to projected coordinate system if area/length calculation is needed.
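Why a projected system is needed: planar area and length formulas return results in the square of the coordinate unit, and with lon/lat that unit (a degree of longitude) shrinks with latitude. A small sketch of the shoelace area formula plus the spherical length of one degree of longitude (mean Earth radius 6,371 km is an assumption of the sketch):

```python
import math


def shoelace_area(ring):
    """Planar polygon area (shoelace formula) in the square of the coordinate unit."""
    ring = list(ring)
    s = 0.0
    for (x1, y1), (x2, y2) in zip(ring, ring[1:] + ring[:1]):
        s += x1 * y2 - x2 * y1
    return abs(s) / 2.0


def metres_per_degree_lon(lat_deg, radius_m=6371000.0):
    """Approximate length of one degree of longitude at a latitude (spherical Earth)."""
    return math.pi / 180.0 * radius_m * math.cos(math.radians(lat_deg))
```

On projected metre coordinates `shoelace_area` gives square metres directly; on lon/lat it gives "square degrees", whose ground size halves between the equator and 60° N.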

8. CAD to SHP

Client Requirements and Breakdown

Fields: autocad_entity, autocad_layer, autocad_layer_color, autocad_layer_desc, autodesk_layer_frozen, autodesk_layer_hidden, fme_text_size, fme_text_string, fme_type.

9. CAD to SHP

Notes

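Chinese CAD drawings often use a projected (Gauss-Krüger) coordinate system. In Chinese surveying convention X is the northing and Y the easting. If X and Y both have 6 digits, the data is probably in an independent (local) coordinate system and needs a 3- or 7-parameter transformation. If X has 7 digits and Y has 8, the first two digits of Y are the zone number. If the easting has only 6 digits (shown as X6/Y7 in CAD, where X is the easting), there is no zone prefix, so you need either the known region or the central meridian. Maps at 1:10,000 scale usually use 3-degree zones.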

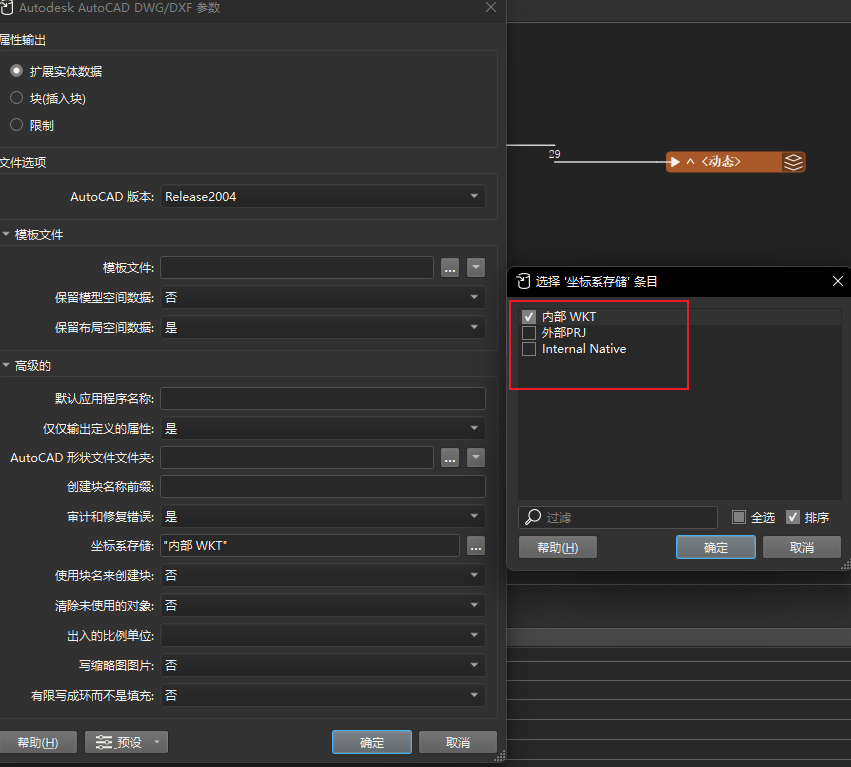
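The zone-prefix rule can be turned into a quick check. A sketch, assuming an 8-digit easting whose first two digits are the zone number and data located in China, where 6-degree zones are numbered 13-23 and 3-degree zones 25-45, so the zone number alone indicates the zone width. The function names are hypothetical.

```python
def gauss_kruger_zone(easting):
    """Return (zone, zone width in degrees, central meridian) from an easting with zone prefix.

    Heuristic for China only: 6-degree zones are 13-23, 3-degree zones are 25-45.
    """
    zone = int(easting // 1_000_000)
    if 13 <= zone <= 23:
        return zone, 6, 6 * zone - 3      # 6-degree zone: central meridian = 6n - 3
    if 25 <= zone <= 45:
        return zone, 3, 3 * zone          # 3-degree zone: central meridian = 3n
    raise ValueError(f"Zone {zone} is outside the ranges used in China")


def offset_from_central_meridian(easting):
    """Easting relative to the central meridian (zone prefix and 500 km false easting removed)."""
    return easting % 1_000_000 - 500_000
```

For example, an easting of 39,5xx,xxx gives zone 39, a 3-degree zone with central meridian 117° E.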

20. SHP to CAD

Notes

When writing CAD with FME, select where to store the coordinate system.

21. STL to GLB

Notes

Will add details later.

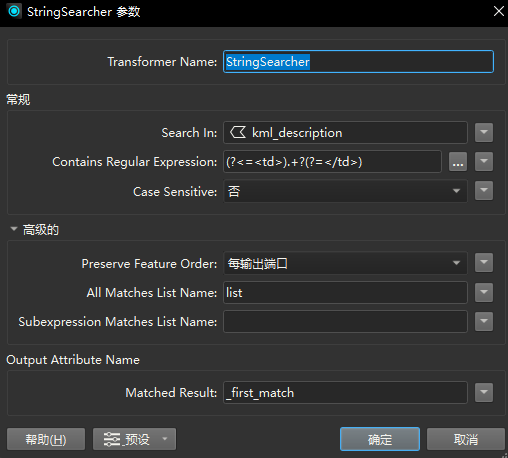

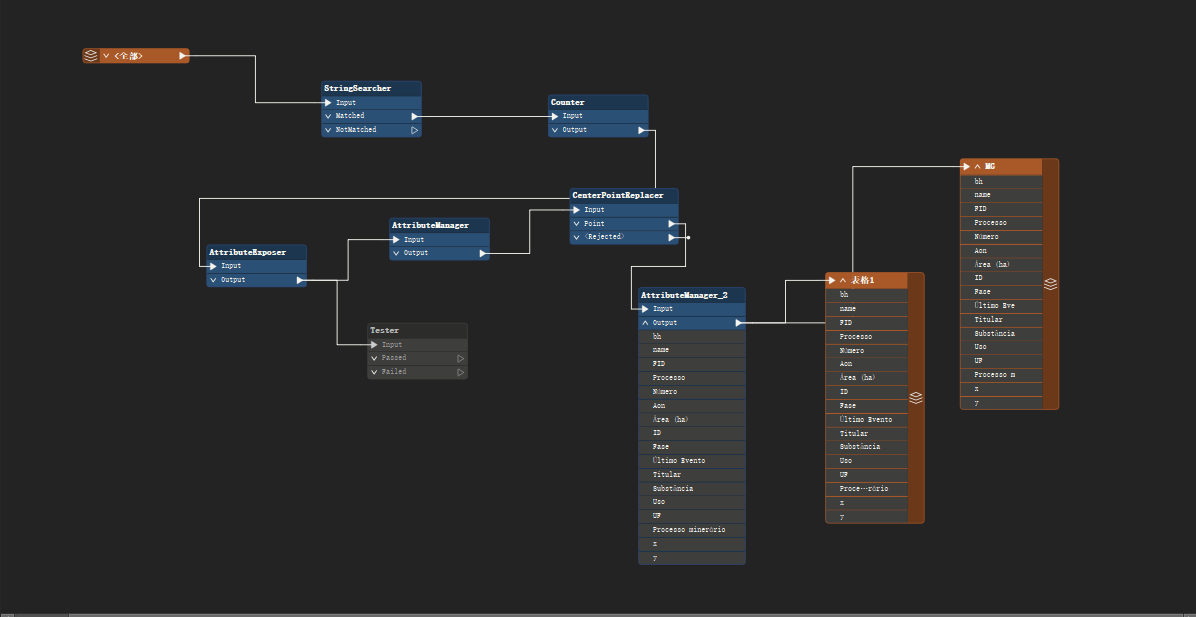

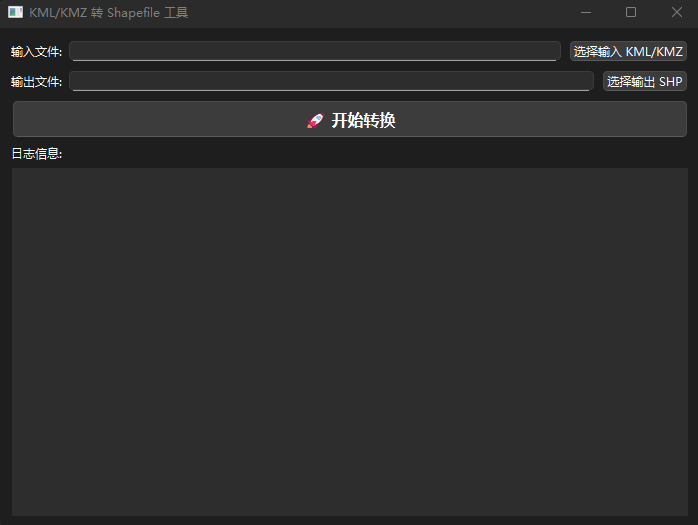

22. KML to SHP

Notes

No ready-made tool for KML to SHP with attributes. Use FME or Python. For FME, key is StringSearcher with regex (?<=<td>).+?(?=</td>), then AttributeExposer. For Python, process the description field.
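The FME regex idea (take the text of every <td> cell, then expose consecutive cells as key/value attributes) looks like this in Python. A sketch assuming a two-column table with plain `<td>` tags (cells written as `<td style=...>` would not match); it uses `.*?` instead of `.+?` so an empty `<td></td>` does not swallow the next cell. The name `attributes_from_td_cells` is hypothetical.

```python
import re

# Text between "<td>" and the next "</td>", across line breaks
TD_CELL = re.compile(r'(?<=<td>).*?(?=</td>)', re.S)


def attributes_from_td_cells(description):
    """Pair consecutive <td> cells as key/value (assumes a two-column table)."""
    cells = [c.strip() for c in TD_CELL.findall(description)]
    return {cells[i]: cells[i + 1] for i in range(0, len(cells) - 1, 2)}
```

This is more forgiving than an XML parser when the description HTML is not well-formed, at the cost of relying on the exact table layout.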

```python
import os
import xml.etree.ElementTree as ET

from osgeo import ogr


def extract_attributes_from_html(html_text):
    """Parse the HTML table in a KML description field and return an attribute dict."""
    # Keep only the <table>...</table> part if the description has surrounding text
    if not html_text.strip().startswith('<table'):
        start = html_text.find('<table')
        end = html_text.find('</table>')
        if start == -1 or end == -1:
            return {}
        html_text = html_text[start:end + len('</table>')]
    try:
        root = ET.fromstring(html_text)
    except ET.ParseError as e:
        # Descriptions that are not well-formed XML (e.g. bare <br>, &nbsp;) end up here
        print(f"HTML parse error: {e}")
        return {}
    if root.tag != 'table':
        root = root.find('.//table')
        if root is None:
            return {}
    attr_dict = {}
    for row in root.findall('.//tr'):
        ths = row.findall('th')
        tds = row.findall('td')
        # Rows are either <th>key</th><td>value</td> or <td>key</td><td>value</td>
        if ths and tds:
            key, value = ths[0].text, tds[0].text
        elif len(tds) >= 2:
            key, value = tds[0].text, tds[1].text
        else:
            continue
        if key:
            attr_dict[key.strip()] = value.strip() if value else ''
    return attr_dict


def safe_field_name(name):
    """Sanitize an attribute name for a Shapefile (DBF) field: letters, digits, '_', max 10 chars."""
    safe = name.strip()
    if not safe.isidentifier():
        safe = ''.join(c if c.isalnum() or c == '_' else '_' for c in safe)
    # DBF names are limited to 10 bytes; GDAL may still shorten non-ASCII names
    return safe[:10]


def convert_kml_to_shapefile(input_kml, output_shp):
    ds_in = ogr.Open(input_kml)
    if ds_in is None:
        print(f"Cannot open {input_kml}")
        return
    layer = ds_in.GetLayer(0)  # only the first KML layer/folder is converted
    srs = layer.GetSpatialRef()
    layer_defn = layer.GetLayerDefn()
    # The KML driver calls the field 'Description', the LIBKML driver 'description'
    desc_idx = layer_defn.GetFieldIndex('description')
    if desc_idx < 0:
        desc_idx = layer_defn.GetFieldIndex('Description')

    driver = ogr.GetDriverByName('ESRI Shapefile')
    if os.path.exists(output_shp):
        driver.DeleteDataSource(output_shp)
    ds_out = driver.CreateDataSource(output_shp)
    if ds_out is None:
        print(f"Cannot create {output_shp}")
        return
    # Write the DBF as UTF-8 so Chinese attribute values survive
    layer_out = ds_out.CreateLayer('output', srs=srs, geom_type=layer_defn.GetGeomType(),
                                   options=['ENCODING=UTF-8'])

    # First pass: keep geometries and parsed attributes, collect all attribute names
    features_data = []
    all_attributes = set()
    for feat_in in layer:
        geom = feat_in.GetGeometryRef()
        if geom is None:
            continue
        desc = feat_in.GetField(desc_idx) if desc_idx >= 0 else None
        attrs = extract_attributes_from_html(desc) if desc else {}
        all_attributes.update(attrs.keys())
        features_data.append({'geometry': geom.Clone(), 'attributes': attrs})

    # One 254-character string field per distinct (sanitized) attribute name
    created_fields = set()
    for field_name in sorted(all_attributes):
        safe_name = safe_field_name(field_name)
        if safe_name not in created_fields:
            field_defn = ogr.FieldDefn(safe_name, ogr.OFTString)
            field_defn.SetWidth(254)
            layer_out.CreateField(field_defn)
            created_fields.add(safe_name)

    # Second pass: write the features
    feat_defn = layer_out.GetLayerDefn()
    for data in features_data:
        feat_out = ogr.Feature(feat_defn)
        feat_out.SetGeometry(data['geometry'])
        for key, value in data['attributes'].items():
            safe_key = safe_field_name(key)
            if safe_key in created_fields:
                feat_out.SetField(safe_key, value)
        layer_out.CreateFeature(feat_out)
        feat_out = None
    ds_out = None  # closing the datasource flushes it to disk
    ds_in = None
    print(f"Conversion complete. {len(features_data)} features written to {output_shp}")
```

Note: for packaging, Nuitka produced a much smaller exe than PyInstaller (roughly 5x smaller in this case). PyQt5 may not be fully compatible with Nuitka.

23. Bivariate Map

Notes

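This was my first bivariate map. A bivariate map shows two different attributes (e.g., temperature and population) over the same study area. Each attribute is usually split into 3 classes and the two classifications are overlaid orthogonally, giving 3 × 3 = 9 combined classes. The key step is building the legend, done with the 'bivariate legend' plugin. Export the final map as PDF.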

Data sources: Population from https://landscan.ornl.gov/, Temperature from https://climate.northwestknowledge.net/TERRACLIMATE/index_directDownloads.php
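The 3 × 3 crossing can be computed on two rasters read as arrays on the same grid (e.g., population resampled onto the temperature grid). A minimal NumPy sketch; the tertile (quantile) breaks are an assumption, and equal-interval or natural breaks would slot in the same way. The function name is hypothetical.

```python
import numpy as np


def bivariate_classes(a, b, n=3):
    """Cross two variables into n*n classes (1..n*n) using quantile breaks; NaN stays NaN.

    class = (class of a) * n + (class of b) + 1, so with n = 3, 1 = low/low and 9 = high/high.
    """
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    probs = np.linspace(0, 1, n + 1)[1:-1]             # [1/3, 2/3] for tertiles
    a_cls = np.digitize(a, np.nanquantile(a, probs))   # 0 .. n-1
    b_cls = np.digitize(b, np.nanquantile(b, probs))
    out = (a_cls * n + b_cls + 1).astype(float)
    out[np.isnan(a) | np.isnan(b)] = np.nan            # no data in either input
    return out
```

Each of the 9 codes then gets one colour from the bivariate legend's colour grid.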

NC File Processing

```python
import os
from typing import Optional

import numpy as np
import rioxarray  # noqa: F401 (registers the .rio accessor used below)
import xarray as xr


def select_variable_interactive(ds: xr.Dataset) -> Optional[str]:
    """List the data variables and let the user choose one by name or number."""
    data_vars = list(ds.data_vars)
    if not data_vars:
        print("No data variables found.")
        return None
    print("\nAvailable data variables:")
    for i, var in enumerate(data_vars):
        long_name = ds[var].attrs.get('long_name', 'No description')
        print(f"  [{i + 1}] {var} ({long_name})")
    while True:
        choice = input("Enter variable name or number: ").strip()
        if choice.isdigit():
            idx = int(choice) - 1
            if 0 <= idx < len(data_vars):
                return data_vars[idx]
        elif choice in data_vars:
            return choice
        print("Invalid input.")


def compute_and_export_average(nc_file: str, year: int, output_dir: str = '.') -> None:
    """Average one variable of a NetCDF file over time and write it as a GeoTIFF."""
    if not os.path.exists(nc_file):
        print(f"File not found: {nc_file}")
        return
    print(f"Processing {nc_file}...")
    try:
        # chunks='auto' opens the file lazily with dask (dask must be installed)
        ds = xr.open_dataset(nc_file, chunks='auto')
        print(ds.coords)
        var_name = select_variable_interactive(ds)
        if var_name is None:
            return
        da = ds[var_name]
        # Average over the time dimension if one is recognisable, otherwise the first dimension
        time_dim = next((dim for dim in da.dims if dim.lower() in ['time', 't', 'times']), da.dims[0])
        print(f"Computing average over dimension '{time_dim}'...")
        mean_da = da.mean(dim=time_dim, skipna=True)
        # TerraClimate is on a regular lat/lon grid; assume WGS84 if no CRS is stored
        if mean_da.rio.crs is None:
            mean_da = mean_da.rio.write_crs("EPSG:4326")
            print("Set CRS to EPSG:4326.")
        mean_da.attrs['original_variable'] = var_name
        os.makedirs(output_dir, exist_ok=True)
        output_path = os.path.join(output_dir, f'{var_name}_average_{year}.tif')
        mean_da.rio.to_raster(output_path, dtype=np.float32, compress='LZW')
        print(f"Saved to {output_path}")
    except Exception as e:
        print(f"Error: {e}")


if __name__ == "__main__":
    input_nc = r'C:\Users\86139\Downloads\TerraClimate_tmax_2013.nc'
    target_year = 2013
    compute_and_export_average(input_nc, target_year)
```

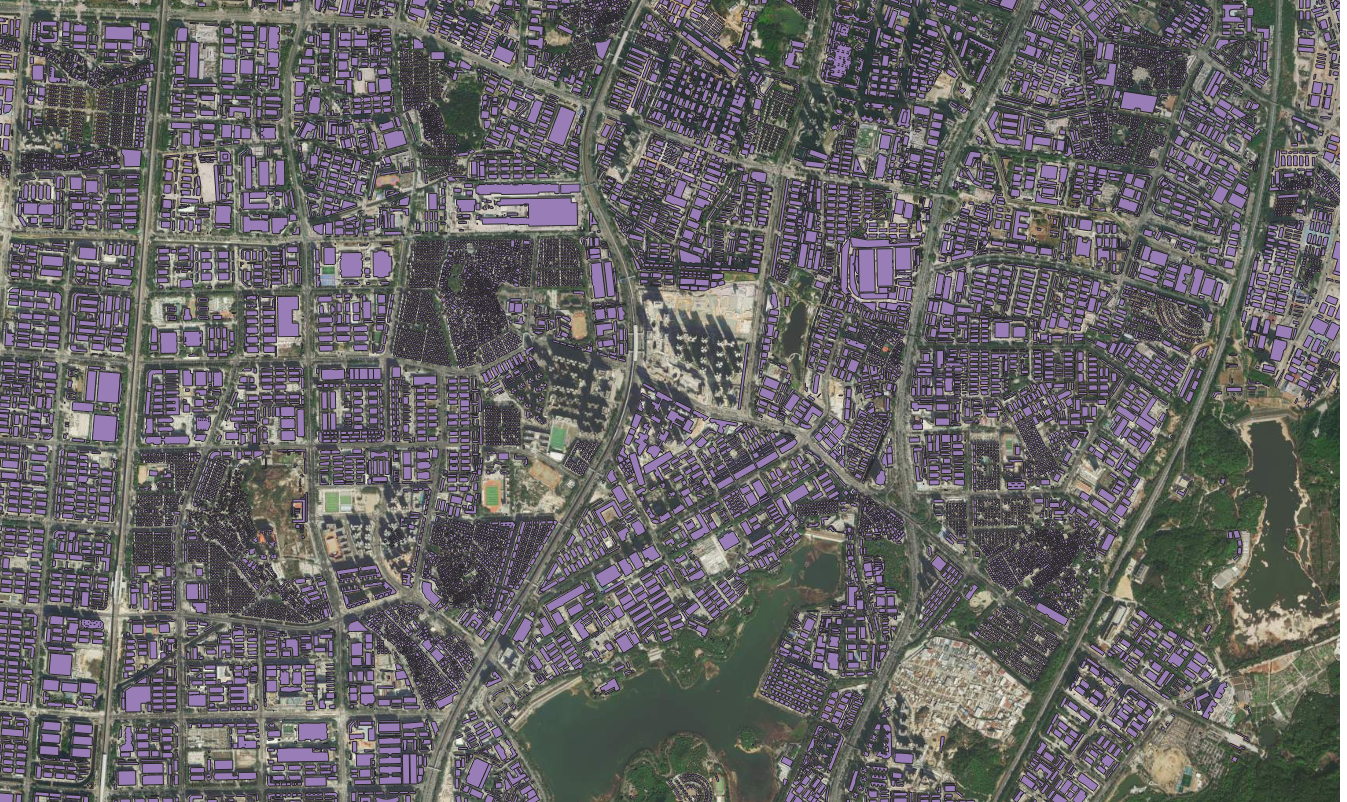

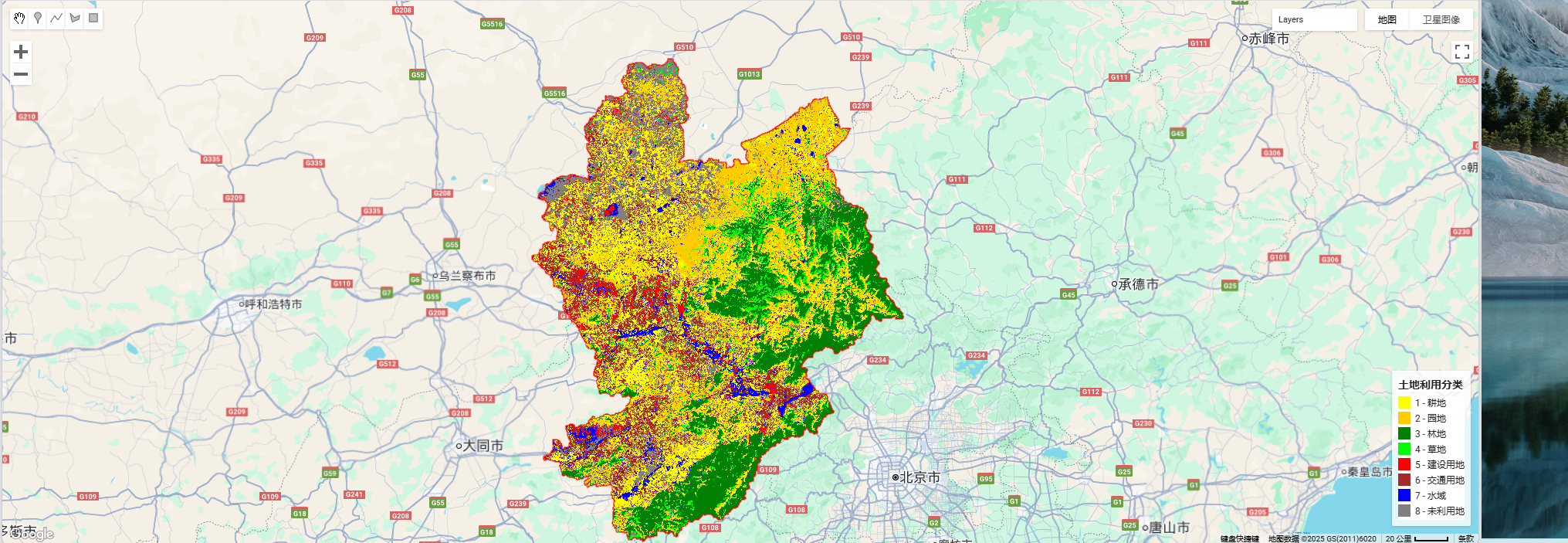

24. Supervised Classification

Notes

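Used Landsat 5 data (1990, 1995, 2005) in Google Earth Engine (GEE). The sample points have the attributes 'landcover' (class code 1-8) and 'lb' (class name). For Landsat 8, change the collection (LANDSAT/LC08/C02/T1_L2) and the band names, because the band numbers shift (e.g., red is SR_B3 on Landsat 5 but SR_B4 on Landsat 8). Sample points can be derived from open-source LUCC data.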

Code

Query Area Data

```javascript
// Study area asset: projects/ee-lth19981023/assets/zjk
var roi = ee.FeatureCollection("projects/ee-lth19981023/assets/zjk");

// Cloud and cloud-shadow mask for Landsat Collection 2 Level-2 QA_PIXEL
// (bit 3 = cloud, bit 4 = cloud shadow)
function maskL5sr(image) {
  var cloudMask = (1 << 3);
  var cloudShadowMask = (1 << 4);
  var qa = image.select('QA_PIXEL');
  var mask = qa.bitwiseAnd(cloudShadowMask).eq(0)
      .and(qa.bitwiseAnd(cloudMask).eq(0));
  return image.updateMask(mask);
}

// 2005 median composite, clipped to the study area
var composite = ee.ImageCollection('LANDSAT/LT05/C02/T1_L2')
    .filterDate('2005-01-01', '2005-12-31')
    .filterBounds(roi)
    .map(maskL5sr)
    .median()
    .clip(roi);

var final_image = composite.selfMask();

// False-colour display (SWIR1, NIR, red) of the unscaled Level-2 values
Map.addLayer(final_image, {bands: ['SR_B5', 'SR_B4', 'SR_B3'], min: 0, max: 20000}, 'Landsat5_2005');

var boundaryStyle = {color: 'FF0000', fillColor: '00000000', width: 2};
Map.addLayer(roi.style(boundaryStyle), {}, "Boundary");

// Export to Google Drive at 30 m in WGS 84 / UTM zone 50N
Export.image.toDrive({
  image: final_image,
  description: 'Landsat5_2005_ZJK',
  folder: 'GEE_exports',
  scale: 30,
  region: roi.geometry(),
  crs: 'EPSG:32650',
  maxPixels: 1e13
});
```

Classification Code

```javascript
var roi = ee.FeatureCollection("projects/ee-lth19981023/assets/zjk");
var samplePoints = ee.FeatureCollection("projects/ee-lth19981023/assets/ls2005");

// Mask fill, dilated cloud, cirrus, cloud and cloud shadow (QA_PIXEL bits 0-4) and saturated
// pixels, then scale the optical bands to surface reflectance
// (Collection 2 Level-2: reflectance = DN * 0.0000275 - 0.2)
function maskL5sr(image) {
  var qaMask = image.select('QA_PIXEL').bitwiseAnd(parseInt('11111', 2)).eq(0);
  var saturationMask = image.select('QA_RADSAT').eq(0);
  var optical = image.select('SR_B.').multiply(0.0000275).add(-0.2);
  return image.addBands(optical, null, true).updateMask(qaMask.and(saturationMask));
}

var image = ee.ImageCollection('LANDSAT/LT05/C02/T1_L2')
    .filterDate('1990-01-01', '1990-12-31')
    .filterBounds(roi)
    .map(maskL5sr)
    .median()
    .clip(roi);

// Spectral indices (Landsat 5 TM: B2 green, B3 red, B4 NIR, B5 SWIR1)
var mndwi = image.normalizedDifference(['SR_B2', 'SR_B5']).rename('MNDWI');
var ndbi = image.normalizedDifference(['SR_B5', 'SR_B4']).rename('NDBI');
var ndvi = image.normalizedDifference(['SR_B4', 'SR_B3']).rename('NDVI');
var imageWithIndices = image.addBands([ndvi, ndbi, mndwi]);

var bands = ['SR_B2', 'SR_B3', 'SR_B4', 'SR_B5', 'SR_B7', 'MNDWI', 'NDBI', 'NDVI'];

// Sample the predictors at the labelled points, then split 70 % / 30 % into train and test
var training = imageWithIndices.select(bands).sampleRegions({
  collection: samplePoints,
  properties: ['landcover'],
  scale: 30,
  geometries: true
});
var withRandom = training.randomColumn('random');
var split = 0.7;
var trainSet = withRandom.filter(ee.Filter.lt('random', split));
var testSet = withRandom.filter(ee.Filter.gte('random', split));

// Random forest with 50 trees
var classifier = ee.Classifier.smileRandomForest(50).train({
  features: trainSet,
  classProperty: 'landcover',
  inputProperties: bands
});
var classified = imageWithIndices.select(bands).classify(classifier);

// Accuracy on the held-out test set
var testResult = testSet.classify(classifier);
var cm = testResult.errorMatrix('landcover', 'classification');
print('Confusion Matrix', cm);
print('Overall Accuracy', cm.accuracy());
print('Kappa', cm.kappa());

Map.centerObject(roi, 8);
var style = {color: 'FF0000', fillColor: '00000000', width: 2};
Map.addLayer(roi.style(style), {}, "Boundary");
var palette = ['#ffff00', '#ffcc00', '#008000', '#00ff00', '#ff0000', '#a52a2a', '#0000ff', '#808080'];
Map.addLayer(classified, {min: 1, max: 8, palette: palette}, 'Land Cover');

// Legend panel
var legendDict = {
  1: {label: 'Cropland', color: '#ffff00'},
  2: {label: 'Garden', color: '#ffcc00'},
  3: {label: 'Forest', color: '#008000'},
  4: {label: 'Grassland', color: '#00ff00'},
  5: {label: 'Built-up', color: '#ff0000'},
  6: {label: 'Transport', color: '#a52a2a'},
  7: {label: 'Water', color: '#0000ff'},
  8: {label: 'Bareland', color: '#808080'}
};
var legend = ui.Panel({style: {position: 'bottom-right', padding: '8px', backgroundColor: 'ffffffcc'}});
legend.add(ui.Label({value: 'Land Use', style: {fontWeight: 'bold', fontSize: '14px', margin: '0 0 6px 0', textAlign: 'center'}}));
Object.keys(legendDict).forEach(function(key) {
  var entry = legendDict[key];
  var colorBox = ui.Label({style: {backgroundColor: entry.color, padding: '8px', margin: '0 6px 4px 0'}});
  var desc = ui.Label({value: key + ' - ' + entry.label, style: {margin: '0 0 4px 0', fontSize: '12px'}});
  legend.add(ui.Panel({widgets: [colorBox, desc], layout: ui.Panel.Layout.Flow('horizontal')}));
});
Map.add(legend);

Export.image.toDrive({
  image: classified,
  description: '1990_land_cover',
  fileNamePrefix: '1990lc',
  scale: 30,
  region: roi.geometry(),
  crs: 'EPSG:32650',
  maxPixels: 1e13,
  fileFormat: 'GeoTIFF'
});
```

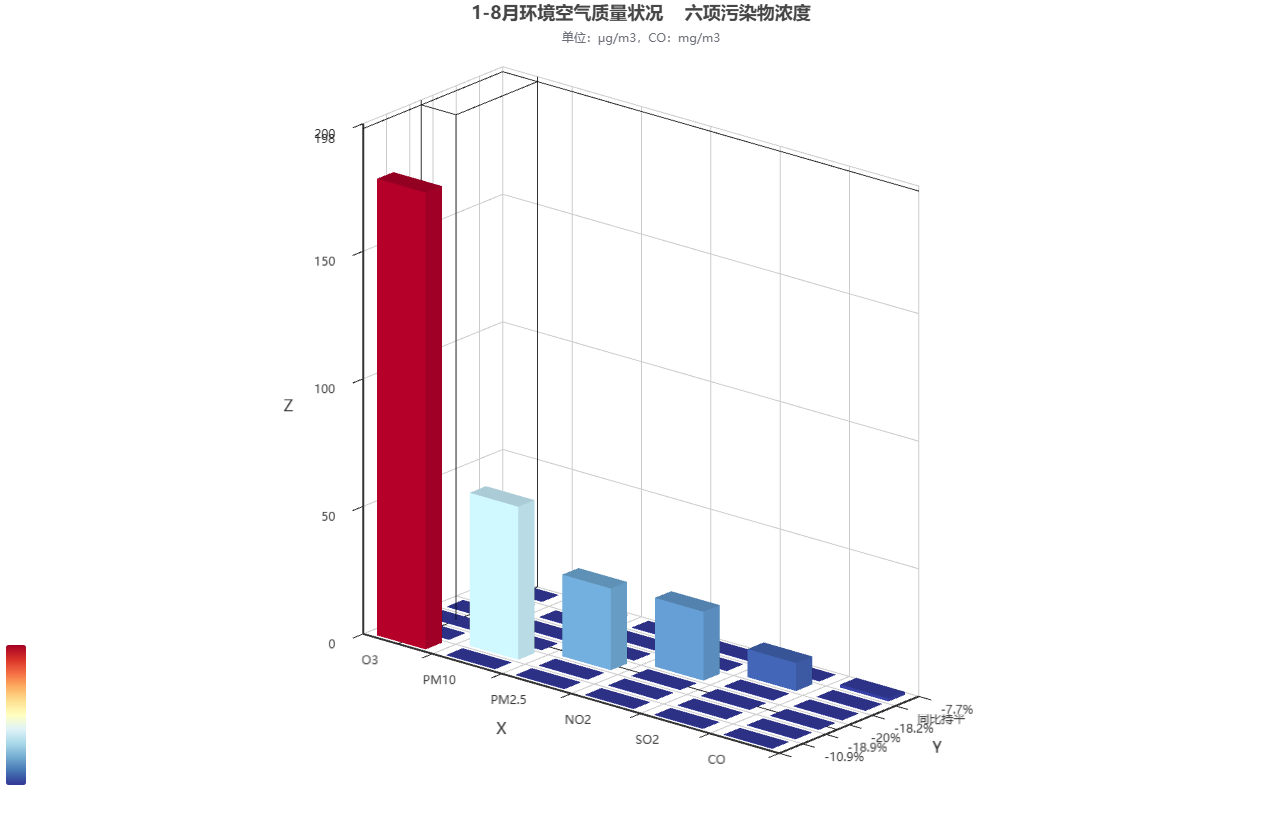

27. 3D Bar Chart

Tool: https://www.hasgg.com/bar3d-chart-creation

28. Heavy Metal Content Map of Anhui Section of Yangtze River

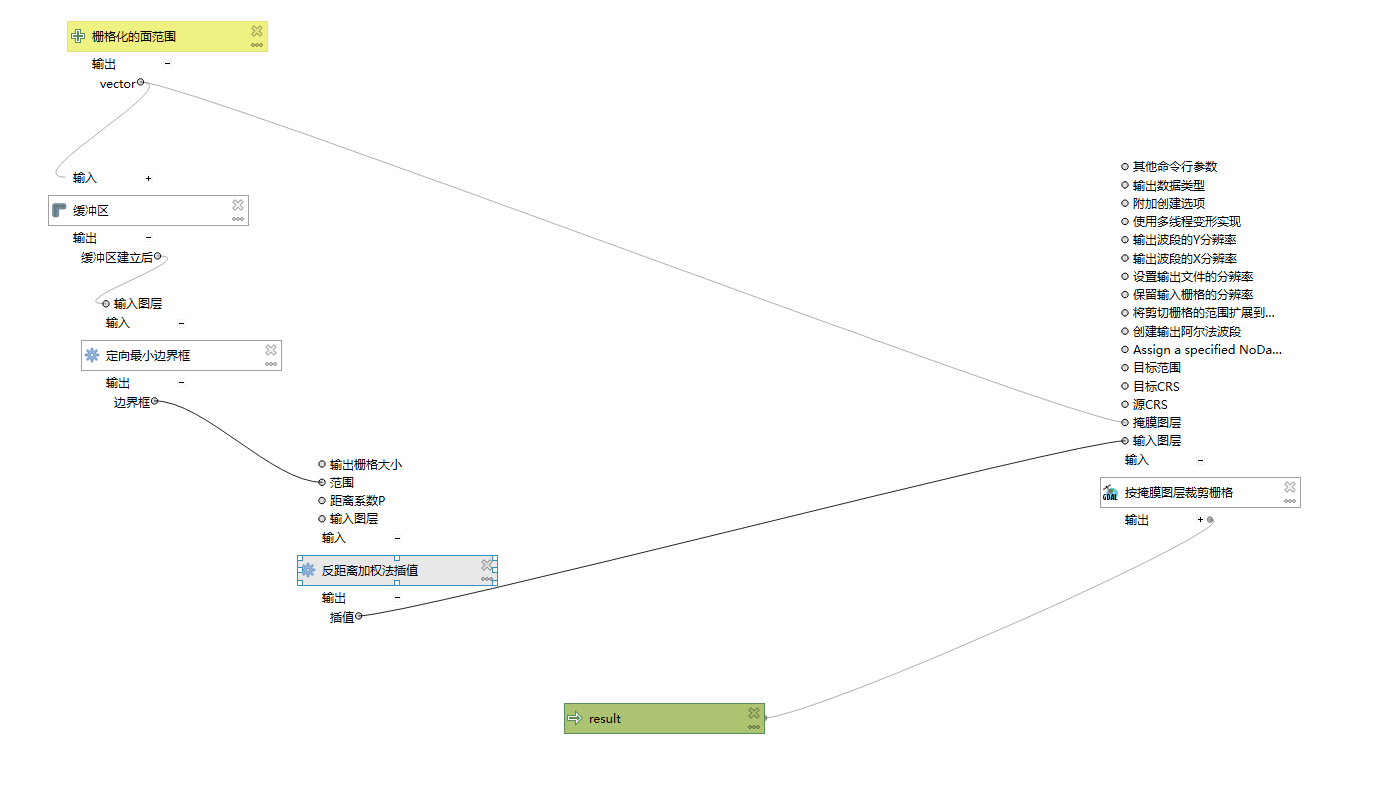

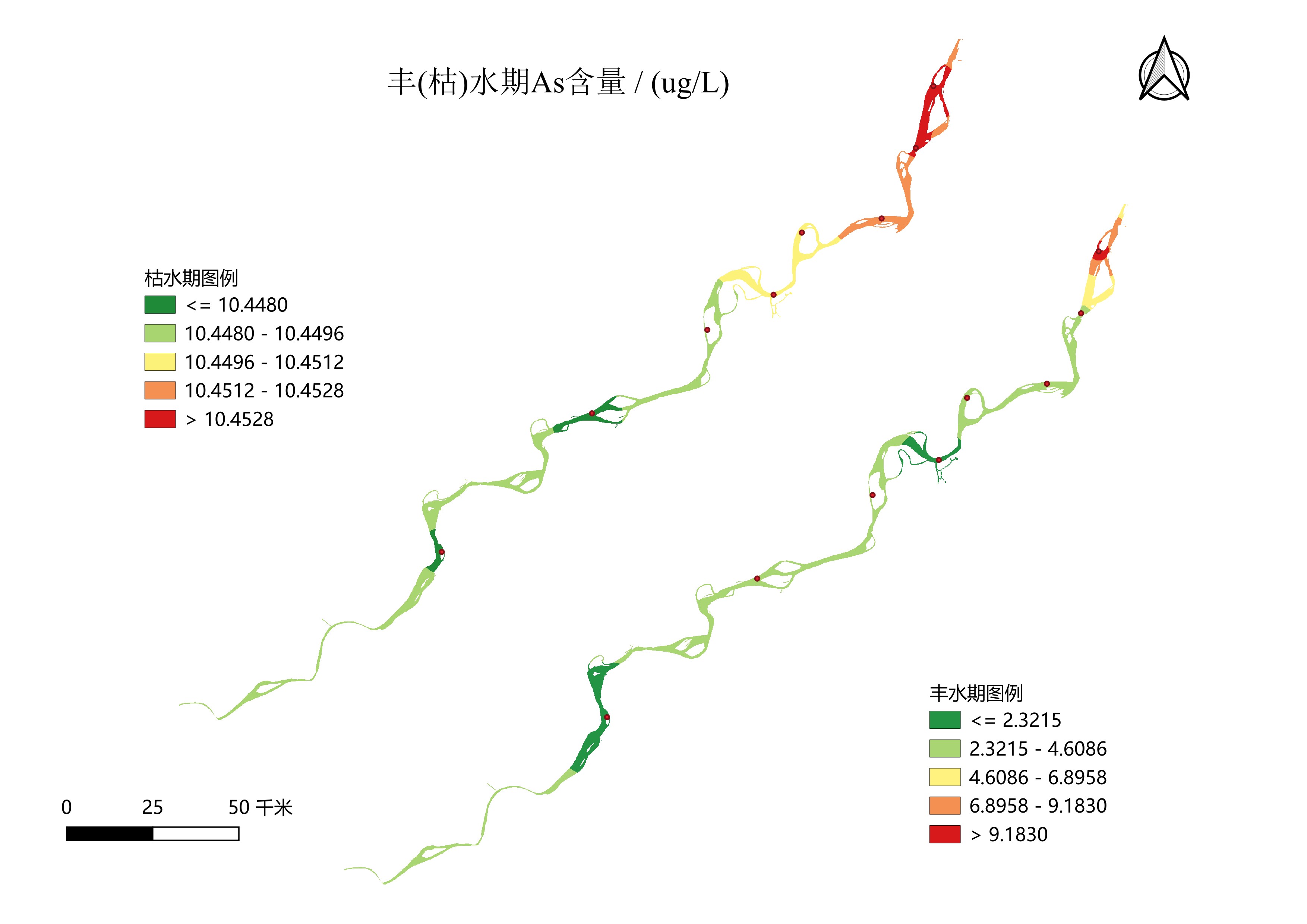

QGIS mapping: vectorize sampling points, interpolation, mask, raster symbology, then map layout. Also built a QGIS model for batch processing.
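The interpolation method is not recorded above; inverse distance weighting (IDW) is assumed here as one common choice for sampling-point data. A minimal NumPy sketch of the formula, value = Σ(wᵢ·vᵢ) / Σwᵢ with wᵢ = 1 / dᵢ^p; the function name and the default power p = 2 are assumptions.

```python
import numpy as np


def idw(sample_xy, sample_values, target_xy, power=2.0):
    """Inverse distance weighting: each target gets sum(w_i * v_i) / sum(w_i), w_i = 1 / d_i**power."""
    sample_xy = np.asarray(sample_xy, dtype=float)
    values = np.asarray(sample_values, dtype=float)
    target_xy = np.asarray(target_xy, dtype=float)
    d = np.linalg.norm(target_xy[:, None, :] - sample_xy[None, :, :], axis=2)  # (targets, samples)
    out = np.empty(len(target_xy))
    exact = d == 0
    on_sample = exact.any(axis=1)
    out[on_sample] = values[exact[on_sample].argmax(axis=1)]  # target sits on a sample point
    w = 1.0 / d[~on_sample] ** power
    out[~on_sample] = (w * values).sum(axis=1) / w.sum(axis=1)
    return out
```

In the QGIS workflow the same step produces the grid that is then masked to the river section and symbolized.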

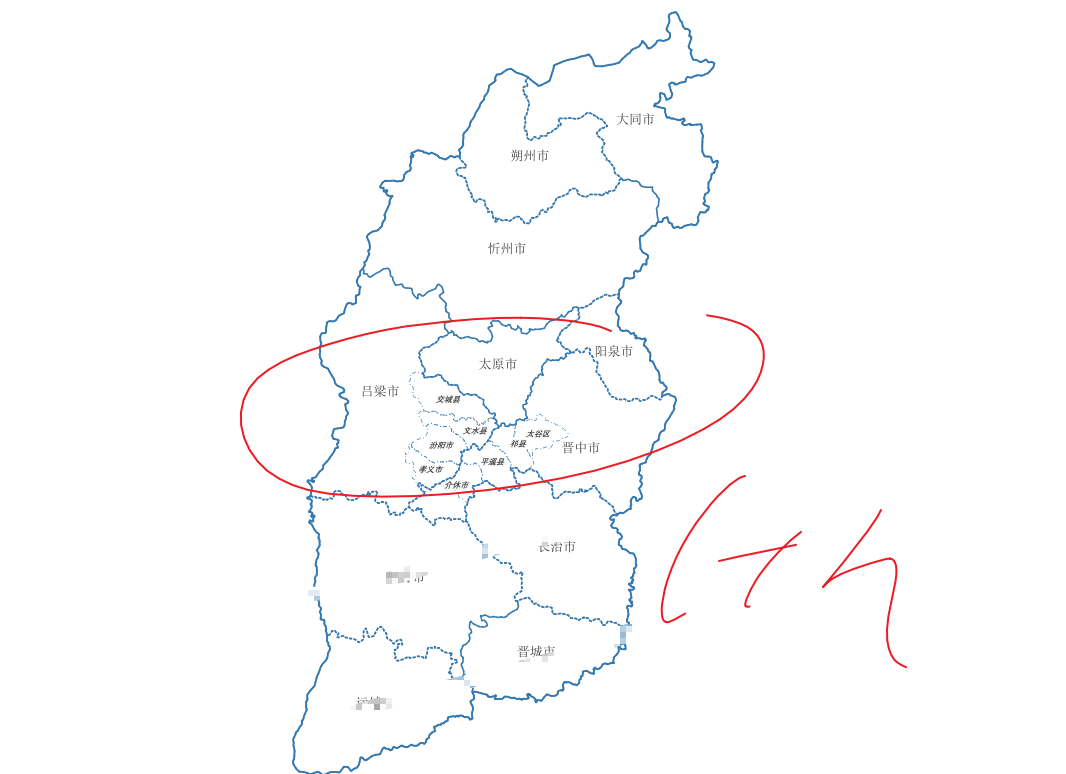

29. Location Map

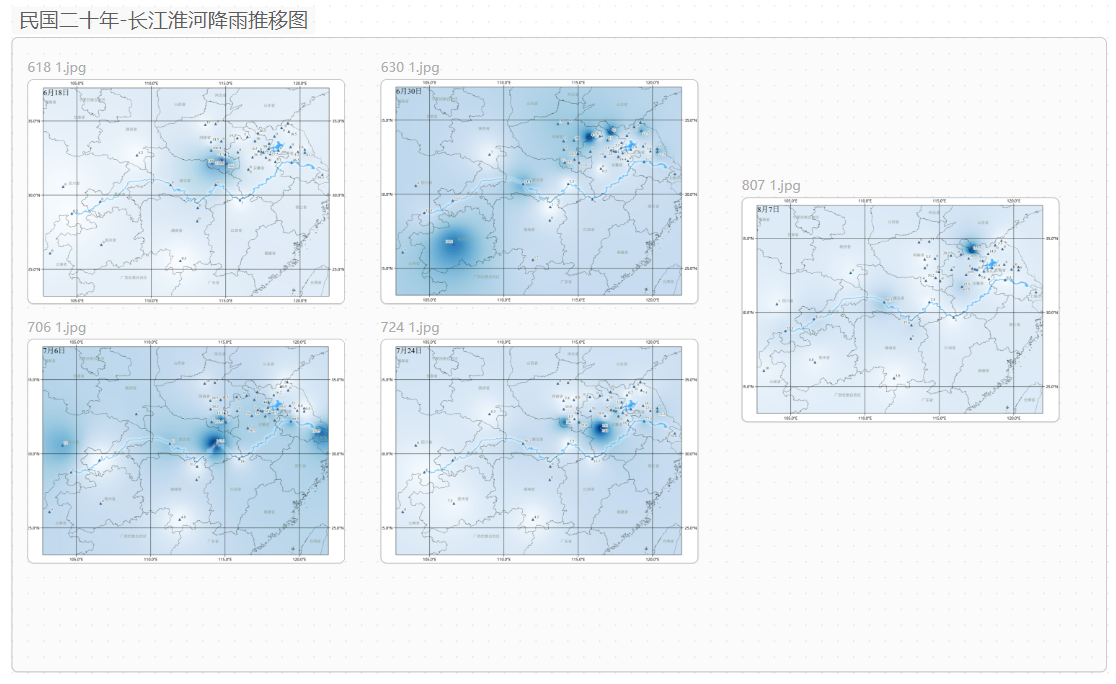

30. Rainfall Progression Map of Yangtze and Huai Rivers in 1931

31. Emergency Map

32. Zhangzhou Monitoring Points

33. Tourist Route + Heatmap

34. Raster Gap Filling

Client needs: two rasters, one large and one small. Fill the NoData cells of the small raster with a custom value (0 here), over the extent of the large raster.

Logic:

| small | large | result |
| --- | --- | --- |
| has value | any | keep small |
| NoData | has value | fill 0 |
| NoData | NoData | NoData |

Answer:

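ArcGIS Pro (Raster Calculator, with the processing extent set to the large raster): Con(IsNull("small"), Con(IsNull("large"), "large", 0), "small")

QGIS: ( ("small" IS NULL) * 0 ) + ( ("small" IS NOT NULL) * "small" ) + ( ("small" IS NULL) * "large" )

Note: as written, the QGIS expression fills NoData cells of small with the large value rather than 0, which does not match the table above, and the QGIS Raster Calculator may propagate NoData through the arithmetic; check the output before delivering.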
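The same decision table expressed with NumPy, as a sketch to check either GUI result against. It assumes both rasters are already read as arrays on the same grid (the small raster padded to the large extent) with NoData stored as NaN; the function name is hypothetical.

```python
import numpy as np


def fill_small_nodata(small, large, fill_value=0.0):
    """Keep small where it has data; else fill_value where large has data; else NoData (NaN)."""
    small = np.asarray(small, dtype=float)
    large = np.asarray(large, dtype=float)
    return np.where(~np.isnan(small), small,
                    np.where(~np.isnan(large), fill_value, np.nan))
```

With rasterio or GDAL, convert each band's nodata value to NaN after reading and back before writing.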

36. Site Selection Analysis

Client needed site selection for distribution center.

37. FOS Landslide Failure Probability Calculation

Safety factor in slope engineering.
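The factor of safety (FOS) is the ratio of resisting to driving forces, and the slope fails when FOS < 1; the failure probability can be estimated as the share of Monte Carlo samples of the uncertain soil parameters that give FOS < 1. The slope model used in this task is not recorded, so this sketch assumes the dry infinite-slope model FS = c' / (γ z sinβ cosβ) + tanφ' / tanβ with normally distributed c' and φ'; all names and parameter values are illustrative.

```python
import math
import random


def infinite_slope_fos(c, phi_deg, gamma, z, beta_deg):
    """Dry infinite-slope factor of safety.

    c: cohesion (kPa), phi_deg: friction angle, gamma: unit weight (kN/m3),
    z: depth of the slip surface (m), beta_deg: slope angle.
    """
    b = math.radians(beta_deg)
    phi = math.radians(phi_deg)
    return c / (gamma * z * math.sin(b) * math.cos(b)) + math.tan(phi) / math.tan(b)


def failure_probability(n, c_mean, c_sd, phi_mean, phi_sd, gamma, z, beta_deg, seed=0):
    """Monte Carlo estimate of P(FOS < 1) with normal c and phi (cohesion truncated at 0)."""
    rng = random.Random(seed)
    failures = 0
    for _ in range(n):
        c = max(0.0, rng.gauss(c_mean, c_sd))
        phi = rng.gauss(phi_mean, phi_sd)
        if infinite_slope_fos(c, phi, gamma, z, beta_deg) < 1.0:
            failures += 1
    return failures / n
```

Applied per raster cell (slope angle from a DEM, soil parameters from a lookup table), this gives a failure probability map.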

38. Confusion Matrix

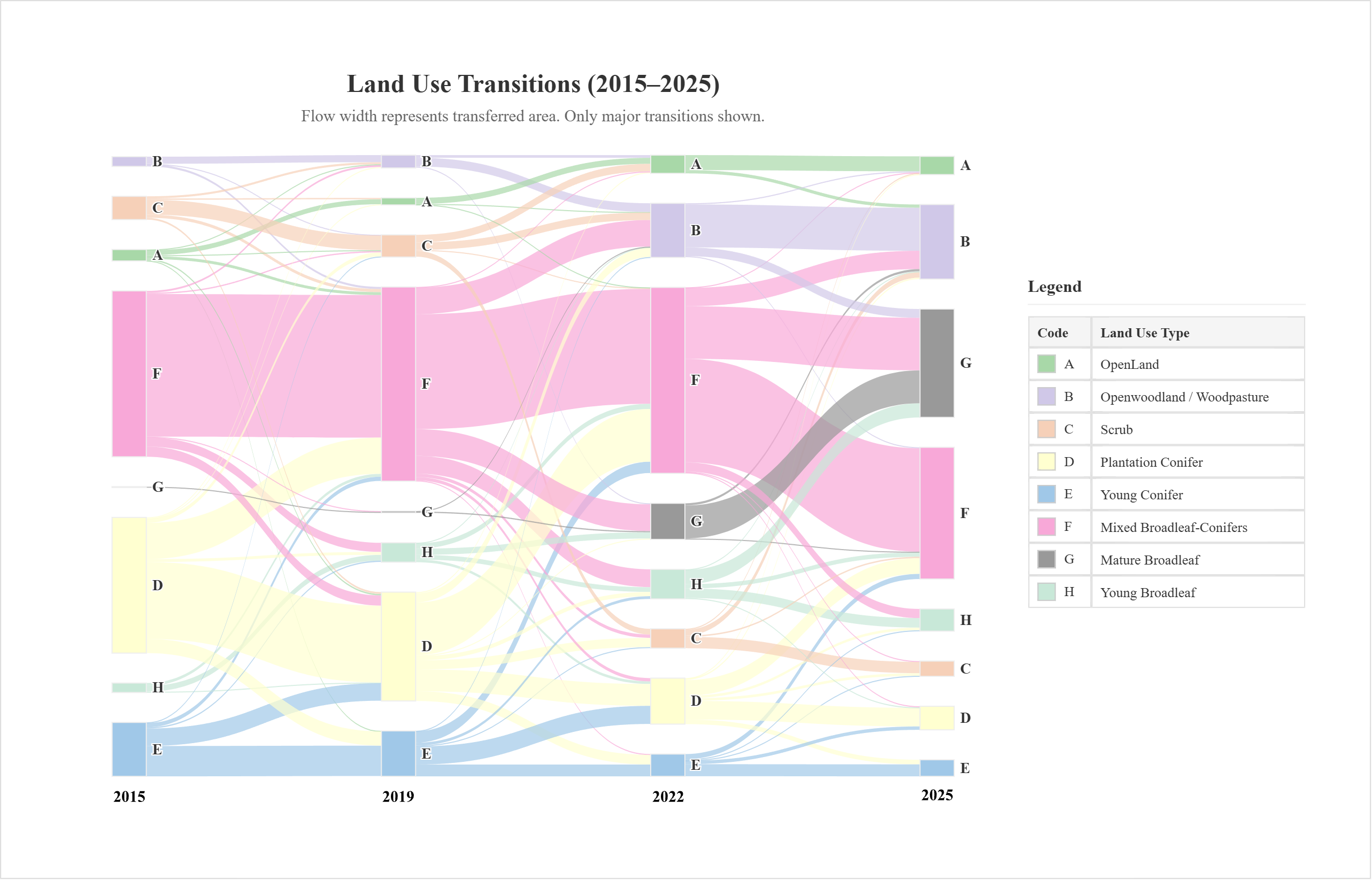

Land use map, confusion matrix with OA, PA, UA and Kappa, and a Sankey diagram of land use transitions (an ECharts page that reads land_use_transitions.csv).

```html
<!DOCTYPE html>
<html lang="en">
<head>
  <meta charset="UTF-8">
  <title>Land Use Transitions (2015–2025)</title>
  <script src="https://cdn.jsdelivr.net/npm/echarts@5.4.3/dist/echarts.min.js"></script>
  <script src="https://cdn.jsdelivr.net/npm/papaparse@5.4.1/papaparse.min.js"></script>
  <script src="https://cdnjs.cloudflare.com/ajax/libs/html2canvas/1.4.1/html2canvas.min.js"></script>
  <style>
    body { font-family: 'Times New Roman', Times, serif; background-color: #f0f0f0; margin: 0; color: #333; text-align: center; }
    #export-wrapper { background-color: #ffffff; box-sizing: content-box; margin: 20px auto; width: 1200px; }
    .wrapper { padding: 20px; background-color: #ffffff; box-shadow: 0 2px 8px rgba(0,0,0,0.1); }
    .content-container { display: flex; align-items: center; gap: 25px; }
    #main { flex: 1; height: 700px; }
    #legend-container { flex-basis: 260px; flex-shrink: 0; text-align: left; }
    #legend-container h4 { margin-top: 5px; margin-bottom: 10px; font-size: 16px; border-bottom: 1px solid #eee; padding-bottom: 8px; }
    .legend-table { width: 100%; border-collapse: collapse; font-size: 13px; }
    .legend-table th, .legend-table td { border: 1px solid #e0e0e0; padding: 6px 8px; text-align: left; }
    .legend-table th { background-color: #f5f5f5; }
    .color-sample { display: inline-block; width: 15px; height: 15px; margin-right: 8px; border: 1px solid #ccc; vertical-align: middle; }
    #export-btn { margin-top: 15px; padding: 10px 20px; font-size: 16px; font-family: 'Times New Roman', Times, serif; cursor: pointer; border: 1px solid #3d85c6; background-color: #4a86e8; color: white; border-radius: 4px; transition: background-color 0.2s; }
    #export-btn:hover { background-color: #3d85c6; }
  </style>
</head>
<body>
  <div id="export-wrapper">
    <div class="wrapper" id="capture-area">
      <div class="content-container">
        <div id="main"></div>
        <div id="legend-container">
          <h4>Legend</h4>
          <table class="legend-table">
            <thead><tr><th>Code</th><th>Land Use Type</th></tr></thead>
            <tbody>
              <tr><td><div class="color-sample" style="background-color:#a8d8a8;"></div>A</td><td>OpenLand</td></tr>
              <tr><td><div class="color-sample" style="background-color:#d0c8e8;"></div>B</td><td>Openwoodland / Woodpasture</td></tr>
              <tr><td><div class="color-sample" style="background-color:#f6d0b8;"></div>C</td><td>Scrub</td></tr>
              <tr><td><div class="color-sample" style="background-color:#ffffd0;"></div>D</td><td>Plantation Conifer</td></tr>
              <tr><td><div class="color-sample" style="background-color:#a0c8e8;"></div>E</td><td>Young Conifer</td></tr>
              <tr><td><div class="color-sample" style="background-color:#f8a8d8;"></div>F</td><td>Mixed Broadleaf-Conifers</td></tr>
              <tr><td><div class="color-sample" style="background-color:#999999;"></div>G</td><td>Mature Broadleaf</td></tr>
              <tr><td><div class="color-sample" style="background-color:#c8e8d8;"></div>H</td><td>Young Broadleaf</td></tr>
            </tbody>
          </table>
        </div>
      </div>
    </div>
  </div>
  <button id="export-btn">Export as PNG</button>
  <script>
    // Class colours; CSV codes DN 0-7 map to legend codes A-H
    const colorMap = {
      'A': '#a8d8a8', 'B': '#d0c8e8', 'C': '#f6d0b8', 'D': '#ffffd0',
      'E': '#a0c8e8', 'F': '#f8a8d8', 'G': '#999999', 'H': '#c8e8d8'
    };
    const labelMap = {0: 'A', 1: 'B', 2: 'C', 3: 'D', 4: 'E', 5: 'F', 6: 'G', 7: 'H'};
    const years = ['2015', '2019', '2022', '2025'];
    const fixedOrder = ['A', 'B', 'C', 'D', 'E', 'F', 'G', 'H'];
    const myChart = echarts.init(document.getElementById('main'), null, { renderer: 'svg' });

    // Expects columns DN2015, DN2019, DN2022, DN2025 and area_last (m²).
    // download: true fetches the file, so serve the page over HTTP rather than file://.
    Papa.parse("land_use_transitions.csv", {
      download: true,
      header: true,
      complete: function (results) {
        // Sum area per class-to-class transition between consecutive years
        const linkCounter = {};
        results.data.forEach(row => {
          const dn2015 = parseInt(row.DN2015), dn2019 = parseInt(row.DN2019),
                dn2022 = parseInt(row.DN2022), dn2025 = parseInt(row.DN2025);
          const area = parseFloat(row.area_last);
          if (isNaN(area)) return;
          const transitions = [
            { from: dn2015, to: dn2019, years: ['2015', '2019'] },
            { from: dn2019, to: dn2022, years: ['2019', '2022'] },
            { from: dn2022, to: dn2025, years: ['2022', '2025'] }
          ];
          transitions.forEach(t => {
            if (![0, 1, 2, 3, 4, 5, 6, 7].includes(t.from) || ![0, 1, 2, 3, 4, 5, 6, 7].includes(t.to)) return;
            const source = `${labelMap[t.from]} (${t.years[0]})`, target = `${labelMap[t.to]} (${t.years[1]})`;
            const key = `${source}→${target}`;
            linkCounter[key] = (linkCounter[key] || 0) + area;
          });
        });

        // Links plus the set of nodes that actually occur
        const nodesSet = new Set();
        const links = [];
        for (const [key, value] of Object.entries(linkCounter)) {
          const [source, target] = key.split('→');
          nodesSet.add(source);
          nodesSet.add(target);
          links.push({ source, target, value });
        }

        // Nodes for every year in legend order A-H, keeping only those used by a link
        const nodeMap = {};
        years.forEach(year => {
          fixedOrder.forEach(code => {
            const name = `${code} (${year})`;
            nodeMap[name] = { name, itemStyle: { color: colorMap[code] } };
          });
        });
        const existingNodes = Object.values(nodeMap).filter(n => nodesSet.has(n.name));
        existingNodes.sort((a, b) => fixedOrder.indexOf(a.name[0]) - fixedOrder.indexOf(b.name[0]));

        const sankeyOption = {
          type: 'sankey', data: existingNodes, links,
          top: '12%', bottom: '5%', left: '5%', right: '5%',
          emphasis: { focus: 'adjacency' },
          lineStyle: { color: 'source', opacity: 0.7, curveness: 0.5, width: 1.5 },
          label: {
            position: 'right', fontSize: 14, fontWeight: 'bold', fontFamily: 'Times New Roman',
            // Show only the class code, e.g. "A" for "A (2015)"
            formatter: params => params.name ? params.name.split(' ')[0] : ''
          },
          nodeWidth: 32, nodeGap: 28,
          itemStyle: { borderWidth: 1, borderColor: '#eee' },
          // 0 iterations keeps nodes in data order (A-H from top to bottom)
          layoutIterations: 0
        };

        const baseOption = {
          title: {
            text: 'Land Use Transitions (2015–2025)',
            subtext: 'Flow width represents area. Only major transitions shown.',
            left: 'center',
            textStyle: { fontSize: 24, fontWeight: 'bold', fontFamily: 'Times New Roman', color: '#333' },
            subtextStyle: { fontSize: 15.5, fontFamily: 'Times New Roman', color: '#666' }
          },
          series: [sankeyOption]
        };
        myChart.setOption(baseOption, true);

        // Year labels centred under the node columns, just below the sankey box (bottom: 5%).
        // Assumes all four year columns are present; ECharts spaces sankey columns evenly,
        // column i starting at i * (boxWidth - nodeWidth) / (columns - 1).
        const chartW = myChart.getWidth();
        const boxLeft = chartW * 0.05, boxWidth = chartW * 0.9;
        const step = (boxWidth - sankeyOption.nodeWidth) / (years.length - 1);
        const labelY = myChart.getHeight() * 0.95 + 8;
        const yearLabels = existingNodes.length === 0 ? [] : years.map((year, i) => ({
          type: 'text',
          x: boxLeft + i * step + sankeyOption.nodeWidth / 2,
          y: labelY,
          style: { text: year, fill: '#333', fontSize: 16, fontWeight: 'bold', fontFamily: 'Times New Roman', textAlign: 'center' },
          z: 100
        }));

        myChart.setOption({
          ...baseOption,
          tooltip: {
            formatter: params => (params.data.value !== undefined)
              ? `<strong>${params.data.source}</strong> → <strong>${params.data.target}</strong><br/>Area: ${params.data.value.toFixed(0)} m²`
              : params.name,
            backgroundColor: 'white', borderColor: '#ccc', borderWidth: 1, padding: [10, 15],
            textStyle: { fontSize: 12, fontFamily: 'Times New Roman' }
          },
          graphic: yearLabels
        });
      },
      error: function (e) { console.error(e); alert("Could not load data."); }
    });

    // Export chart + legend as a 2x PNG; the button is hidden while capturing
    document.getElementById('export-btn').addEventListener('click', function () {
      const btn = this;
      const wrapper = document.getElementById('export-wrapper');
      const origPad = wrapper.style.padding;
      const origBorder = wrapper.style.border;
      const restore = () => {
        wrapper.style.padding = origPad;
        wrapper.style.border = origBorder;
        btn.style.display = '';
      };
      wrapper.style.padding = '40px';
      wrapper.style.border = '1px solid #ddd';
      btn.style.display = 'none';
      html2canvas(wrapper, { scale: 2, useCORS: true, backgroundColor: '#ffffff' }).then(canvas => {
        const link = document.createElement('a');
        link.download = 'Land_Use_Transitions.png';
        link.href = canvas.toDataURL('image/png');
        link.click();
        restore();
      }).catch(err => {
        console.error(err);
        restore();
      });
    });
  </script>
</body>
</html>
```

```python
import numpy as np
import matplotlib.pyplot as plt
import seaborn as sns
from sklearn.metrics import cohen_kappa_score

plt.rcParams['font.family'] = 'Times New Roman'
plt.rcParams['font.size'] = 12
plt.rcParams['axes.labelsize'] = 12
plt.rcParams['axes.titlesize'] = 16
plt.rcParams['xtick.labelsize'] = 11
plt.rcParams['ytick.labelsize'] = 11
plt.rcParams['legend.fontsize'] = 10

# Rows = reference (true) class, columns = predicted class, both in order A-H
cm_2022 = np.array([
    [124,   2,   4,   1,   0,   0,   0,   0],
    [  8, 117,   6,   0,   0,   2,   3,   0],
    [  5,   1, 127,   1,   0,   2,   2,   0],
    [  0,   4,   2, 133,   3,   1,   0,   4],
    [  1,   0,   2,   3, 111,   0,   0,   2],
    [  3,  18,   4,   5,   4, 120,   8,   8],
    [  2,   2,   1,   1,   0,   2, 113,   2],
    [  2,   1,   0,   0,   1,   2,   2, 129]
])

label_names = {
    'A': 'OpenLand', 'B': 'Openwoodland/woodpasture', 'C': 'Scrub', 'D': 'PlantationConifer',
    'E': 'YoungConifer', 'F': 'MixedBroadleafConifers', 'G': 'MatureBroadleaf', 'H': 'YoungBroadleaf'
}
labels = list(label_names.keys())
n = len(labels)

total = cm_2022.sum()
row_sums = cm_2022.sum(axis=1)  # reference totals
col_sums = cm_2022.sum(axis=0)  # predicted totals
# Producer's accuracy = diagonal / reference total; user's accuracy = diagonal / predicted total
pa = [cm_2022[i, i] / row_sums[i] if row_sums[i] > 0 else 0 for i in range(n)]
ua = [cm_2022[i, i] / col_sums[i] if col_sums[i] > 0 else 0 for i in range(n)]
oa = np.trace(cm_2022) / total

# Expand the matrix into (true, predicted) label pairs so sklearn can compute Cohen's kappa
true_labels, pred_labels = [], []
for i in range(n):
    for j in range(n):
        count = int(cm_2022[i, j])
        true_labels.extend([i] * count)
        pred_labels.extend([j] * count)
kappa = cohen_kappa_score(true_labels, pred_labels)

print(f"Total: {total}, OA: {oa:.4f}, Kappa: {kappa:.4f}")
print("Class\tPA\tUA")
for i, lab in enumerate(labels):
    print(f"{lab}\t{pa[i]:.3f}\t{ua[i]:.3f}")

# Cell shade = UA of the predicted class, so every cell in column j gets ua[j]
color_matrix = np.array([ua] * n)

# Diagonal cells show count, PA and UA; off-diagonal cells show the count only
annot = np.empty_like(cm_2022, dtype=object)
for i in range(n):
    for j in range(n):
        if i == j:
            annot[i, j] = f"{cm_2022[i, j]}\n(PA:{pa[i]:.3f}\nUA:{ua[j]:.3f})"
        else:
            annot[i, j] = str(cm_2022[i, j])


def get_text_color(val, vmin=0.8, vmax=1.0):
    """White text on dark cells, black on light ones ('binary' gets darker as UA grows)."""
    norm = (val - vmin) / (vmax - vmin)
    return 'black' if norm < 0.6 else 'white'


text_colors = np.array([[get_text_color(ua[j]) for j in range(n)] for _ in range(n)])

fig, ax = plt.subplots(figsize=(14, 10))
hm = sns.heatmap(color_matrix, annot=annot, fmt="", cmap="binary", cbar=True,
                 cbar_kws={"shrink": 0.8, "label": "User's Accuracy (UA)", "pad": 0.02},
                 square=True, linewidths=0.5, linecolor='white',
                 xticklabels=labels, yticklabels=labels, annot_kws={"size": 10},
                 ax=ax, vmin=0.8, vmax=1.0)
# Annotation texts are stored row by row
for i in range(n):
    for j in range(n):
        hm.texts[i * n + j].set_color(text_colors[i, j])

ax.set_title(f"Confusion Matrix with PA and UA\nOA: {oa:.4f} Kappa: {kappa:.4f}",
             fontsize=16, fontweight='bold', pad=20)
ax.set_xlabel("Predicted Label")
ax.set_ylabel("True Label")

legend_text = "Legend: " + " ".join([f"{k}={v}" for k, v in label_names.items()])
plt.figtext(0.5, 0.02, legend_text, ha='center', fontsize=10)
plt.tight_layout(rect=[0, 0.08, 1, 1])
plt.savefig("confusion_matrix.png", dpi=300, bbox_inches='tight')
plt.show()
```

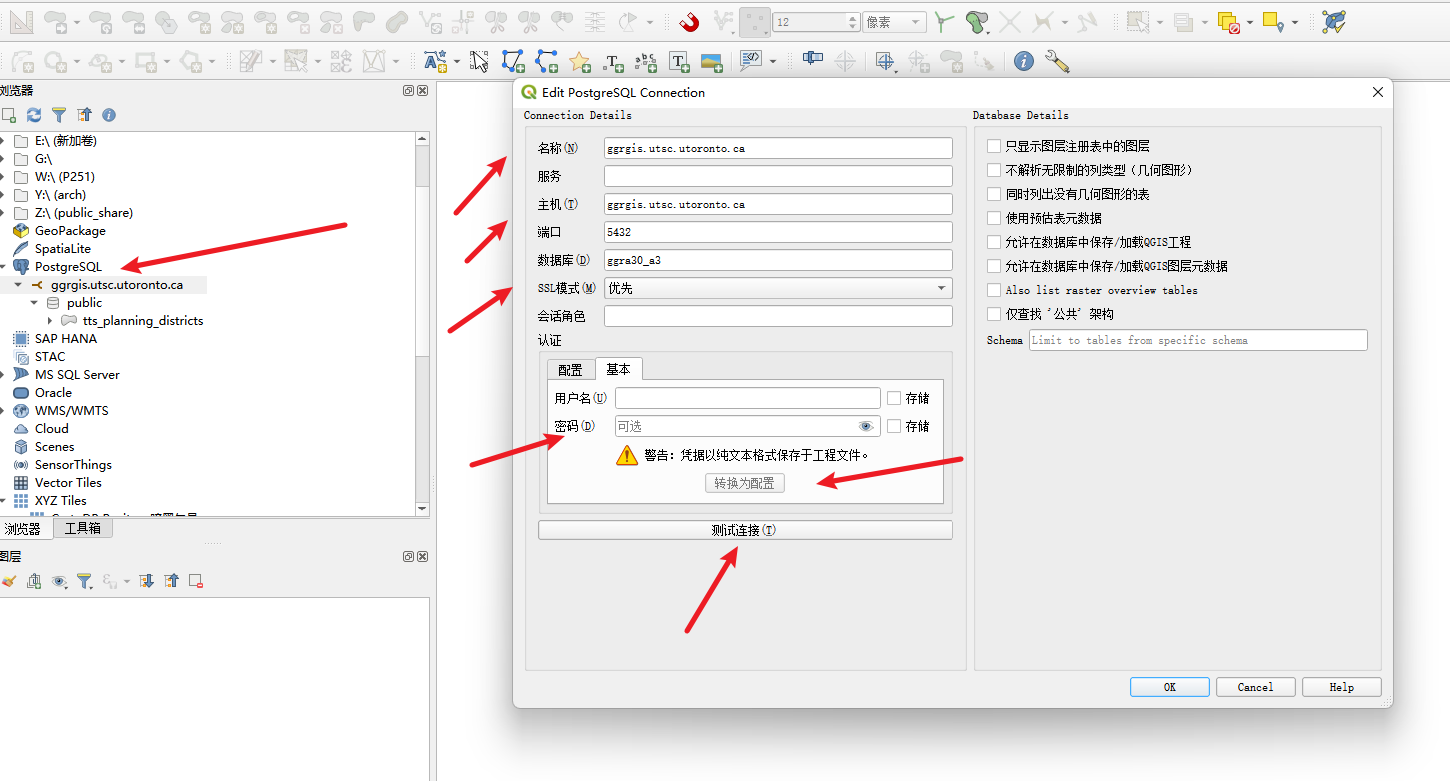

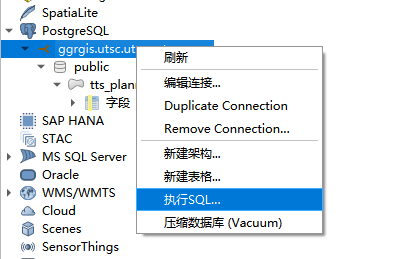

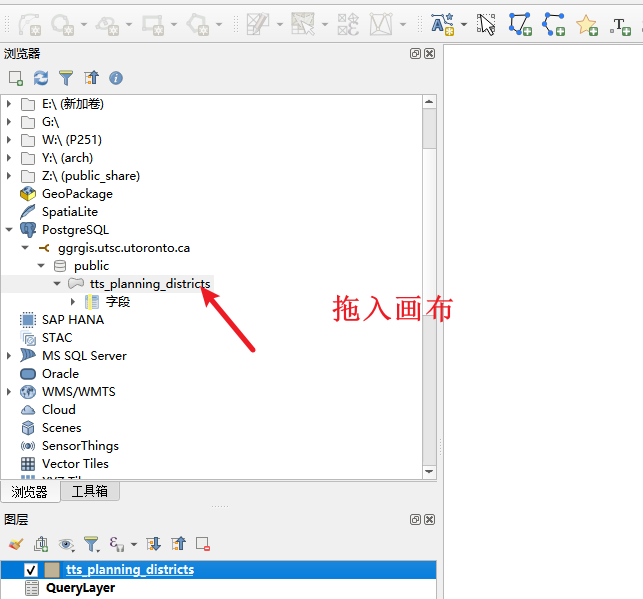

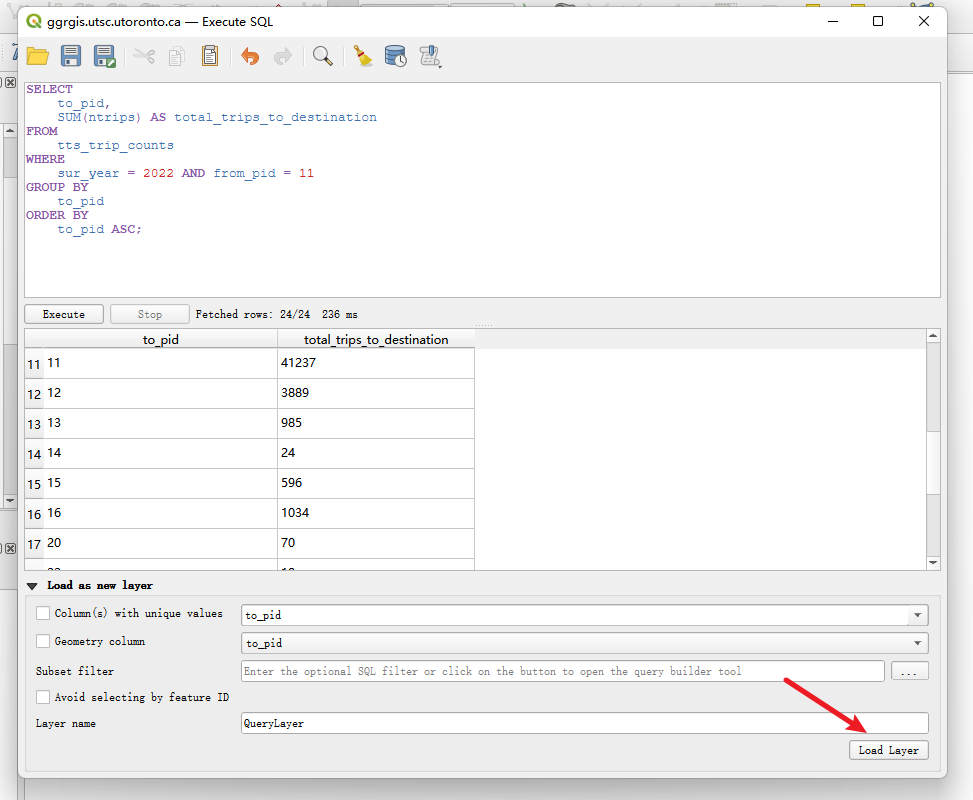

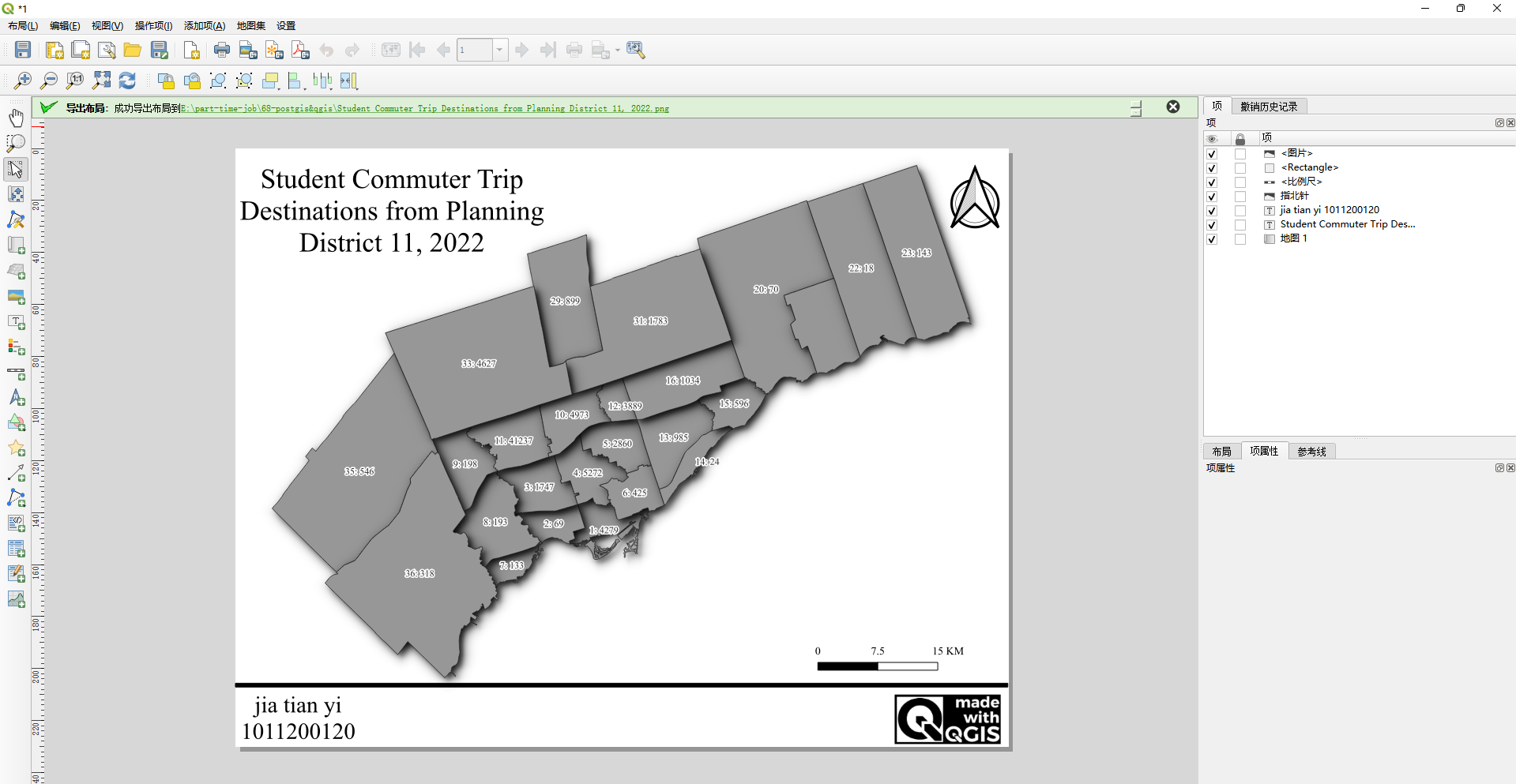

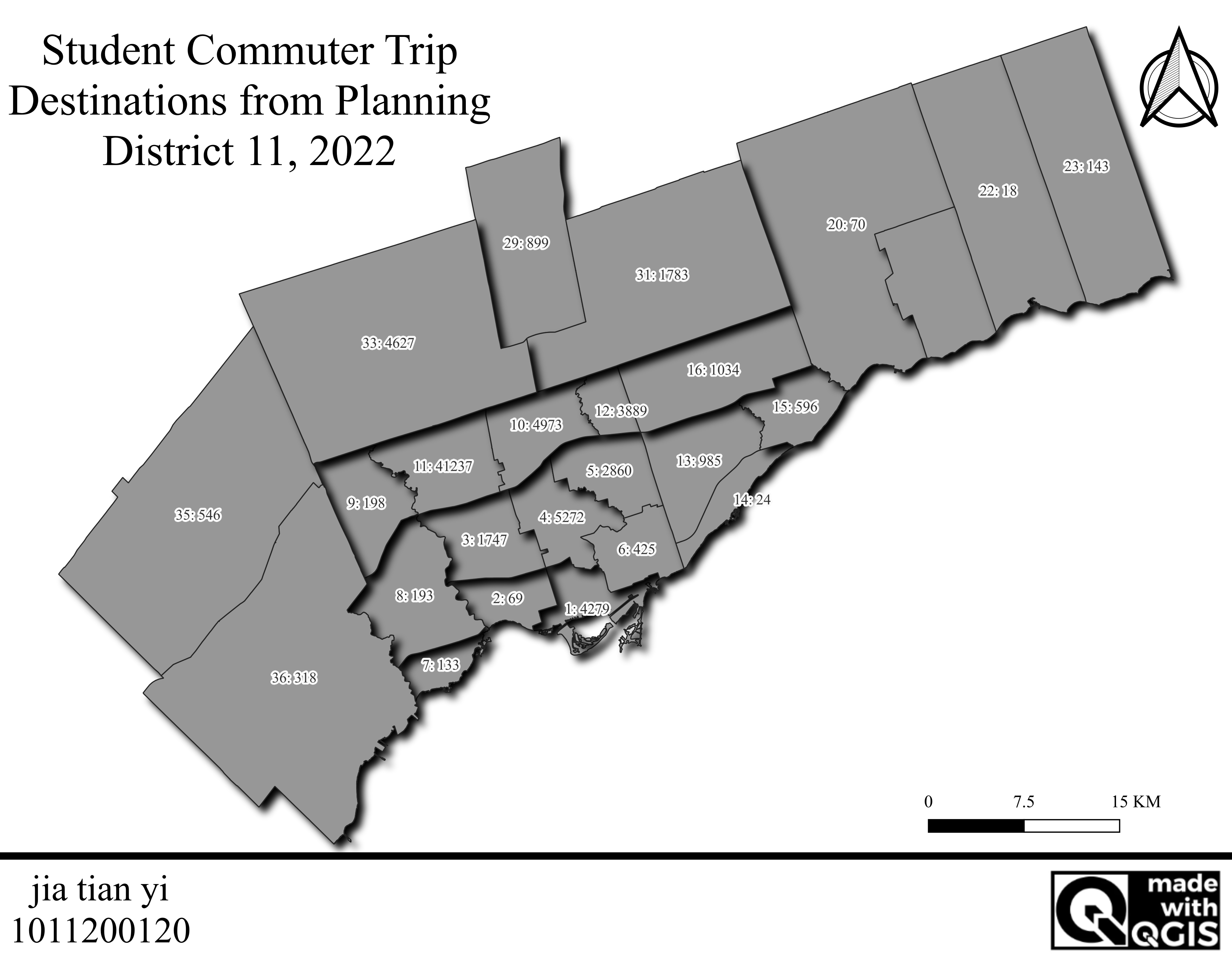

68. Working with PostgreSQL in QGIS

Preparation: Define District

All work is based on one TTS Planning District (PD), assigned by the last digit of the student ID; a last digit of 0 corresponds to PD 11.

Preparation: Connect Database

Data from Transportation Tomorrow Surveys (TTS). Tables:

- tts_planning_districts: geographic data for PDs. Fields: pid, name, pop_pt_student, pop_ft_student, etc.

- tts_trip_counts: daily commute trips for part-time and full-time students. Fields: sur_year, from_pid, to_pid, mode, ntrips.

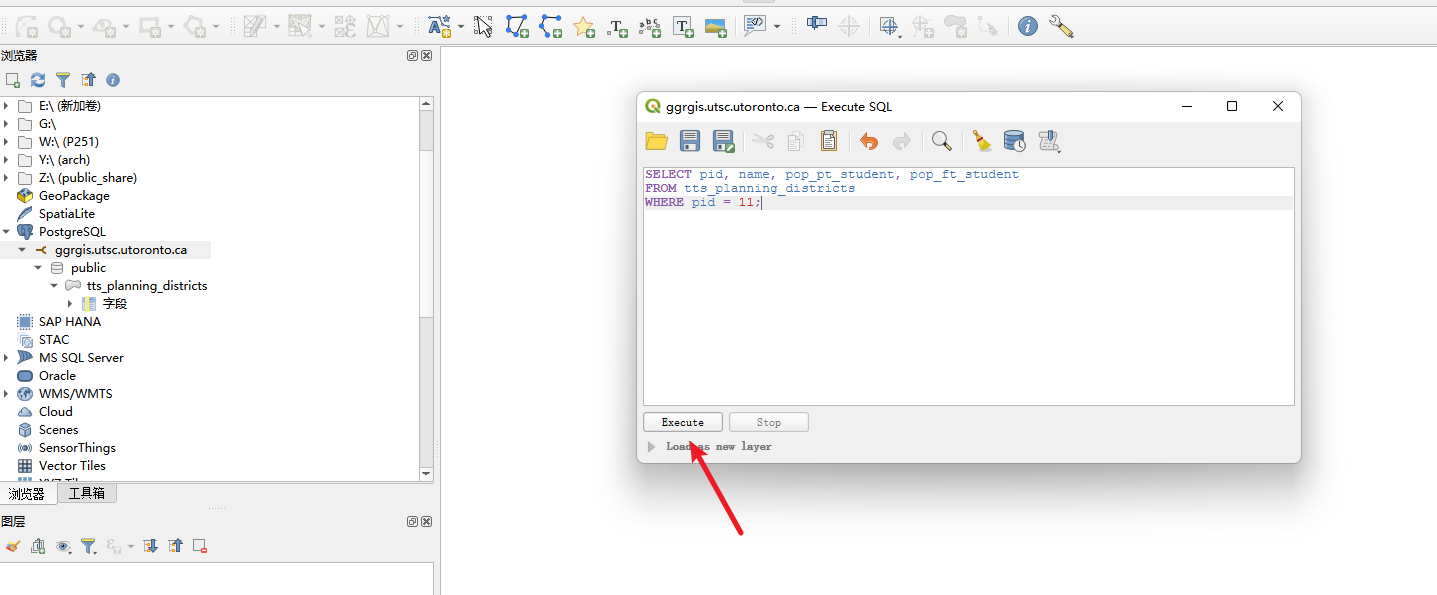

Part 1: SQL Queries (Section 3.2)

Right-click the database connection and choose Execute SQL.

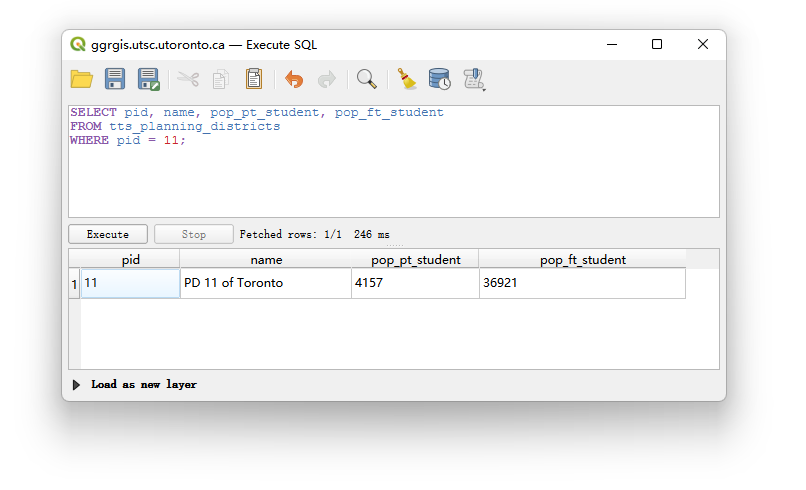

Question 1 (1 pt): List pid, name, pop_pt_student, pop_ft_student for PD 11.

```sql
SELECT pid, name, pop_pt_student, pop_ft_student
FROM tts_planning_districts
WHERE pid = 11;
```

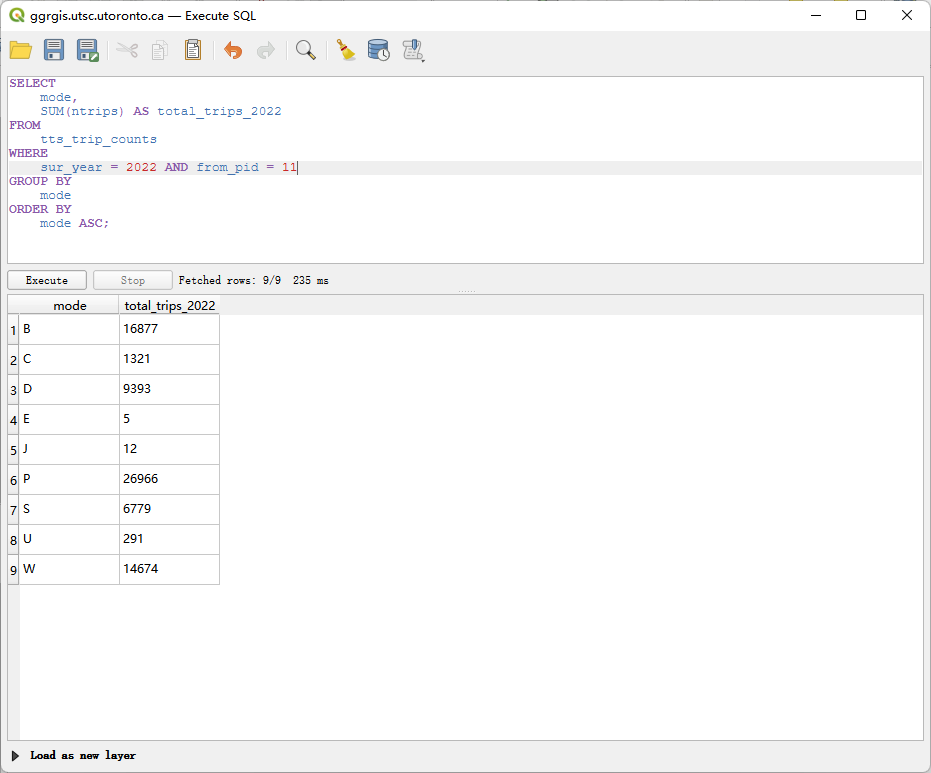

Question 2 (2 pt): Count trips from PD 11 in 2022, grouped by mode.

```sql
SELECT mode, SUM(ntrips) AS total_trips_2022
FROM tts_trip_counts
WHERE sur_year = 2022 AND from_pid = 11
GROUP BY mode
ORDER BY mode;
```

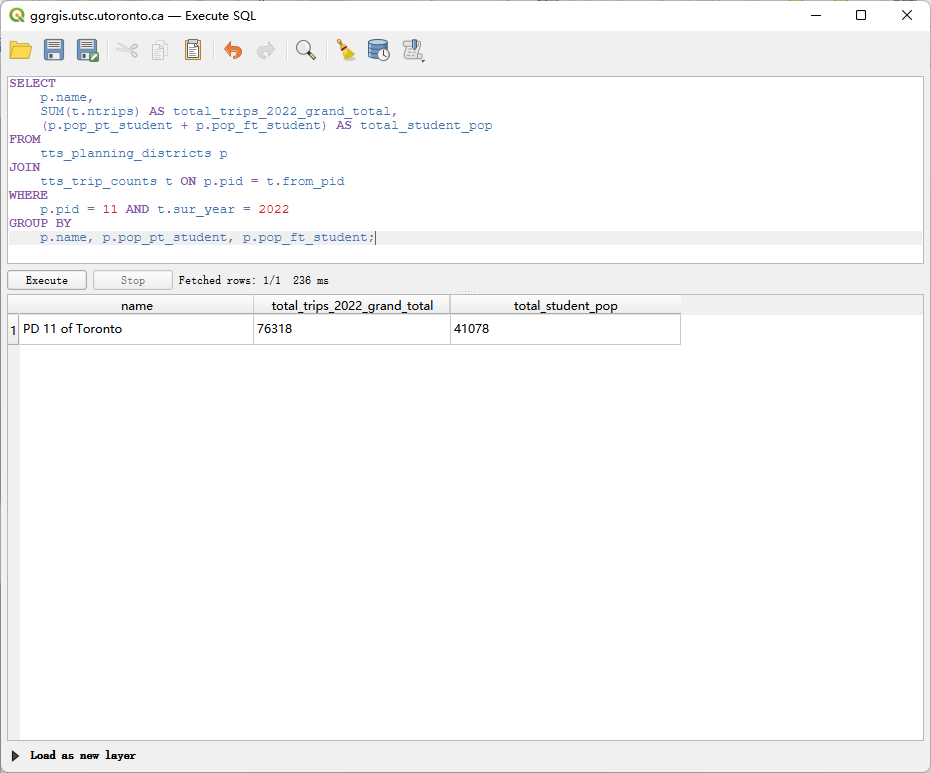

Question 3 (3 pt): Join tables to show name, total trips from PD 11 in 2022, and total student population. Explain consistency with Q2.

```sql
SELECT p.name,
       SUM(t.ntrips) AS total_trips_2022,
       (p.pop_pt_student + p.pop_ft_student) AS total_student_pop
FROM tts_planning_districts p
JOIN tts_trip_counts t ON p.pid = t.from_pid
WHERE p.pid = 11 AND t.sur_year = 2022
GROUP BY p.name, p.pop_pt_student, p.pop_ft_student;
```

Explanation: Results are consistent. Sum of all mode trips in Q2 equals total_trips_2022 in Q3 (160,042). Both queries use same base data.
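The consistency argument can be checked mechanically: Q2 and Q3 filter the same trip rows, and because each pid appears only once in tts_planning_districts, the join does not duplicate any trip row (if a pid appeared twice, the joined total would double). A sketch using Python's built-in sqlite3 with hypothetical toy rows, not TTS data; the queries follow Q2 and Q3 with the literals turned into parameters.

```python
import sqlite3


def per_mode_and_joined_totals(districts, trips, pid=11, year=2022):
    """Run Q2- and Q3-style queries on toy data; return (sum of per-mode totals, joined total)."""
    con = sqlite3.connect(':memory:')
    con.execute('CREATE TABLE tts_planning_districts '
                '(pid INTEGER, name TEXT, pop_pt_student INTEGER, pop_ft_student INTEGER)')
    con.execute('CREATE TABLE tts_trip_counts '
                '(sur_year INTEGER, from_pid INTEGER, to_pid INTEGER, mode TEXT, ntrips INTEGER)')
    con.executemany('INSERT INTO tts_planning_districts VALUES (?, ?, ?, ?)', districts)
    con.executemany('INSERT INTO tts_trip_counts VALUES (?, ?, ?, ?, ?)', trips)
    # Q2: trips by mode
    by_mode = con.execute(
        'SELECT mode, SUM(ntrips) FROM tts_trip_counts '
        'WHERE sur_year = ? AND from_pid = ? GROUP BY mode', (year, pid)).fetchall()
    # Q3: same rows joined to the district table
    joined = con.execute(
        'SELECT p.name, SUM(t.ntrips) FROM tts_planning_districts p '
        'JOIN tts_trip_counts t ON p.pid = t.from_pid '
        'WHERE p.pid = ? AND t.sur_year = ? '
        'GROUP BY p.name, p.pop_pt_student, p.pop_ft_student', (pid, year)).fetchone()
    con.close()
    return sum(total for _, total in by_mode), joined[1]
```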

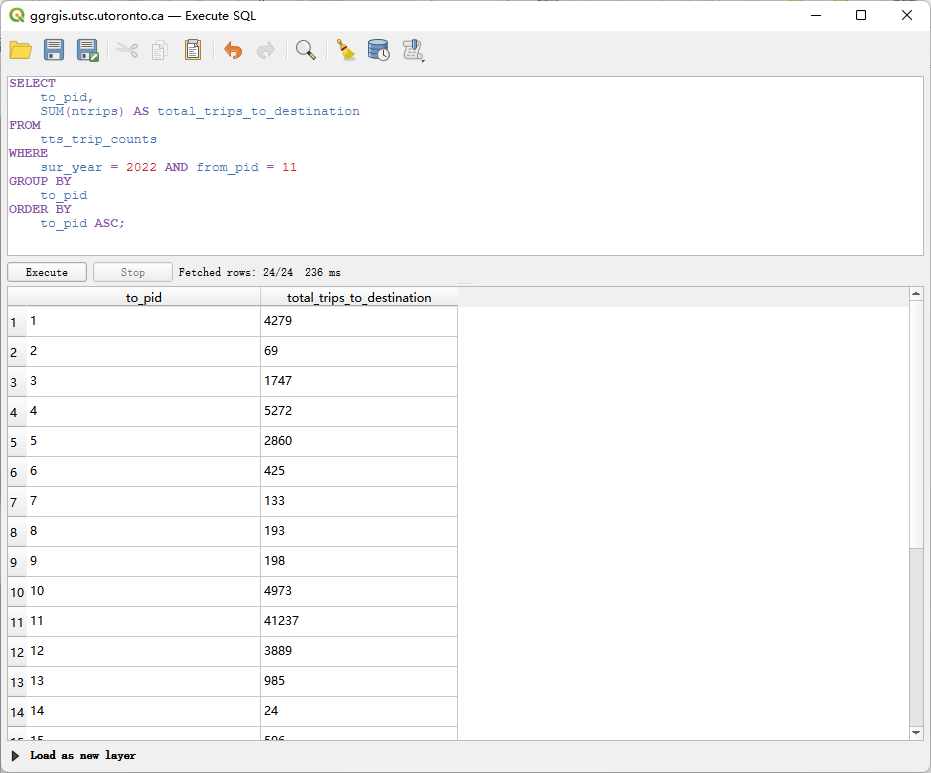

Question 4 (2 pt): Count trips from PD 11 to each destination PD in 2022.

```sql
SELECT to_pid, SUM(ntrips) AS total_trips
FROM tts_trip_counts
WHERE sur_year = 2022 AND from_pid = 11
GROUP BY to_pid
ORDER BY to_pid;
```

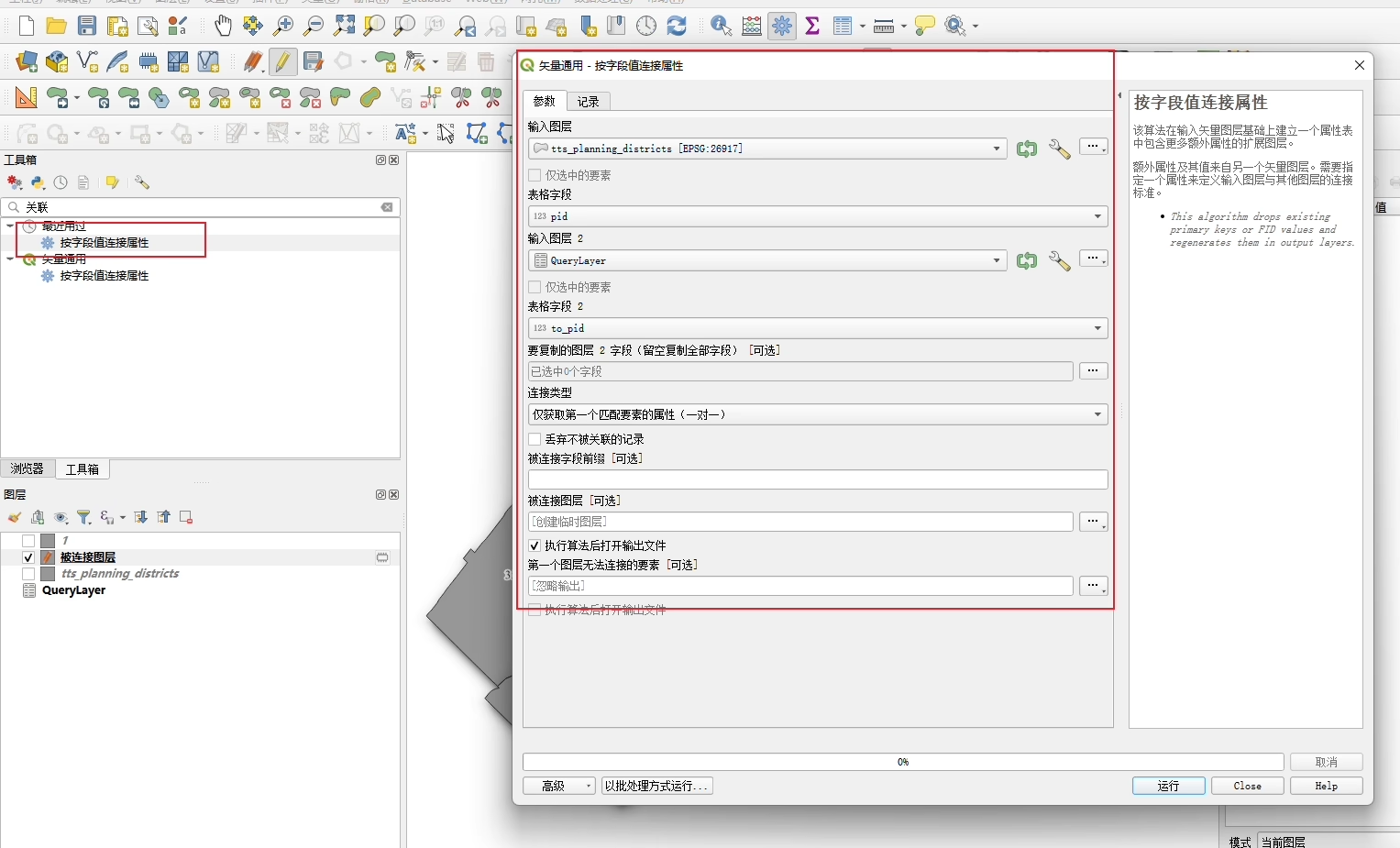

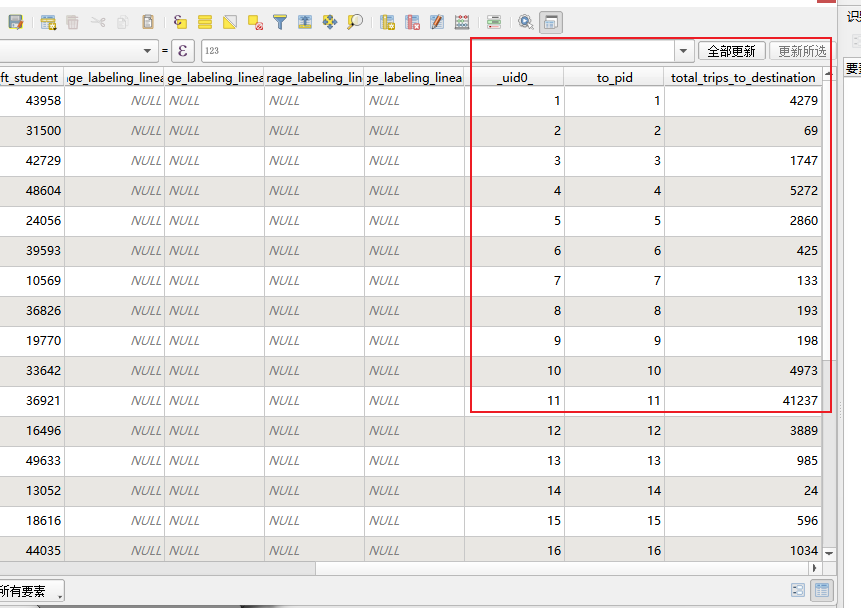

Part 2: Create Query Layer and Join (Section 3.3) (3 pts)

Use Q4 result as a QueryLayer, join to tts_planning_districts, then map.

Load query result as vector layer from PostgreSQL.

After the join, the tts_planning_districts attribute table gains a field such as querylayer_total_trips.

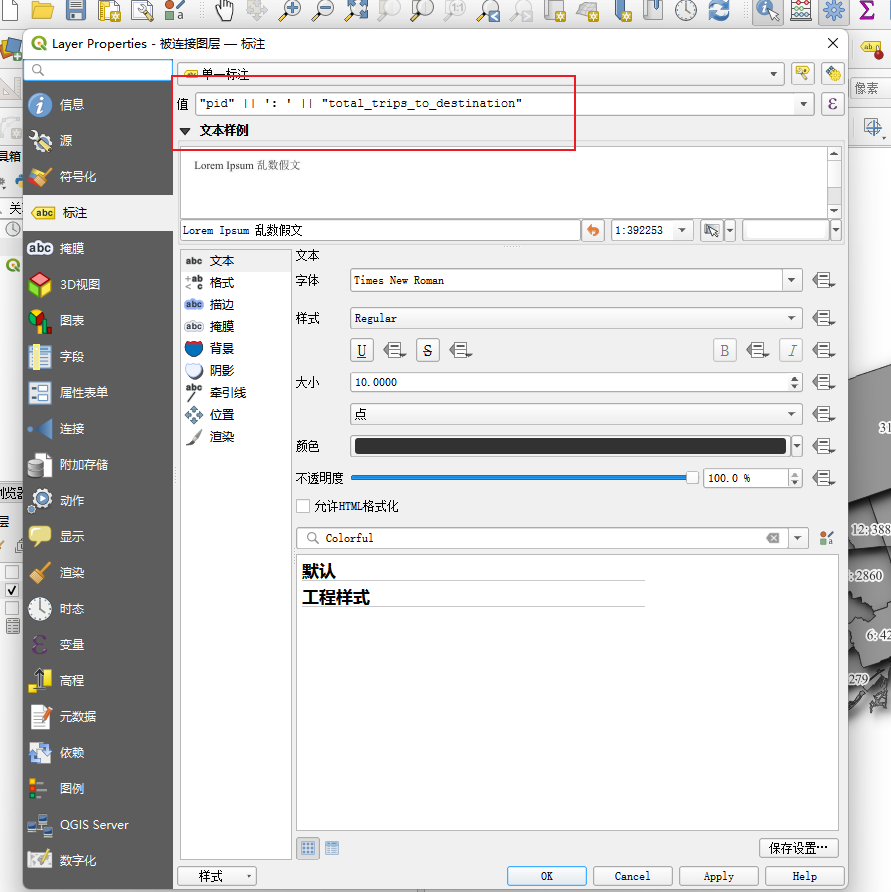

Map only the tts_planning_districts layer. Label each district with "pid: total_trips". Add title and text box with name and student ID.

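Label expression: "pid" || ': ' || "total_trips" (use the joined field name shown in the attribute table, e.g. "querylayer_total_trips" if the join kept its prefix).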

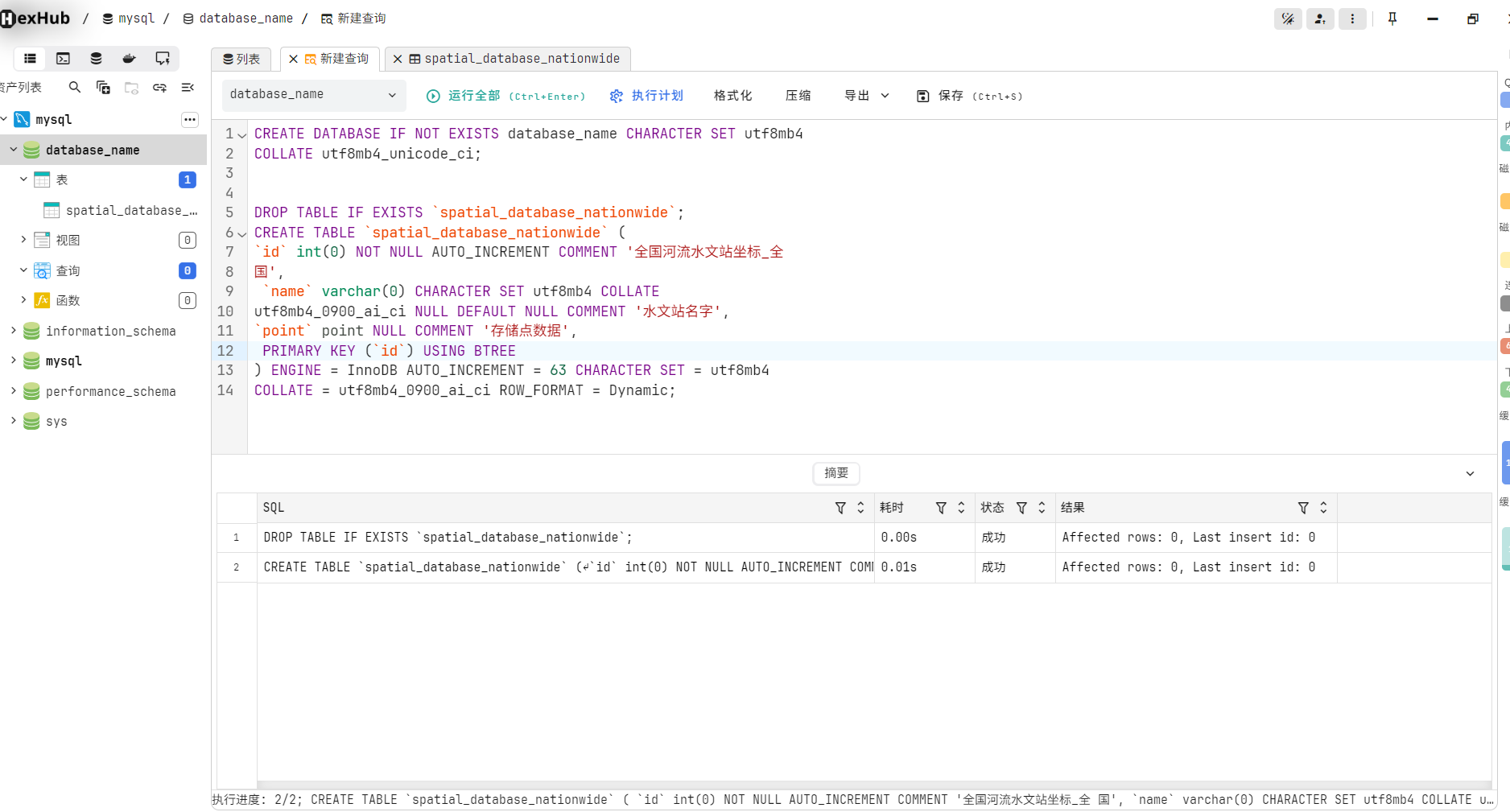

69. MySQL & Python Mapping

1. Install MySQL on Windows.

Package: https://downloads.mysql.com/archives/installer/

Steps: https://blog.csdn.net/mumiandeci/article/details/134520684

Database client: https://www.hexhub.cn/

```sql
CREATE DATABASE IF NOT EXISTS gis_db CHARACTER SET utf8mb4 COLLATE utf8mb4_unicode_ci;
USE gis_db;

DROP TABLE IF EXISTS `hydrological_stations`;
CREATE TABLE `hydrological_stations` (
  `id` INT NOT NULL AUTO_INCREMENT COMMENT 'Station ID',
  `name` varchar(255) CHARACTER SET utf8mb4 COLLATE utf8mb4_unicode_ci NULL DEFAULT NULL COMMENT 'Station name',
  `location` point NULL COMMENT 'Point geometry',
  PRIMARY KEY (`id`) USING BTREE
) ENGINE = InnoDB AUTO_INCREMENT = 1 CHARACTER SET = utf8mb4 COLLATE = utf8mb4_unicode_ci ROW_FORMAT = Dynamic;
```

- Import Sichuan hydrology Excel into MySQL spatial database.

- Visualize Sichuan hydrological stations.
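For the import step, each Excel row becomes one INSERT with the point built from WKT. A sketch assuming the sheet has name/longitude/latitude columns; the helper name, file name, column names and credentials are hypothetical, and pandas/PyMySQL appear only in the usage comment. The `location` column above has no SRID, so `POINT(x y)` is stored as `POINT(lon lat)`; if the column were declared `SRID 4326` in MySQL 8, WKT would be read in latitude-longitude order instead.

```python
def station_insert_params(rows):
    """Build (sql, params) for cursor.executemany from (name, lon, lat) rows."""
    sql = ("INSERT INTO hydrological_stations (name, location) "
           "VALUES (%s, ST_GeomFromText(%s))")
    params = [(str(name), f"POINT({float(lon)} {float(lat)})") for name, lon, lat in rows]
    return sql, params


# Hypothetical usage (file, columns and credentials are placeholders):
#   import pandas as pd, pymysql
#   df = pd.read_excel("sichuan_stations.xlsx")
#   sql, params = station_insert_params(df[["name", "lon", "lat"]].itertuples(index=False))
#   conn = pymysql.connect(host="localhost", user="root", password="...",
#                          database="gis_db", charset="utf8mb4")
#   with conn.cursor() as cur:
#       cur.executemany(sql, params)
#   conn.commit()
```

Passing the WKT as a parameter rather than formatting it into the SQL string keeps station names with quotes from breaking the statement.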

70. GIS and Empirical Reasoning

Detailed Steps

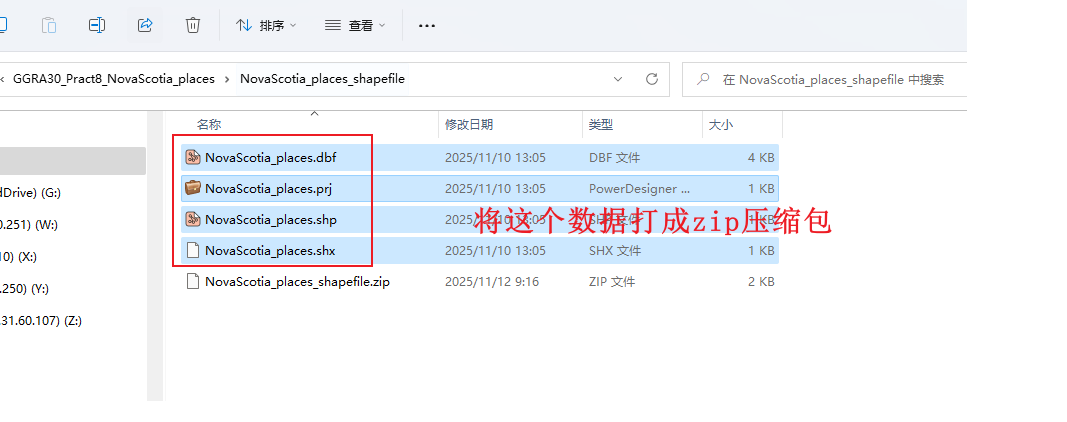

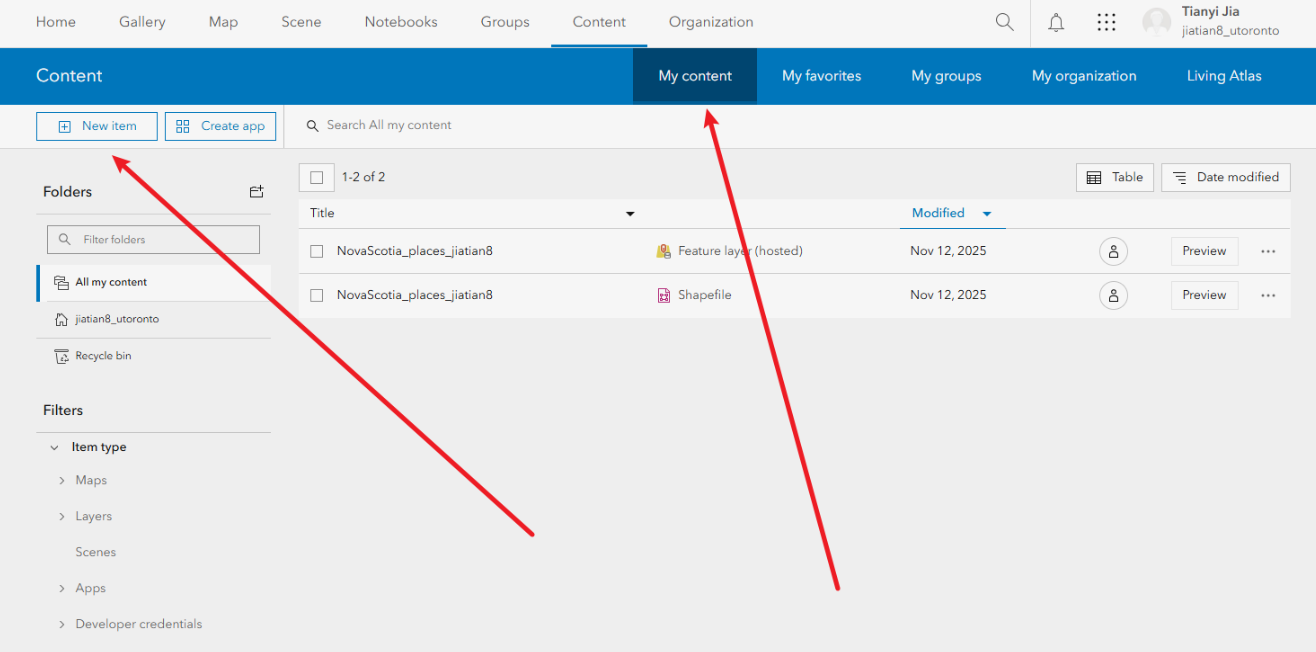

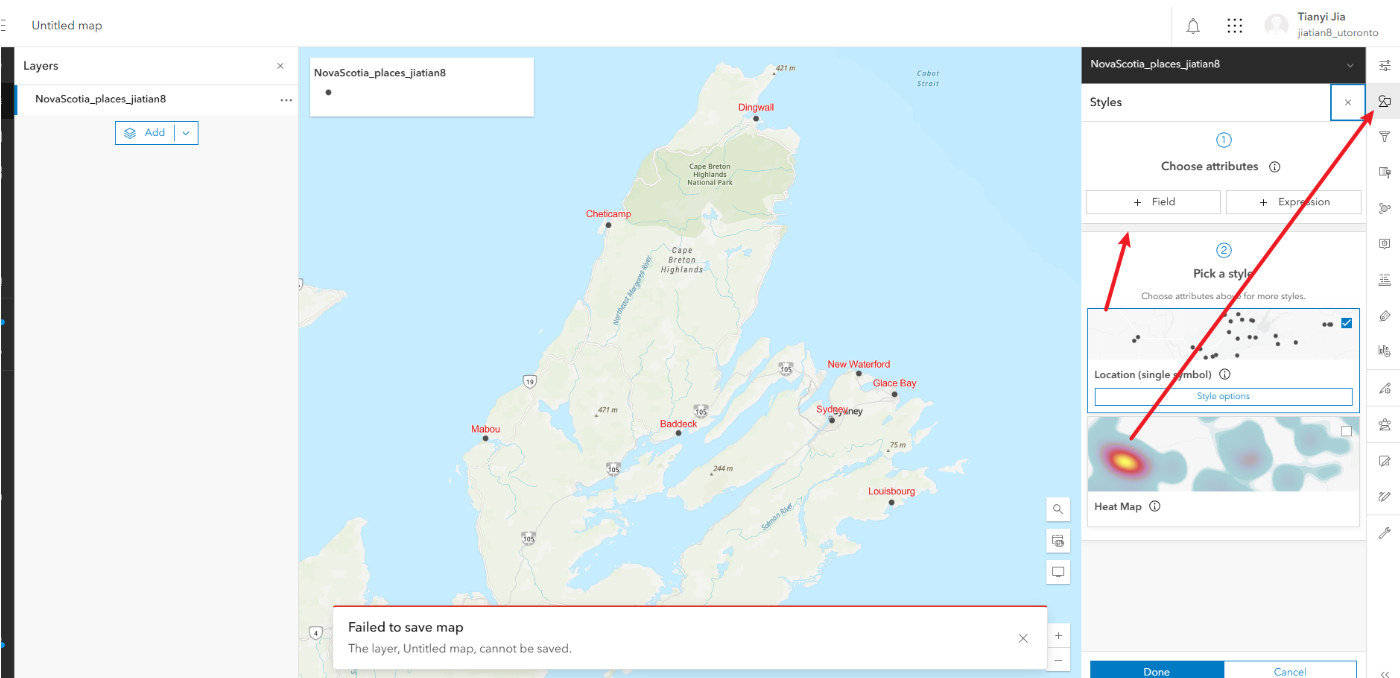

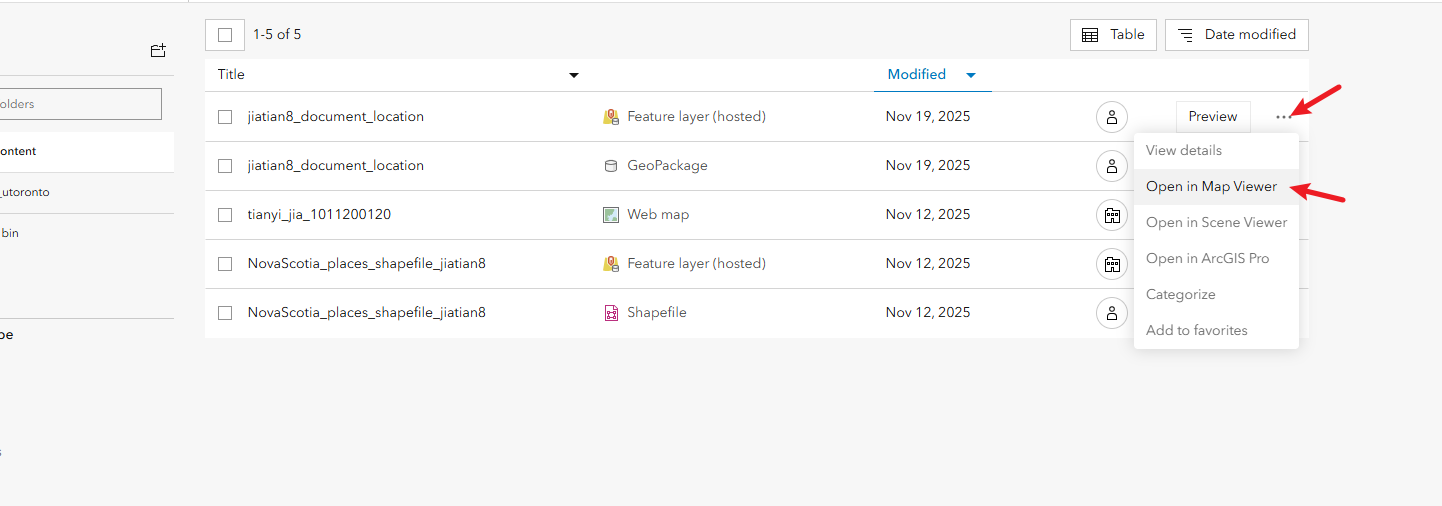

1. Login and Upload Data

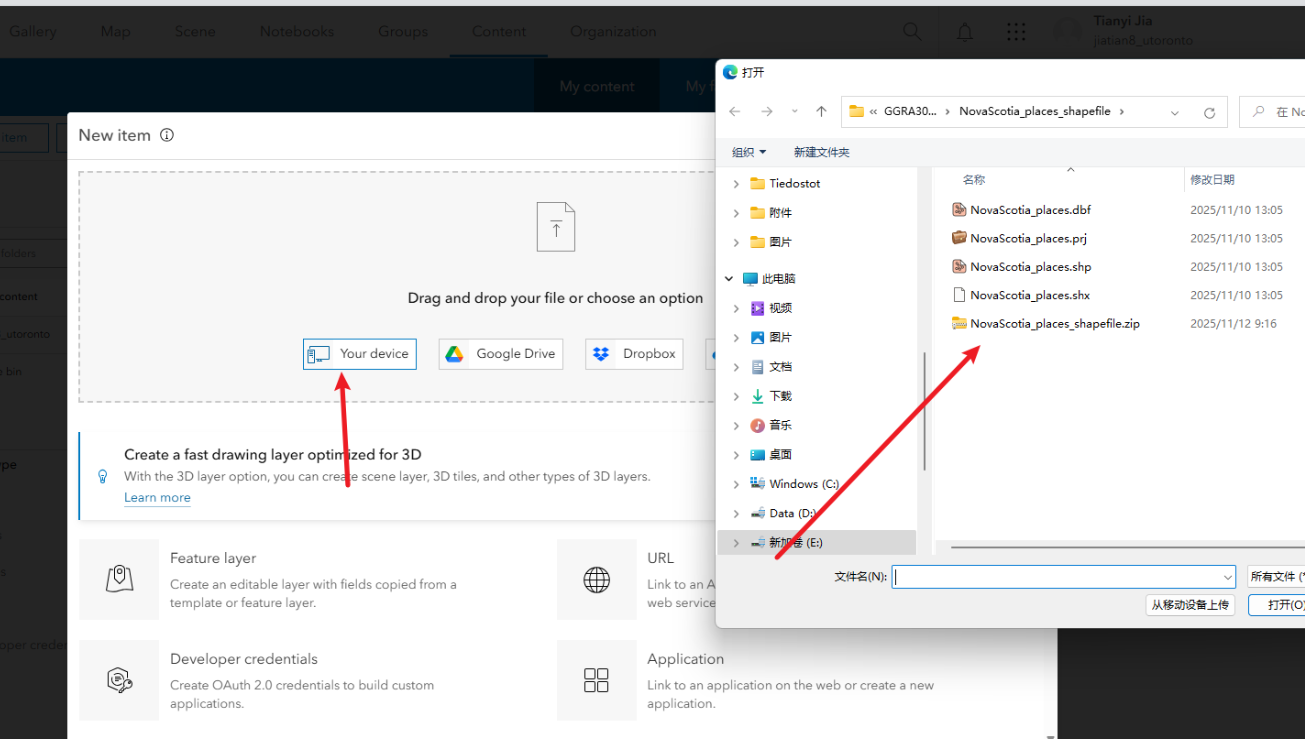

1.1 Login at https://utoronto.maps.arcgis.com/home/index.html

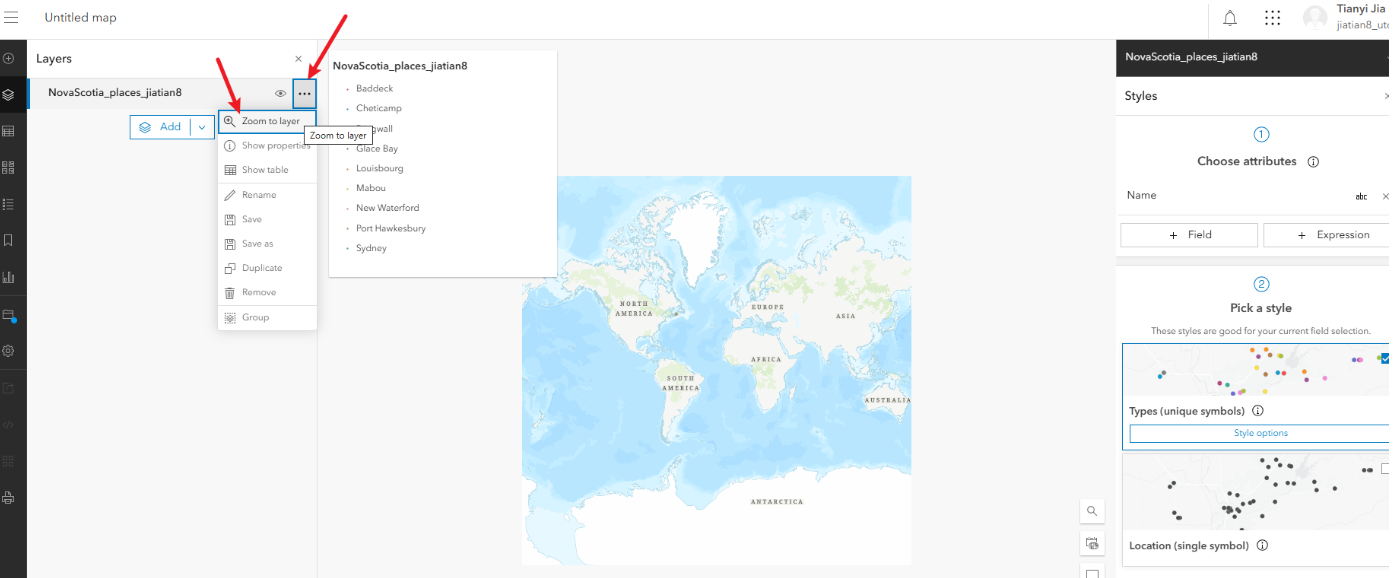

1.2 Upload a zip of NovaScotia_places.shp: click Content > New Item > Your Device, choose to create a hosted feature layer, and set the title to NovaScotia_places_Name.
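ArcGIS Online expects a shapefile upload as a single .zip containing the .shp together with its sidecar files (at least .shx and .dbf, plus .prj so the coordinate system is known). A small stdlib sketch for building that zip; the function name and the list of optional sidecar extensions are assumptions.

```python
import os
import zipfile

REQUIRED = ('.shp', '.shx', '.dbf')
OPTIONAL = ('.prj', '.cpg', '.sbn', '.sbx', '.shp.xml')


def zip_shapefile(shp_path, zip_path=None):
    """Zip a shapefile and its sidecar files (flat, no folders) for upload; returns the zip path."""
    base = os.path.splitext(shp_path)[0]
    folder = os.path.dirname(shp_path) or '.'
    stem = os.path.basename(base)
    missing = [e for e in REQUIRED if not os.path.exists(os.path.join(folder, stem + e))]
    if missing:
        raise FileNotFoundError(f"Missing shapefile parts: {missing}")
    zip_path = zip_path or base + '.zip'
    with zipfile.ZipFile(zip_path, 'w', zipfile.ZIP_DEFLATED) as zf:
        for ext in REQUIRED + OPTIONAL:
            part = os.path.join(folder, stem + ext)
            if os.path.exists(part):
                zf.write(part, arcname=stem + ext)
    return zip_path
```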

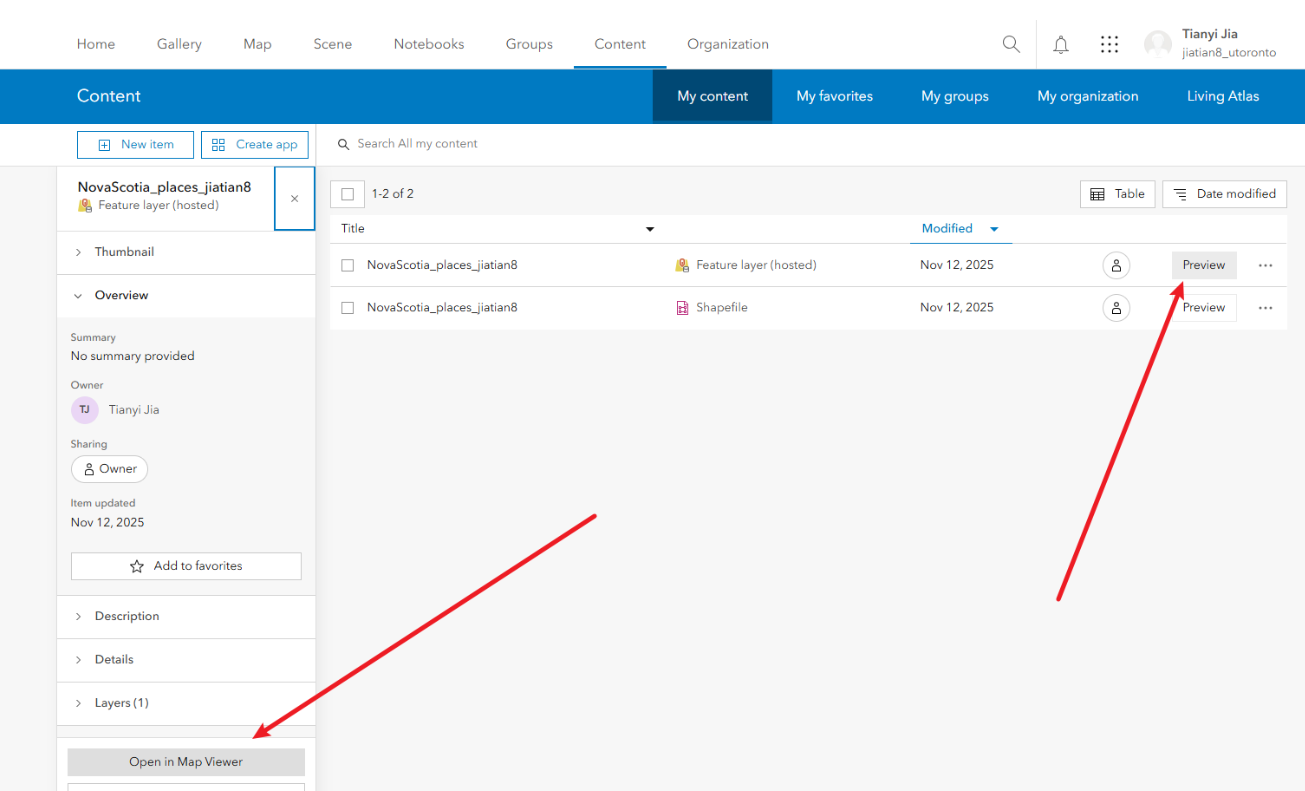

2. Open in Map Viewer

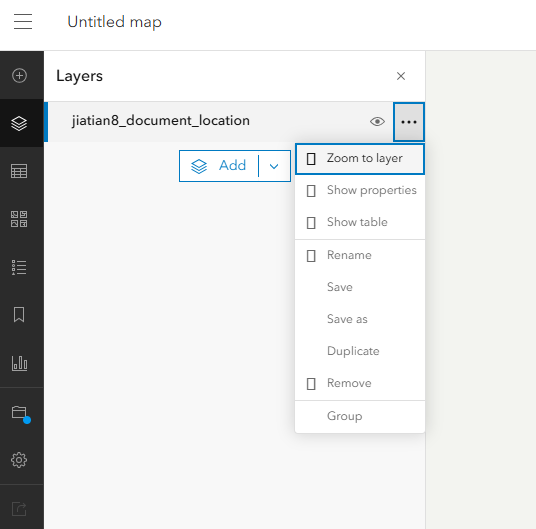

2.1 Click Preview > Open in Map Viewer.

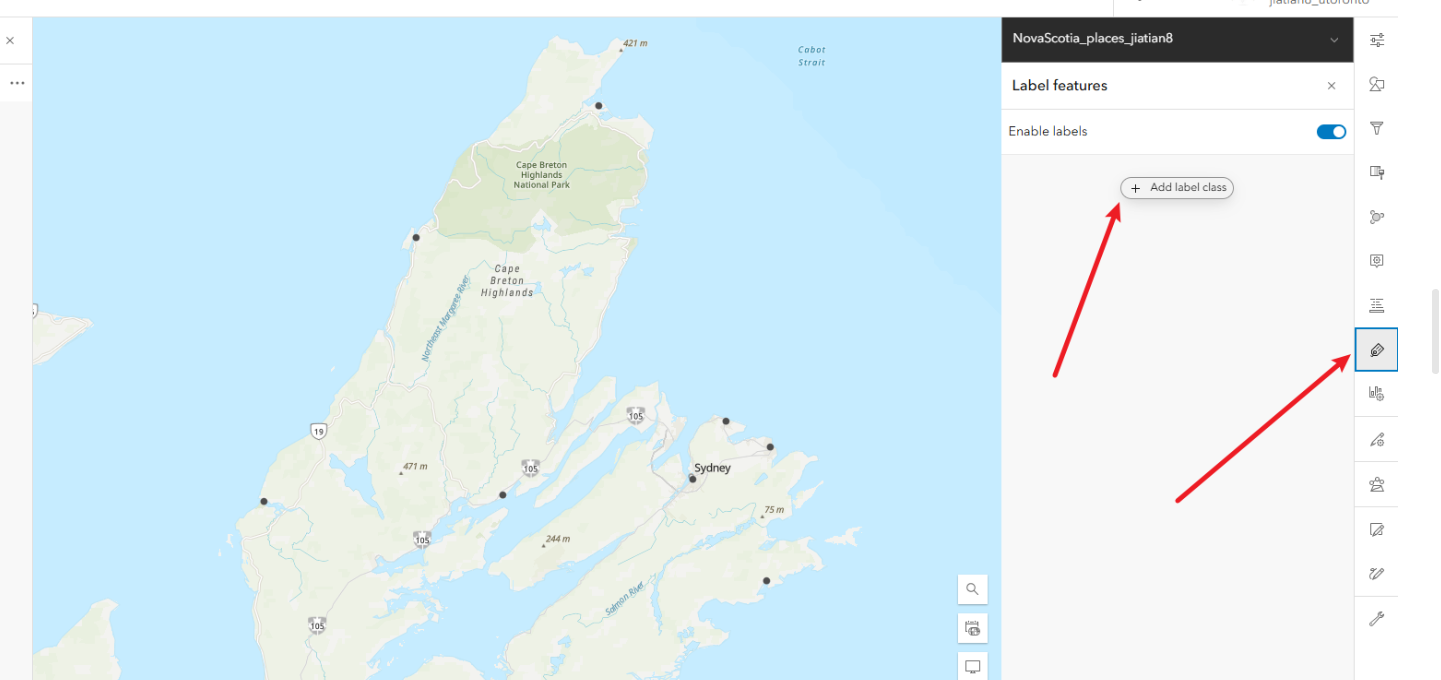

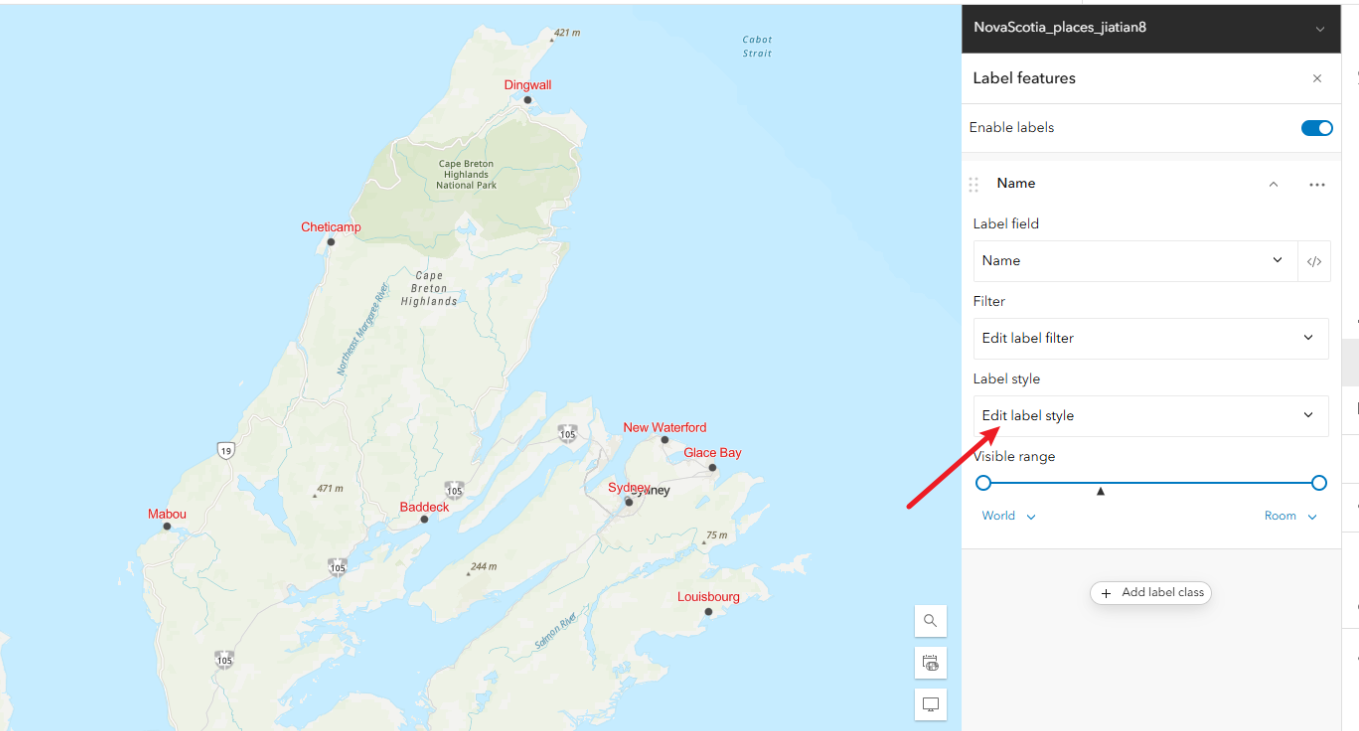

3. Configure Labels

3.1 Click Labels on right. Add new label.

3.2 Use 'name' field.

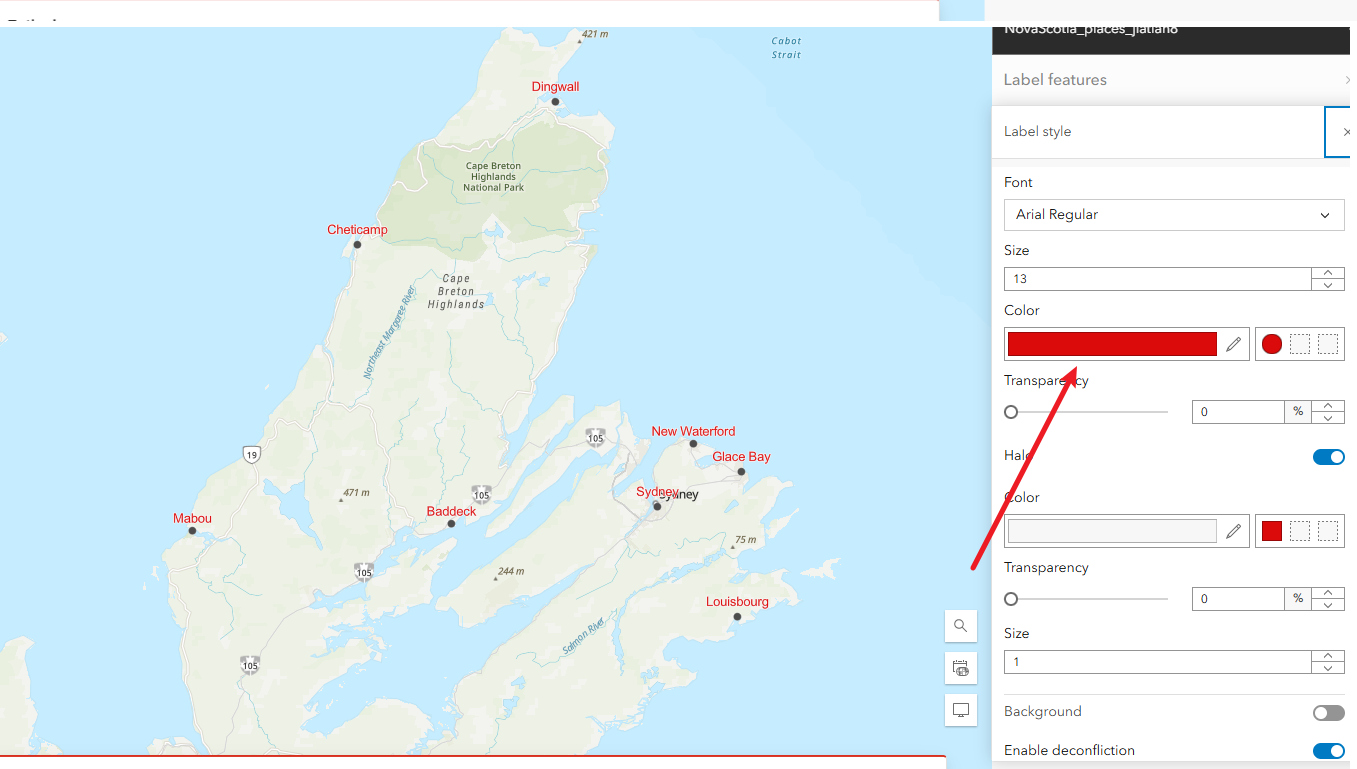

3.3 Zoom to layer.

3.4 Change label color for visibility.

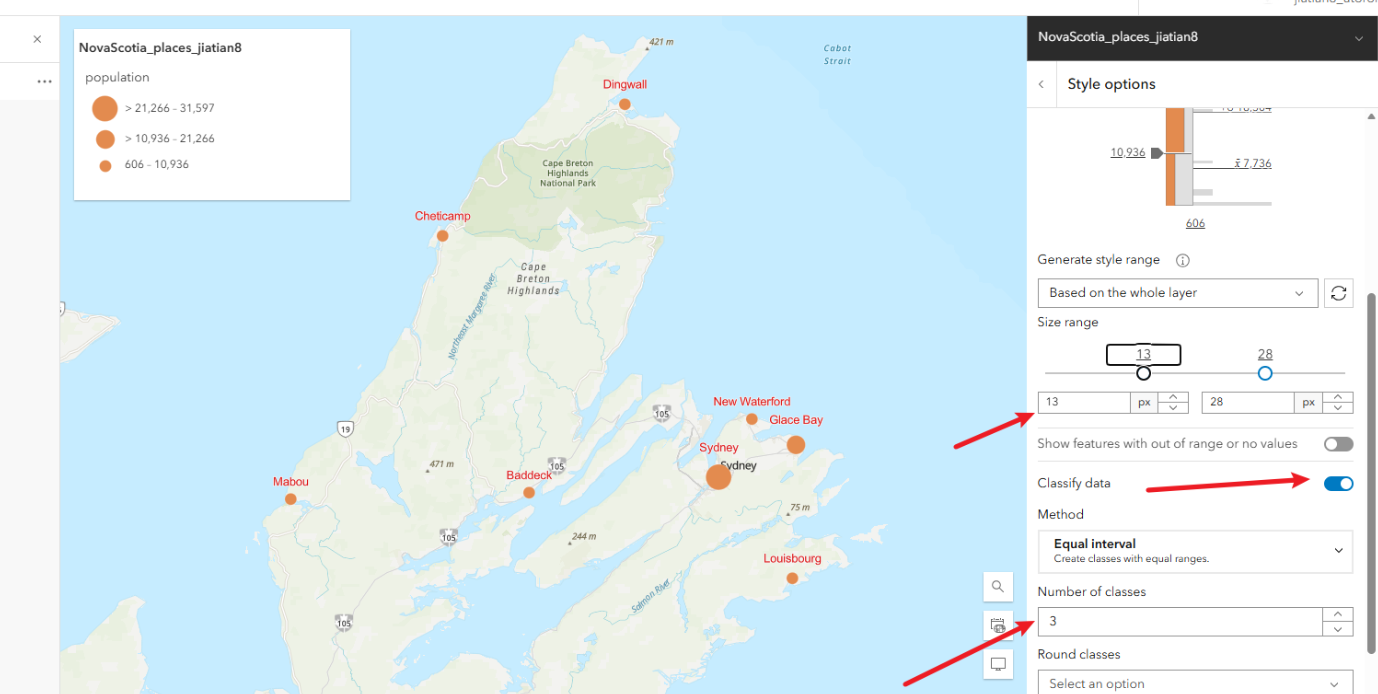

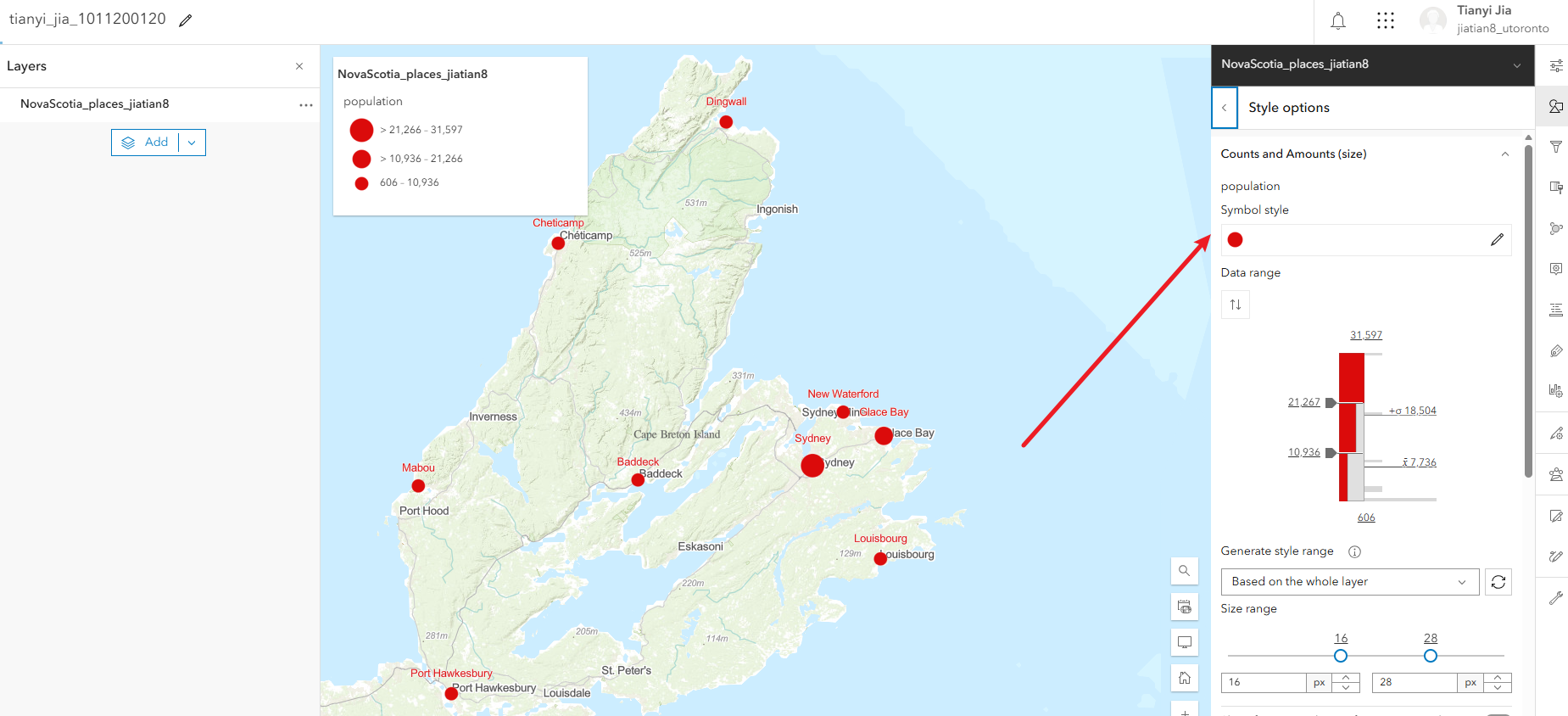

4. Configure Symbols

4.1 Click Styles.

4.2 Symbolize by 'pop' field.

4.3 Click Style options. Classify data, set number of classes to 3, increase minimum size for small values.

4.4 Change symbol color.

4.5 Click Done.

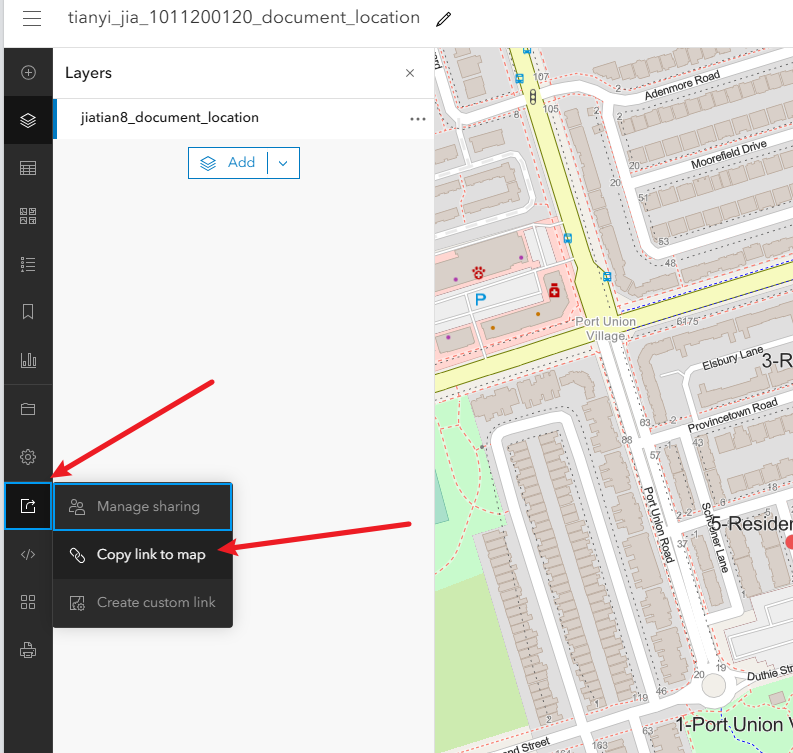

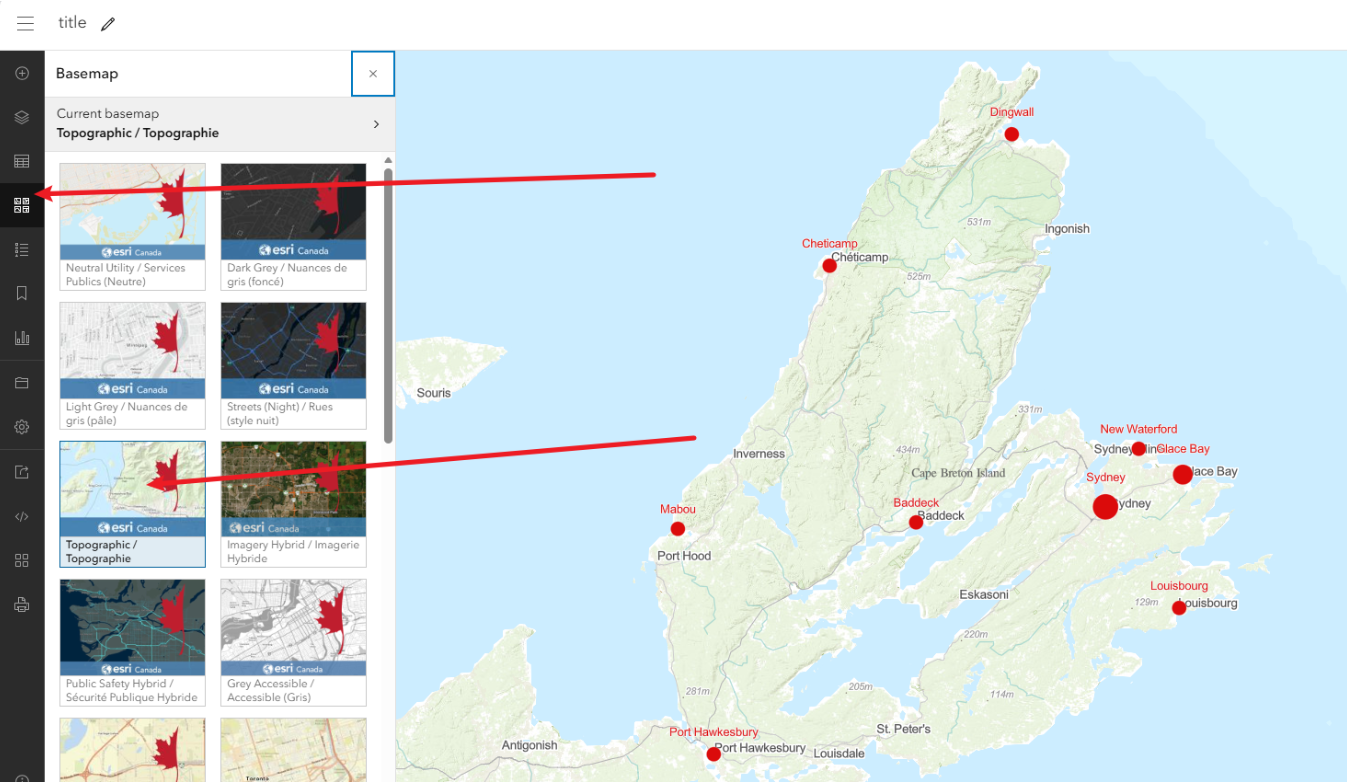

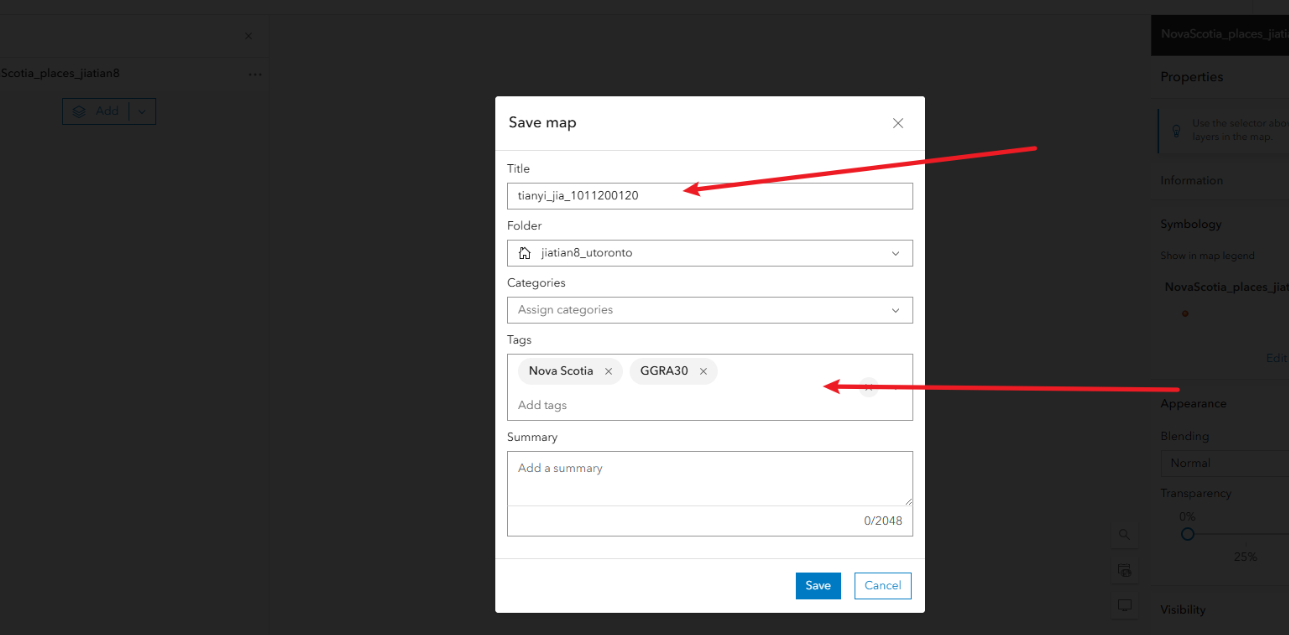

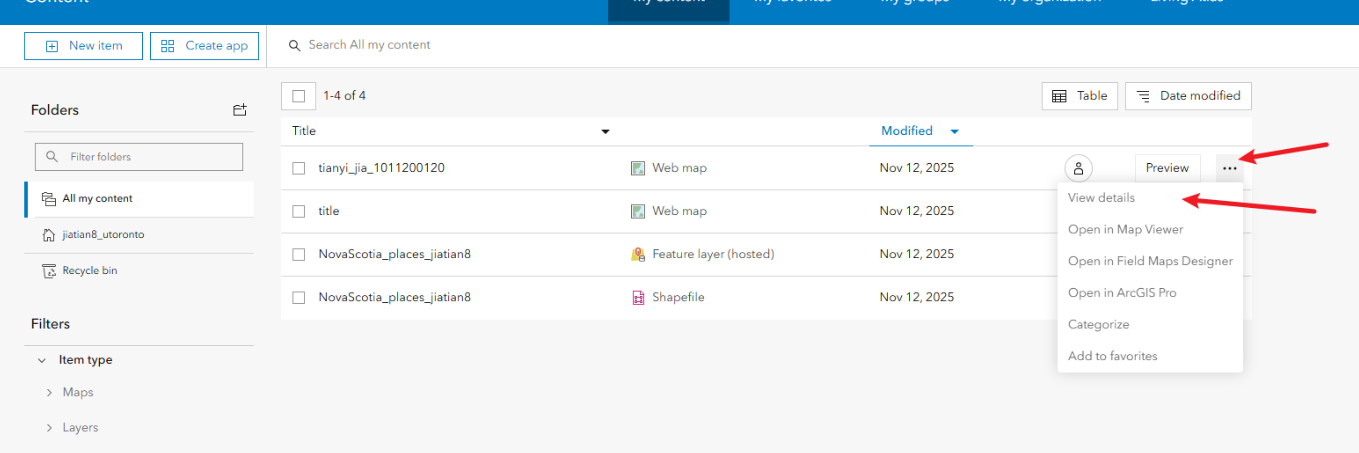

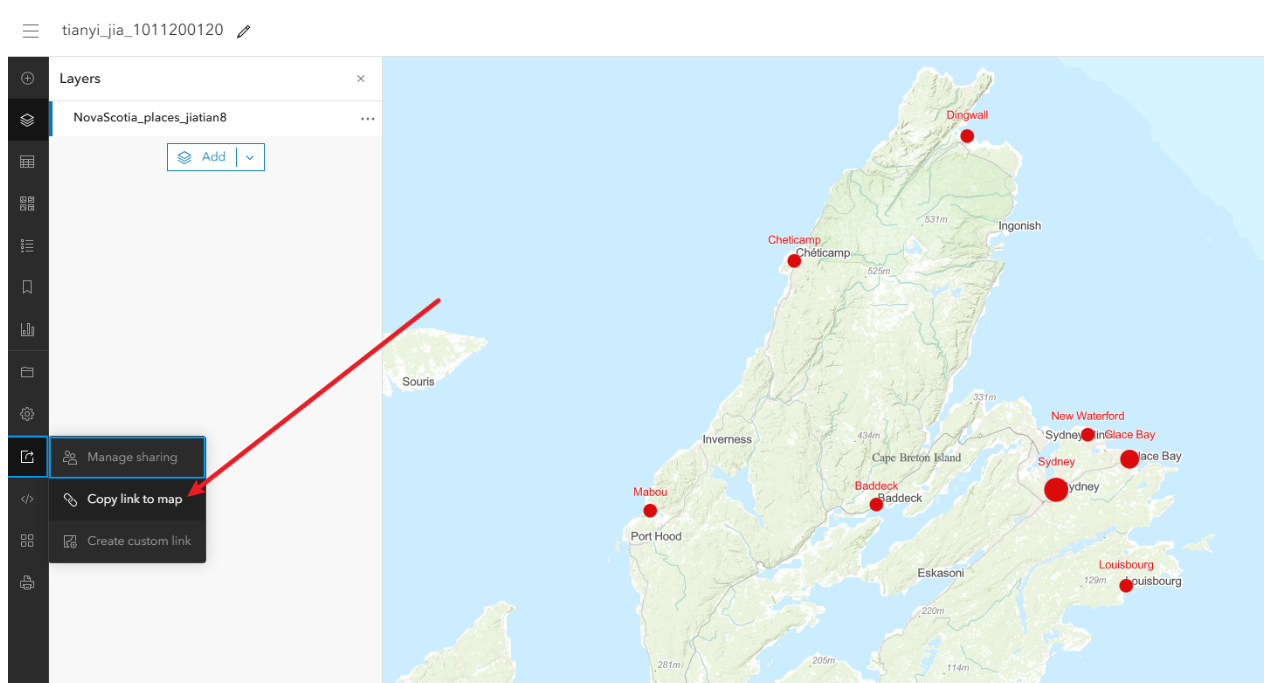

5. Change Basemap, Save As, Share

5.1 Click Basemap and change.

5.2 Save As: title with Name_StudentID, tags "Nova Scotia, GGRA30".

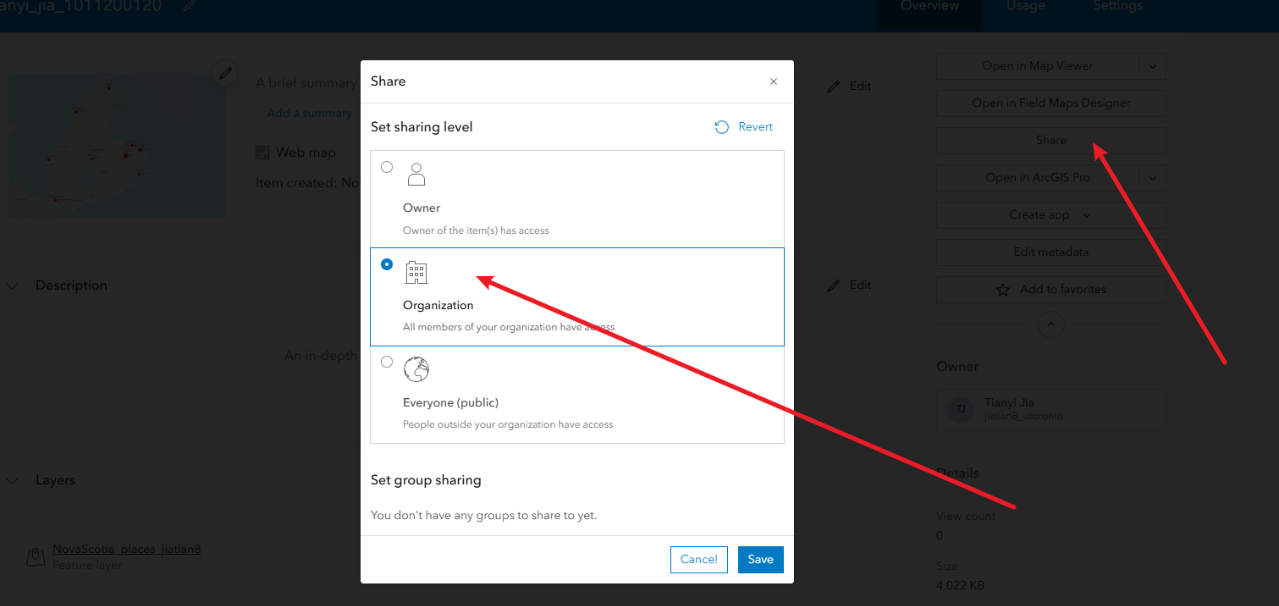

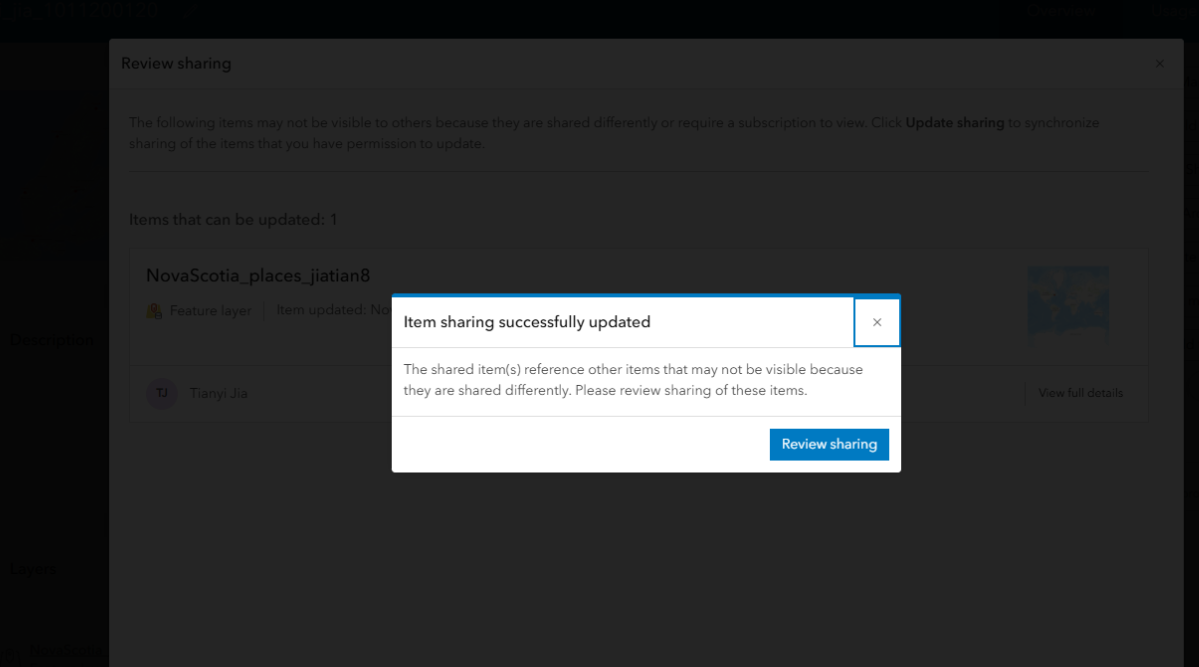

5.3 Share the feature layer to organization.

5.4 Share the map. Copy link.

Final link: https://arcg.is/1TiOTn4

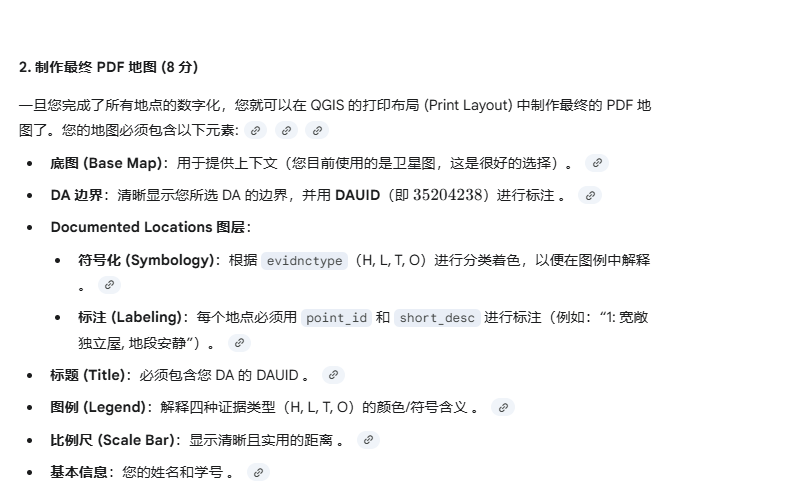

82. GGRA30_Assign4

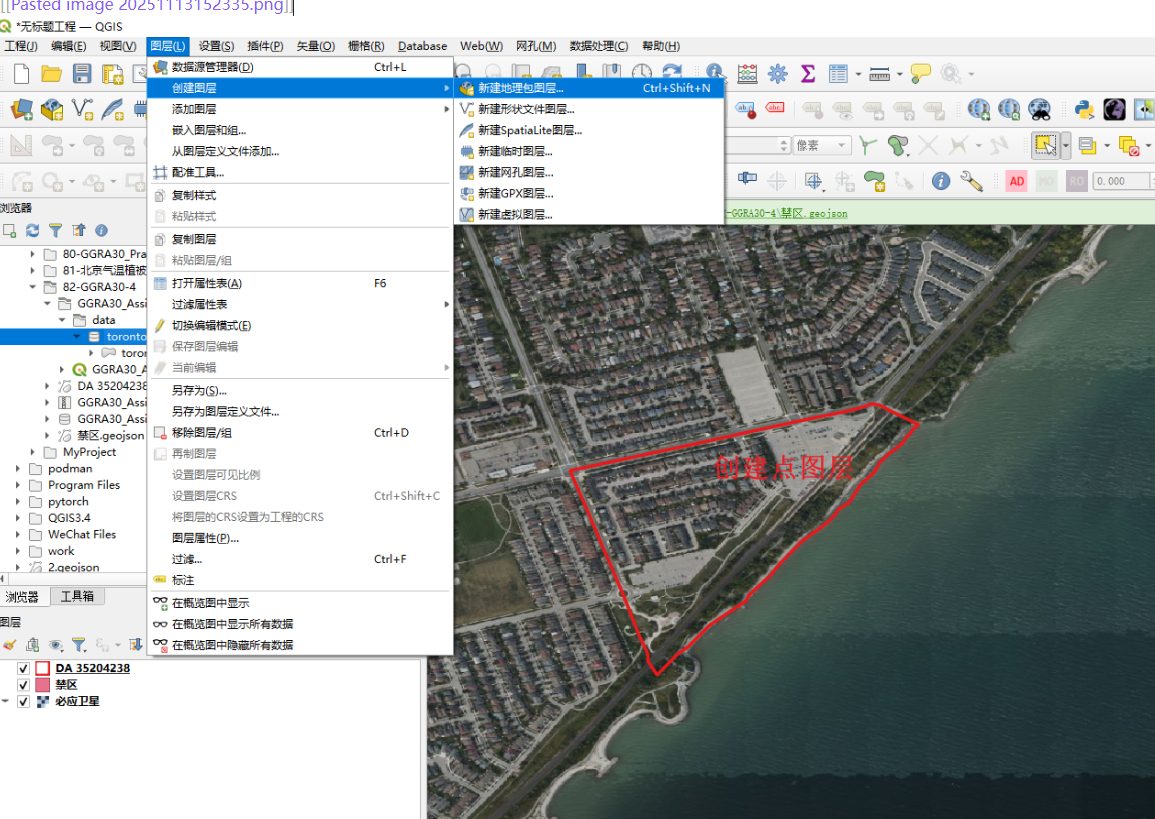

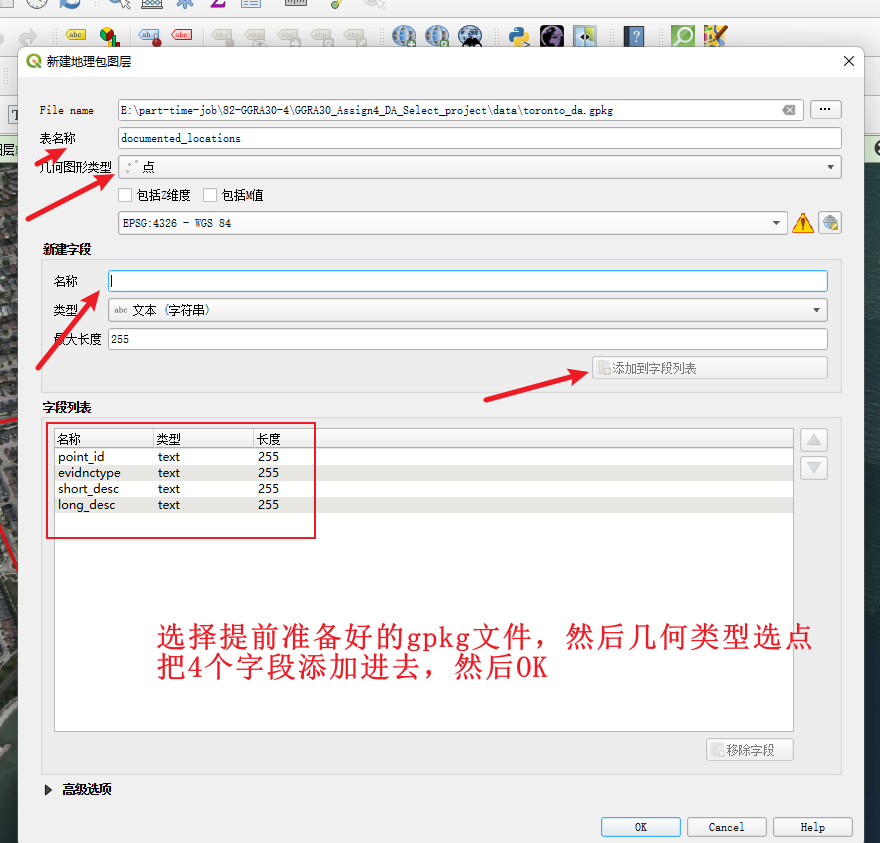

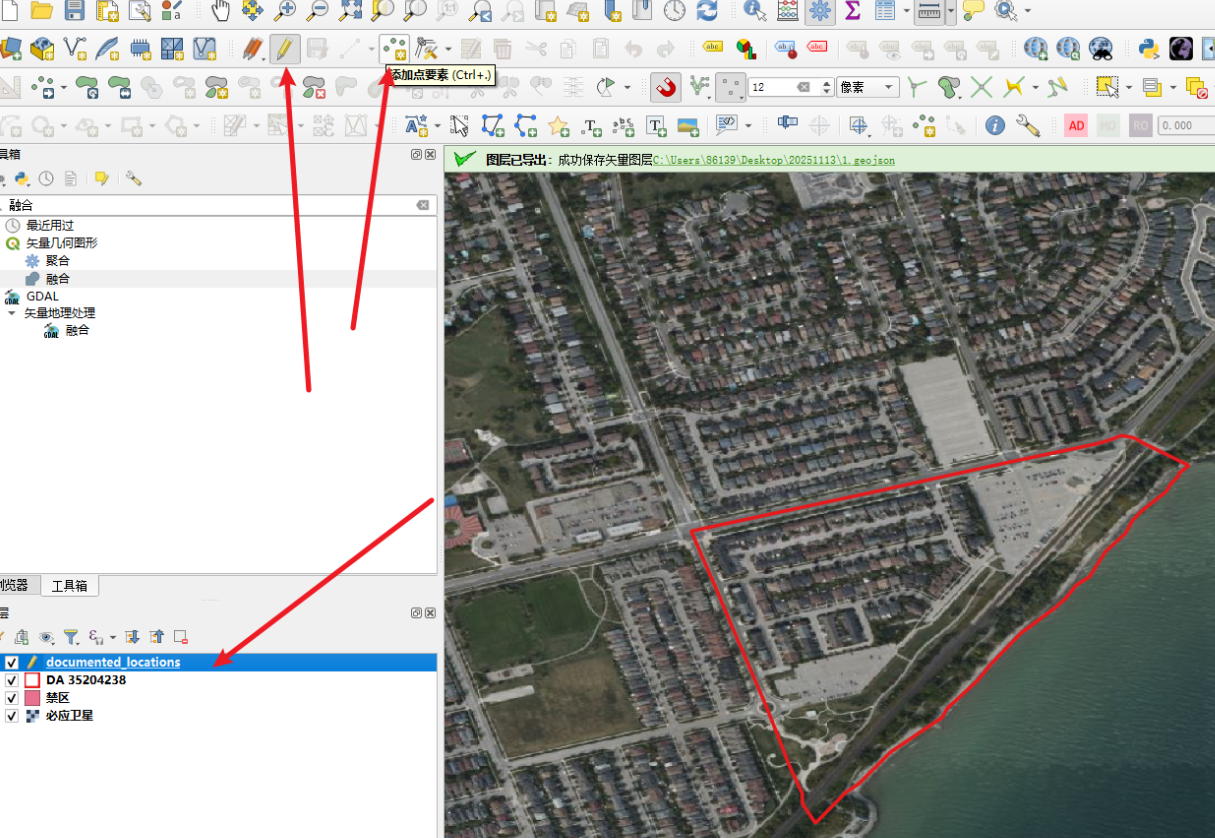

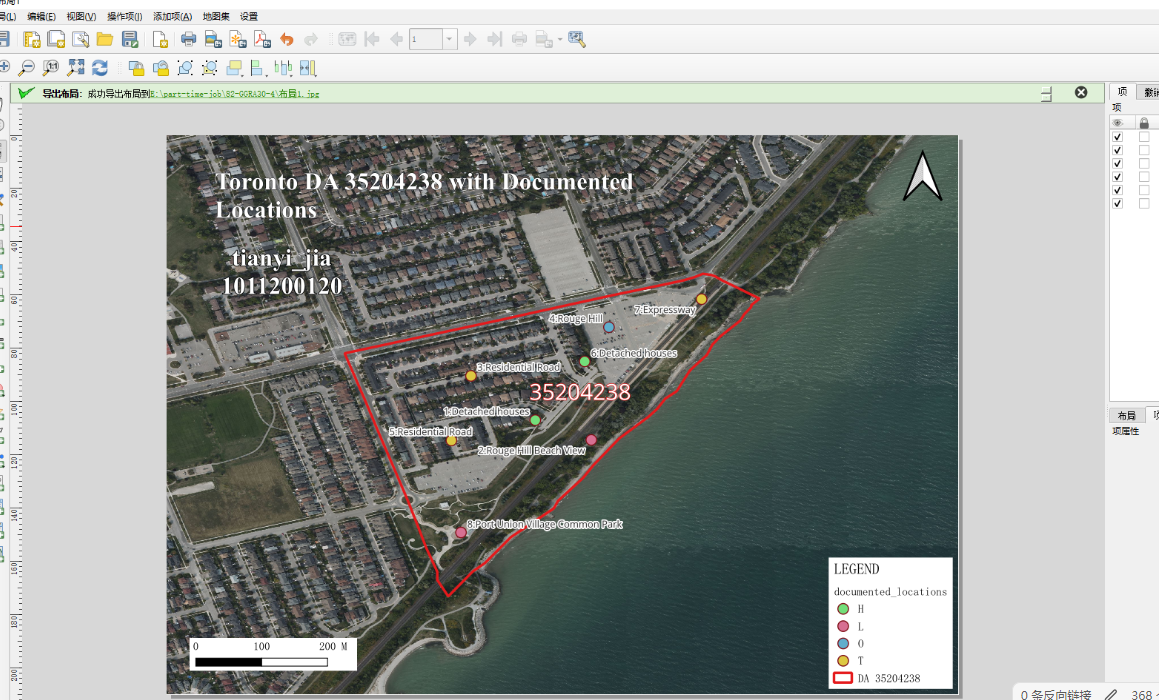

Step 1: Select DA and Create Point Layer

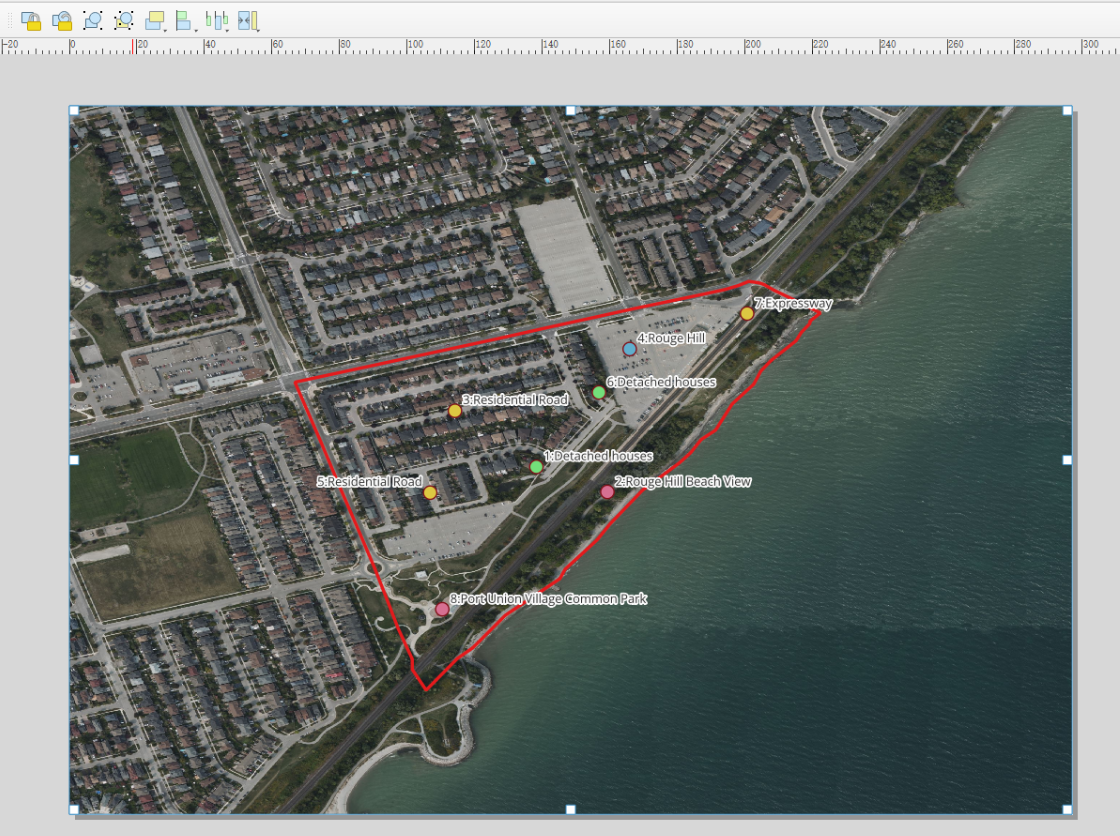

1. Select a DA with clear boundaries and diverse evidence types (residential density, land use, transportation). Note DAUID (e.g., 35204238).

2. Open documented_locations.gpkg and create a documented_locations point layer.

Step 2: Create Points and Edit Attributes

3. Select the layer, click pencil to add points.

point_id: sequential from 1

evidnctype: H (housing), L (land use), T (transport), O (other)

short_desc: short label (e.g., place name)

long_desc: longer description

4. Add 8-12 points with selfies.

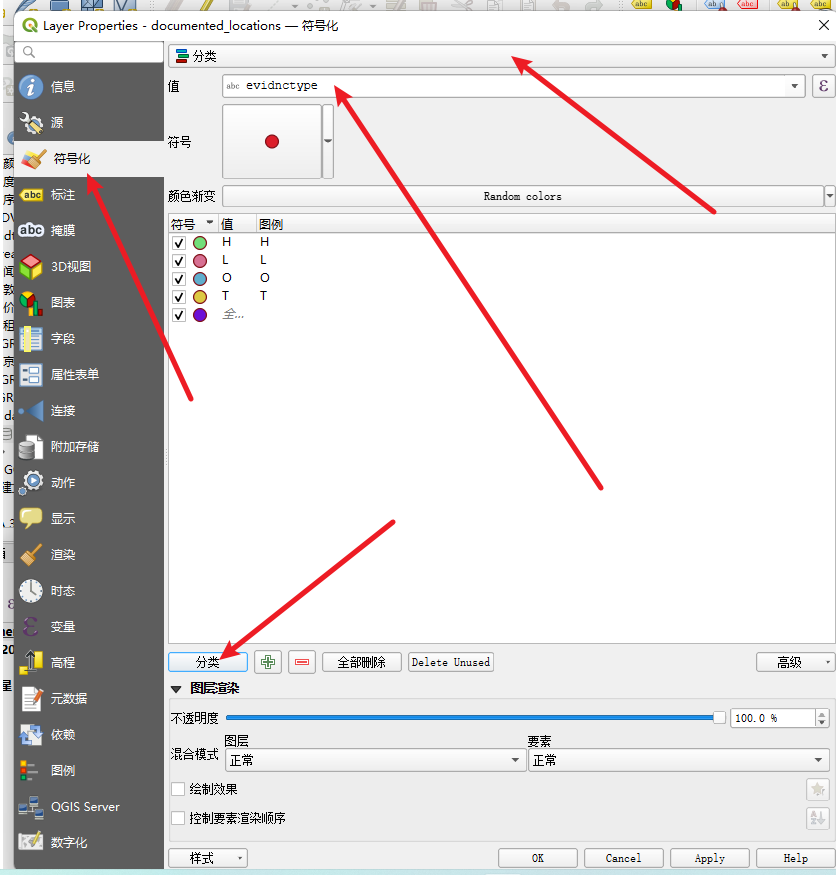

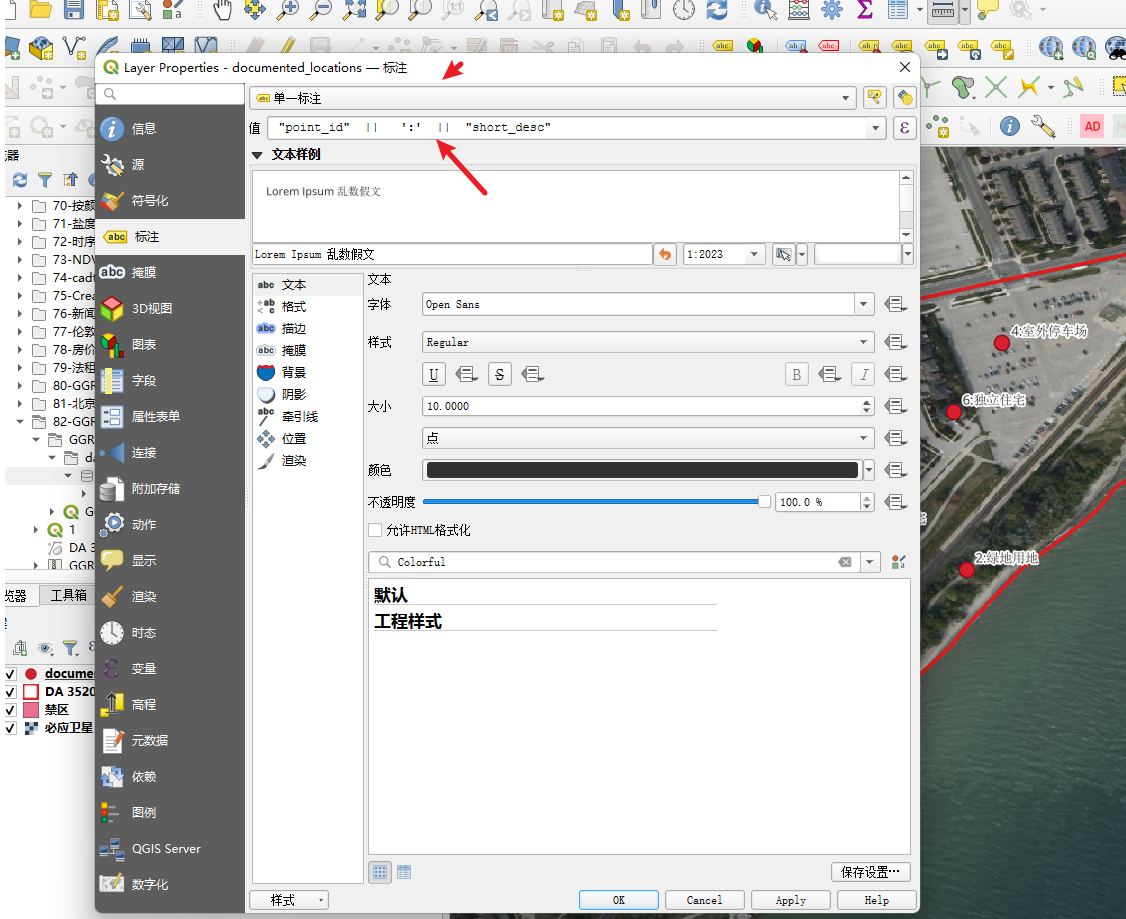

5. Symbolize by evidnctype (different colors) and label with "point_id: short_desc".

Step 3: Map Layout

Reference project template.

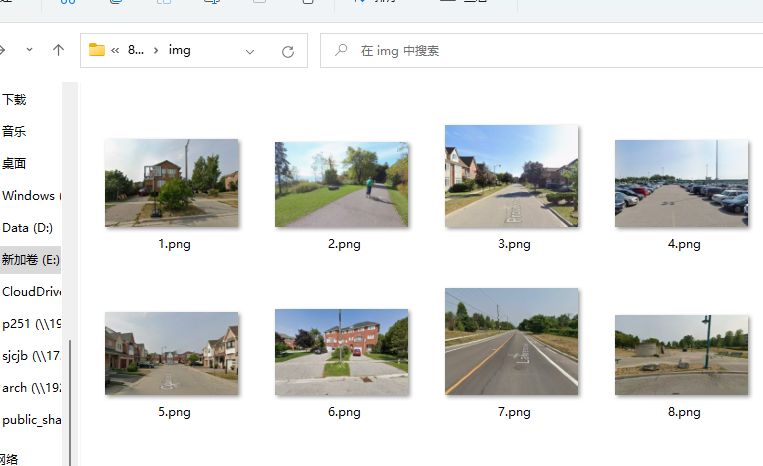

Step 4: Photographs

Name photos with point_id and short_desc.

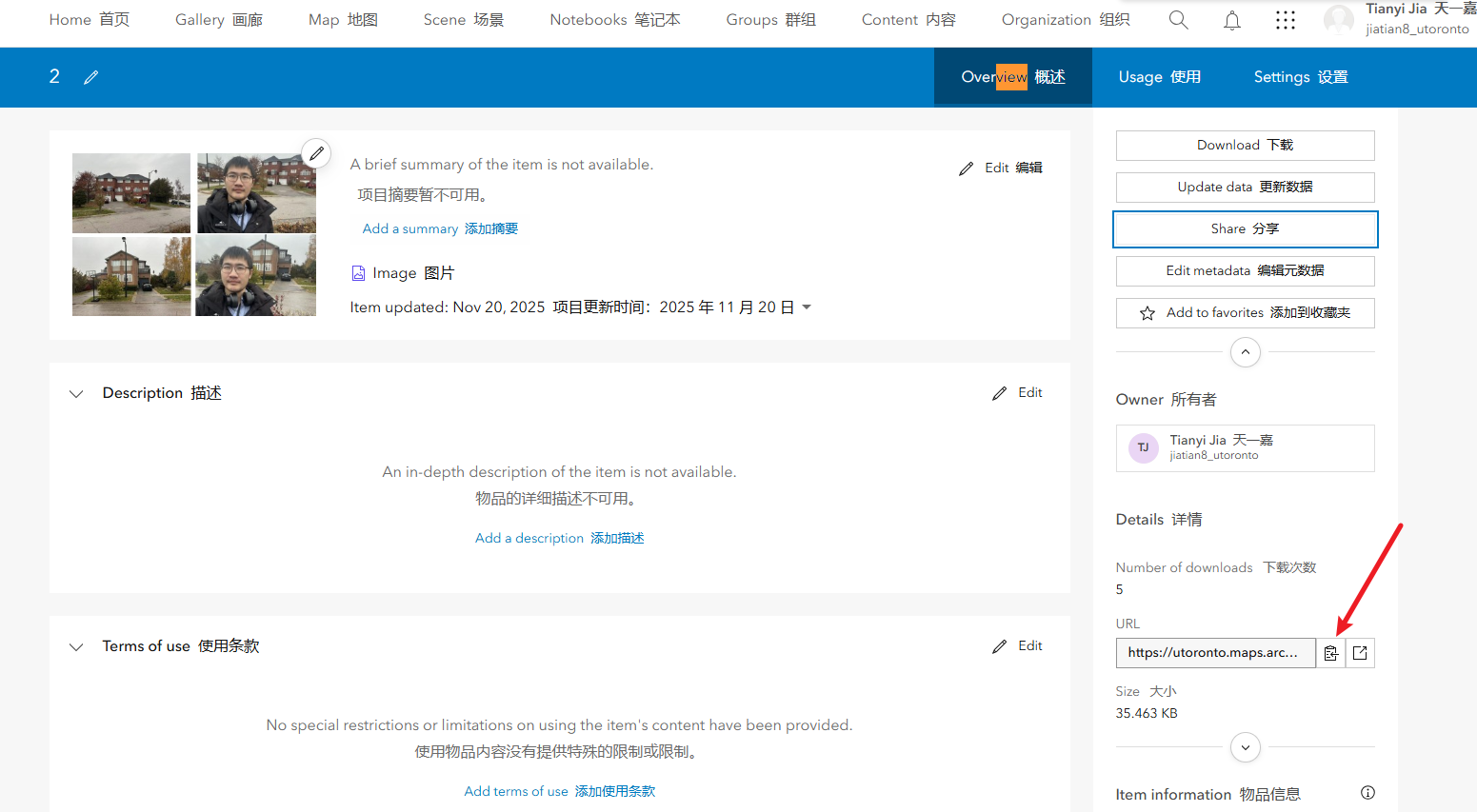

83. GGRA30_Assign5_IntroArcGISOnline

Step 1: Login

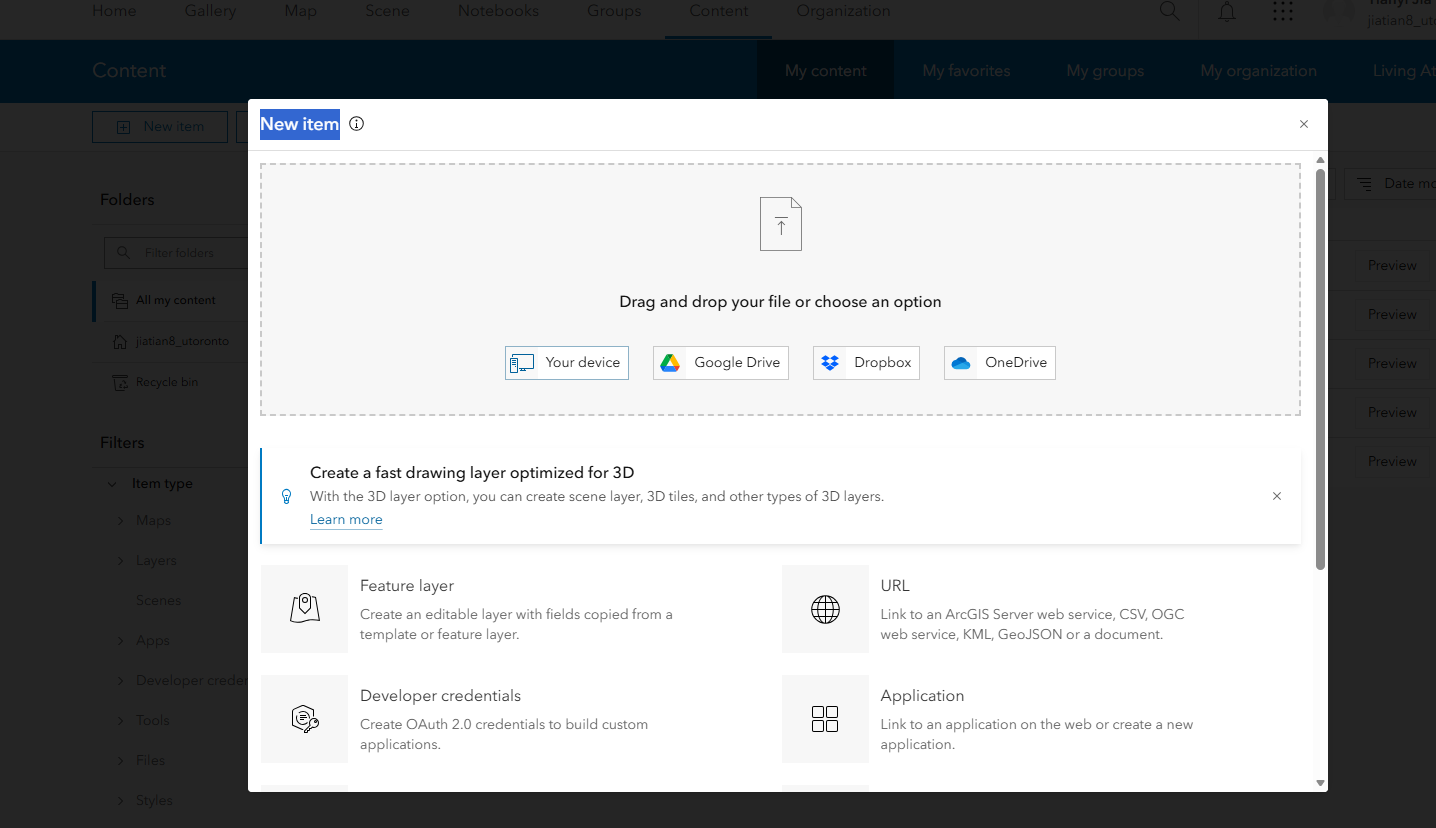

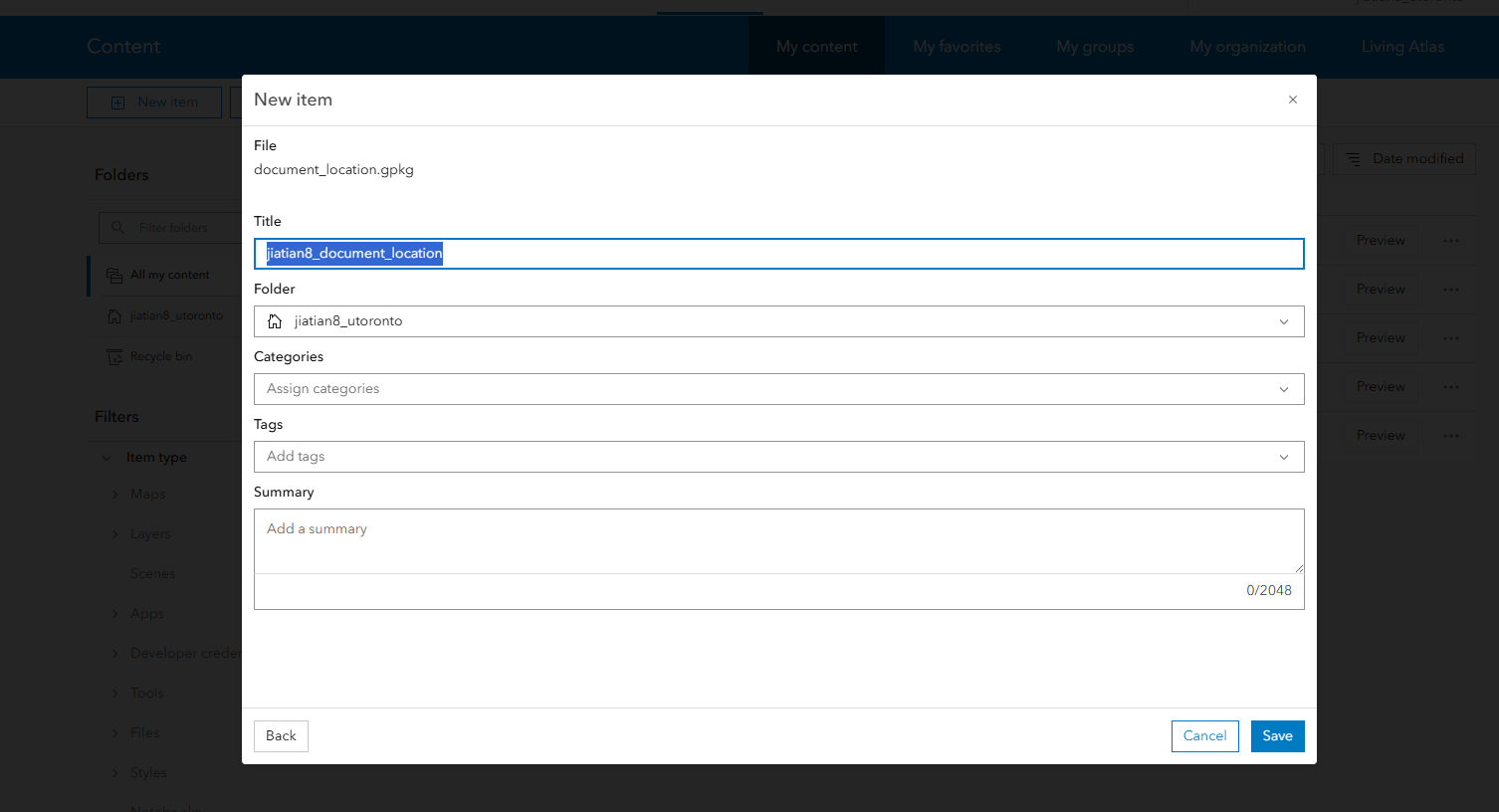

1. Login with school account at https://utoronto.maps.arcgis.com/ .

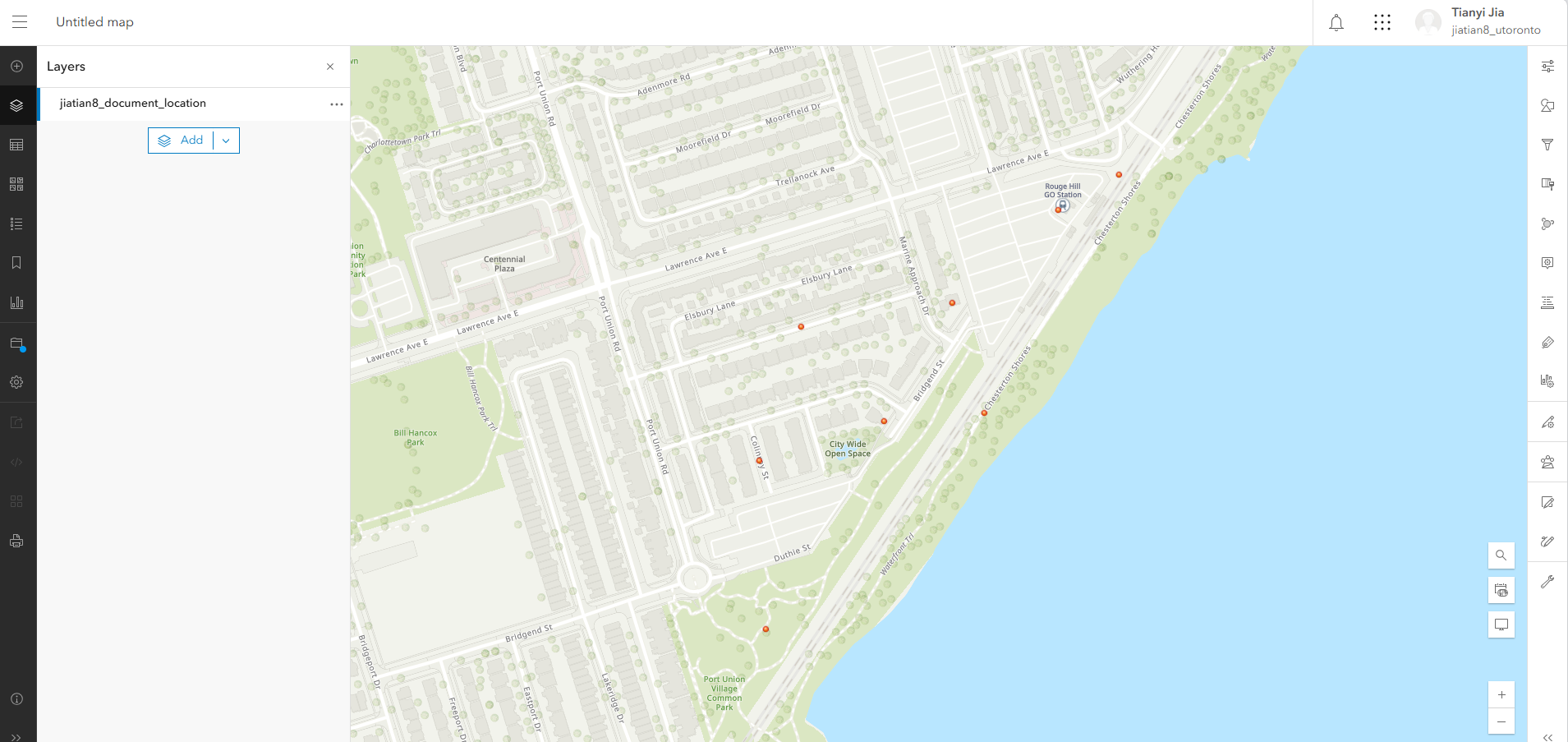

2. Upload documented_locations.gpkg as a hosted feature layer, name includes UTORID.

3. Upload all evidence photos and selfies.

4. Get URLs of images.

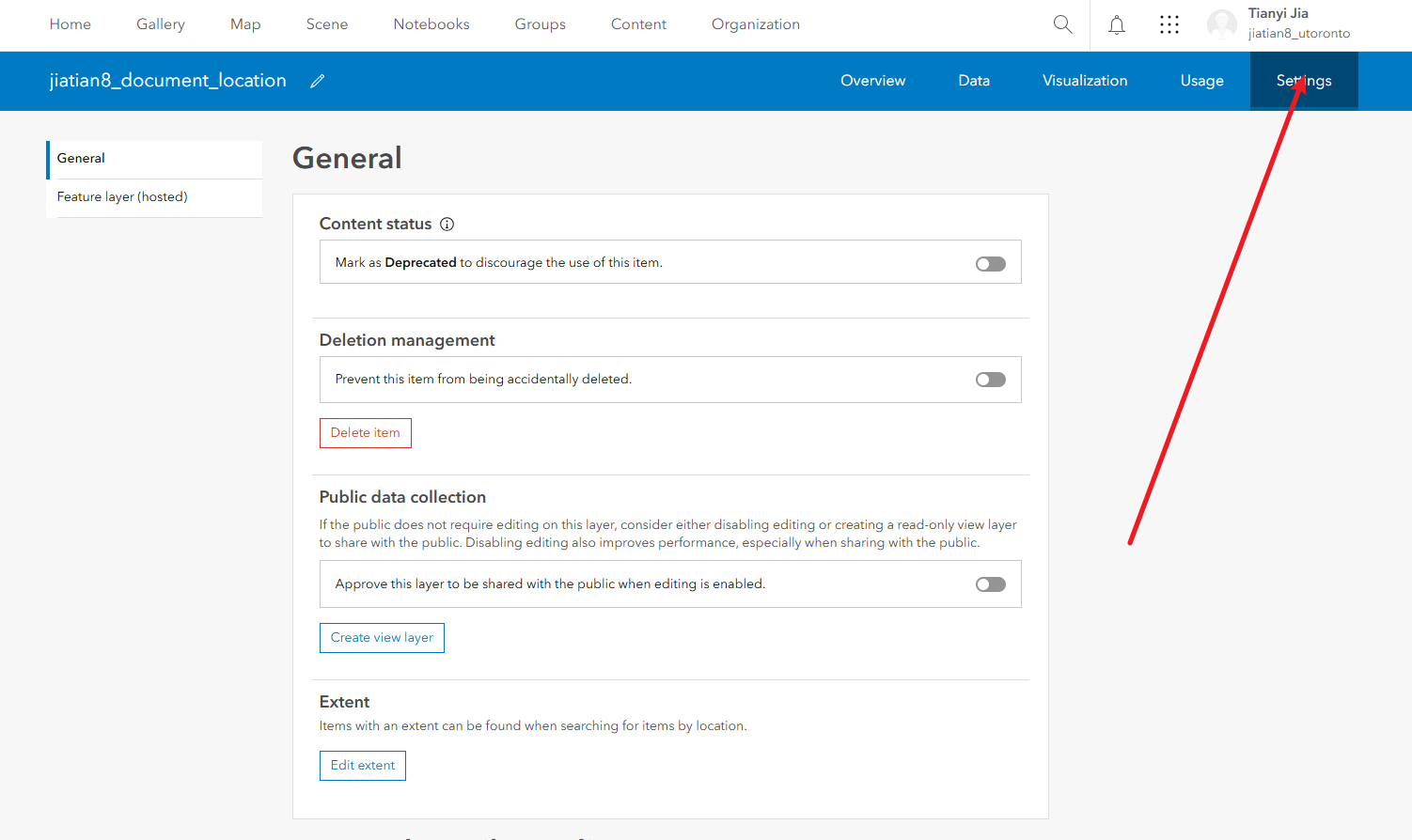

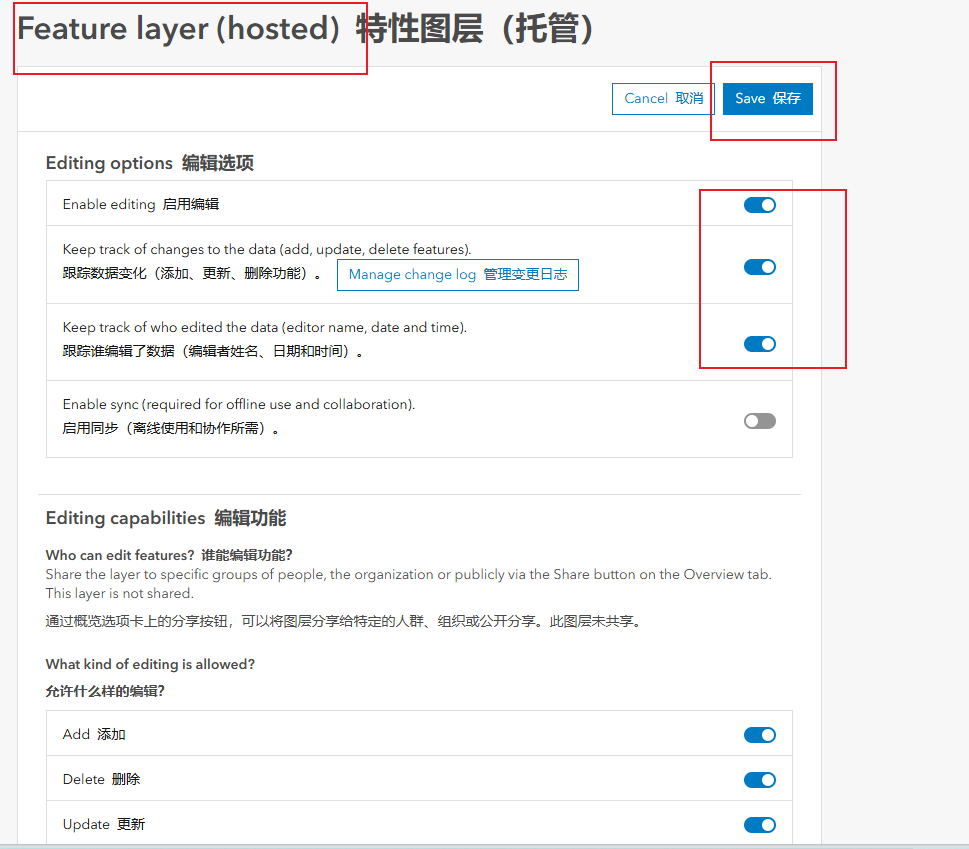

5. Go to layer details > Settings > Feature layer > enable editing, save.

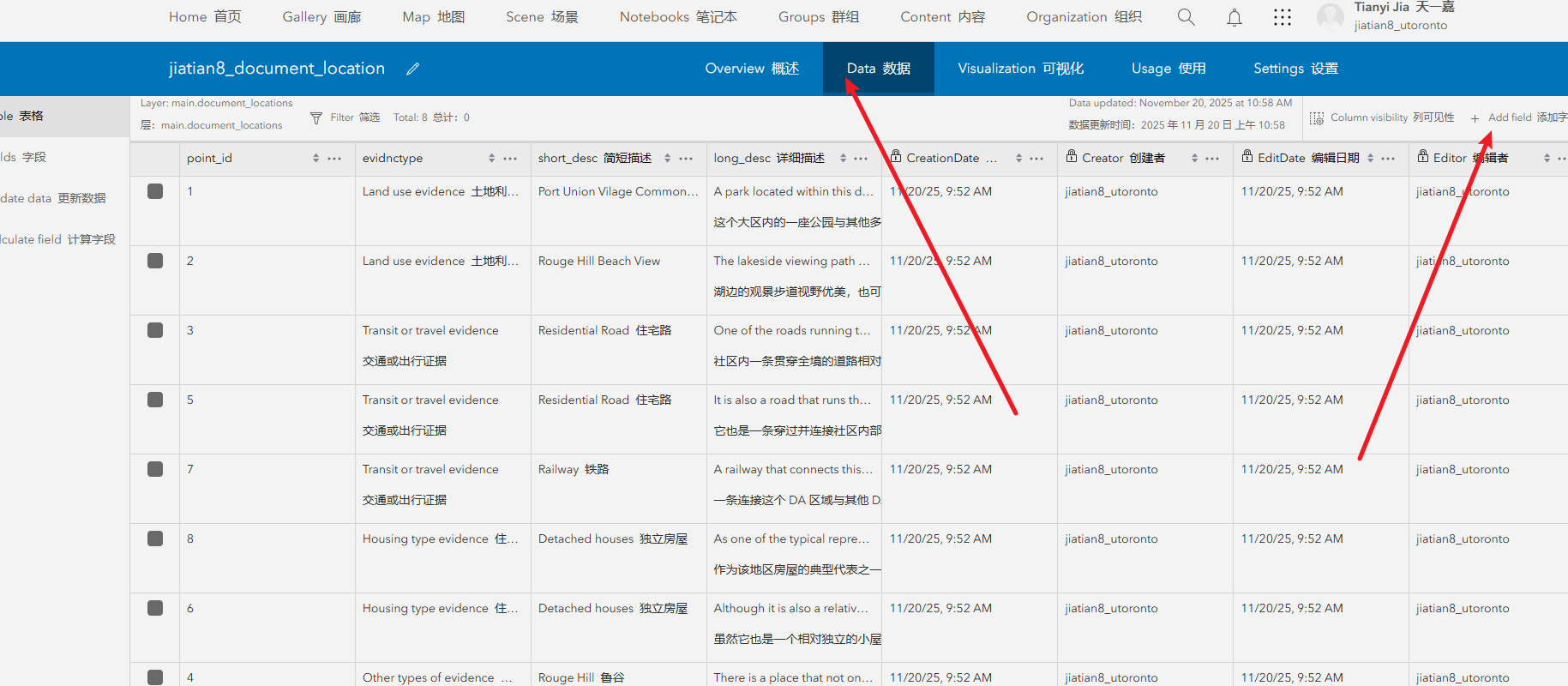

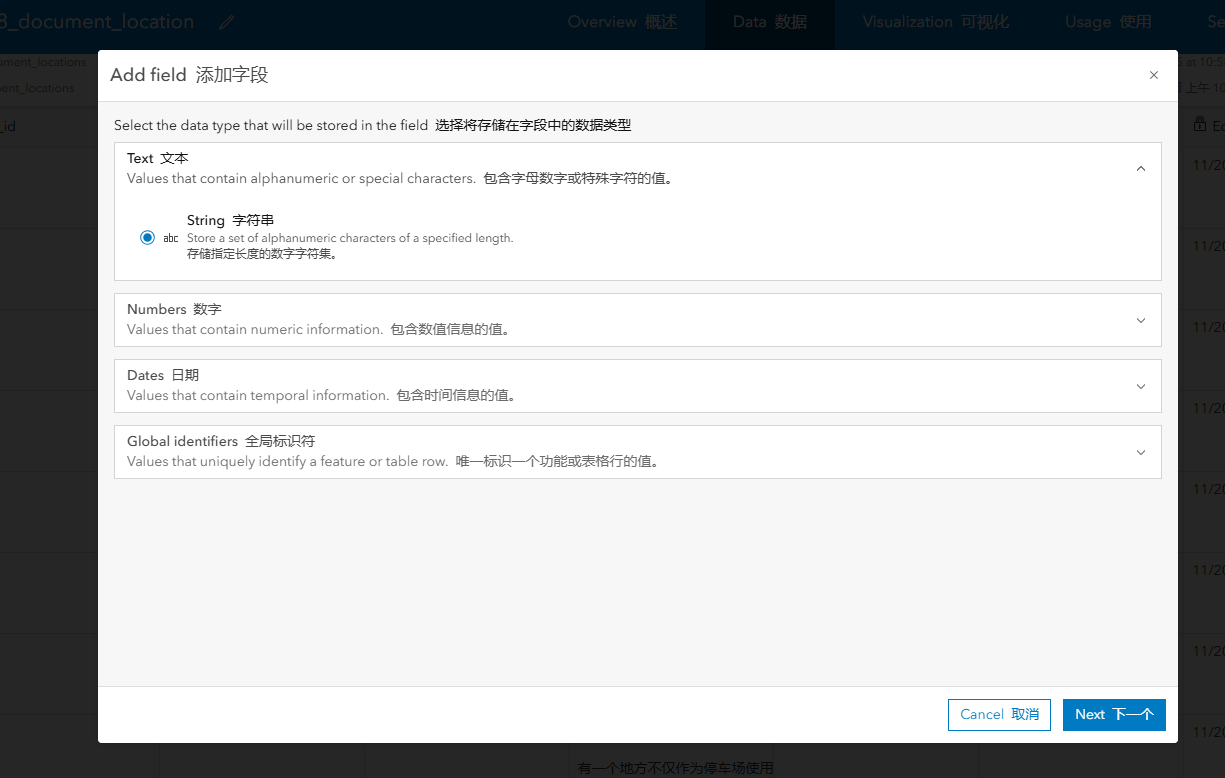

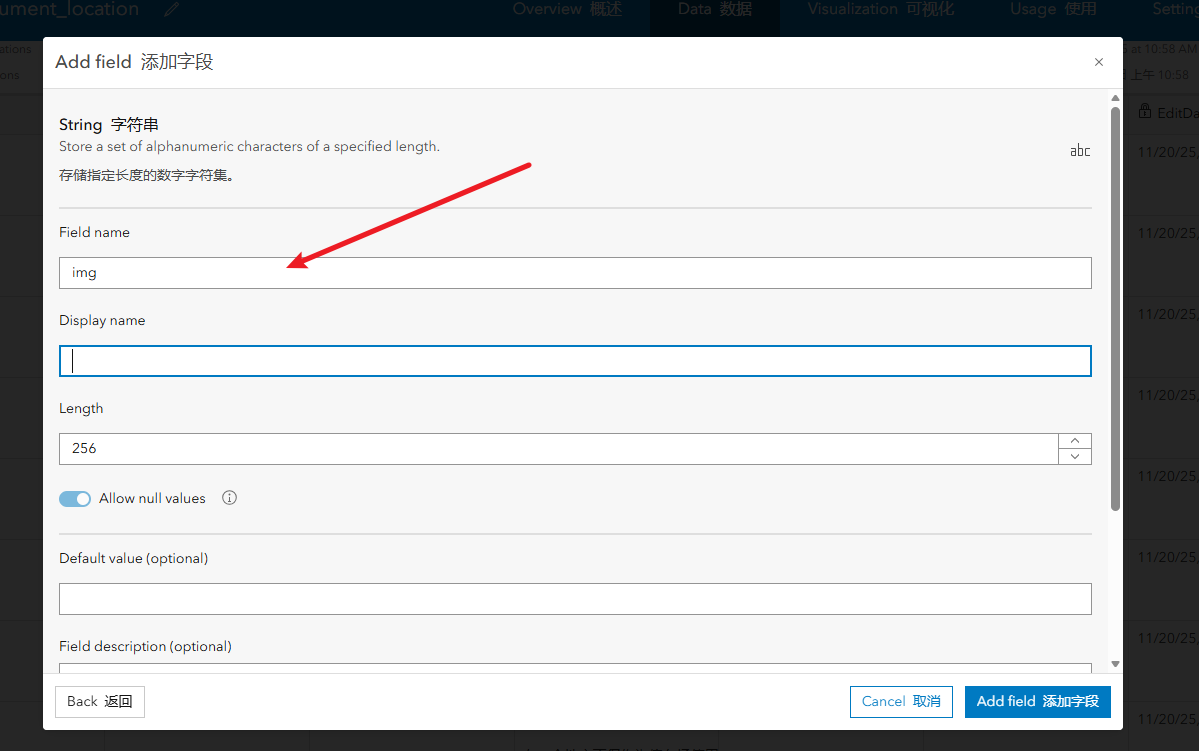

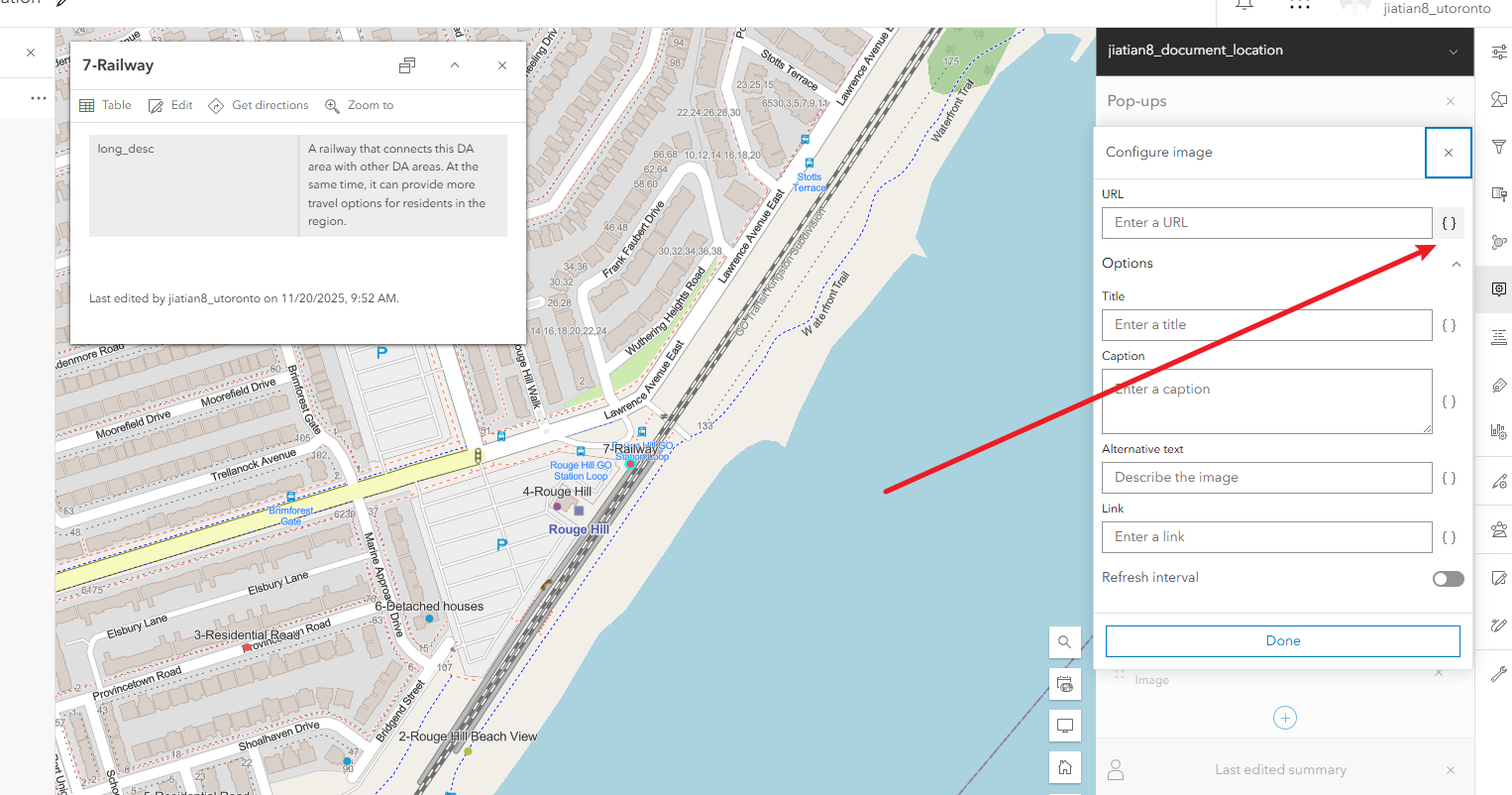

6. Go to Data tab, add a new text field for image URL.

Get Image URLs

Modify Layer Settings to Allow Field Editing

Add 'img' Field and Fill URLs

Step 2: Configure Layer

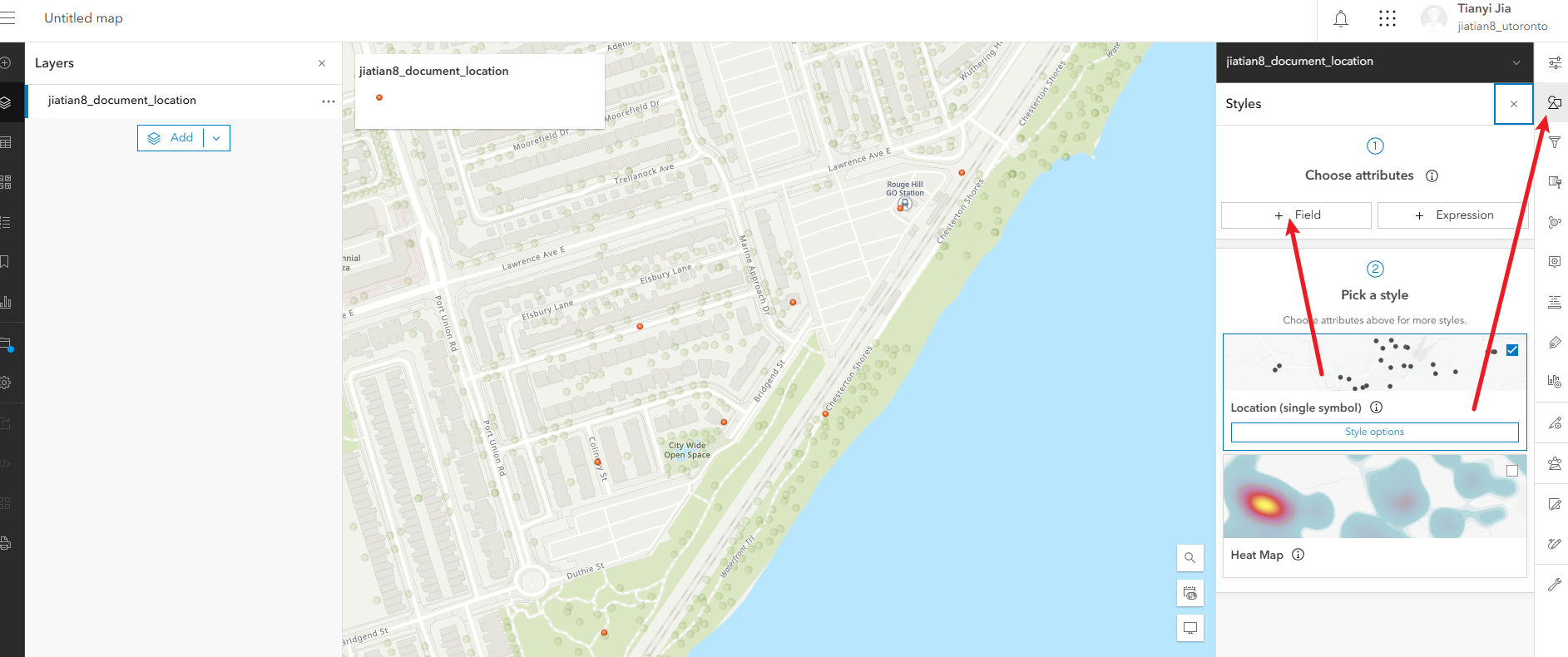

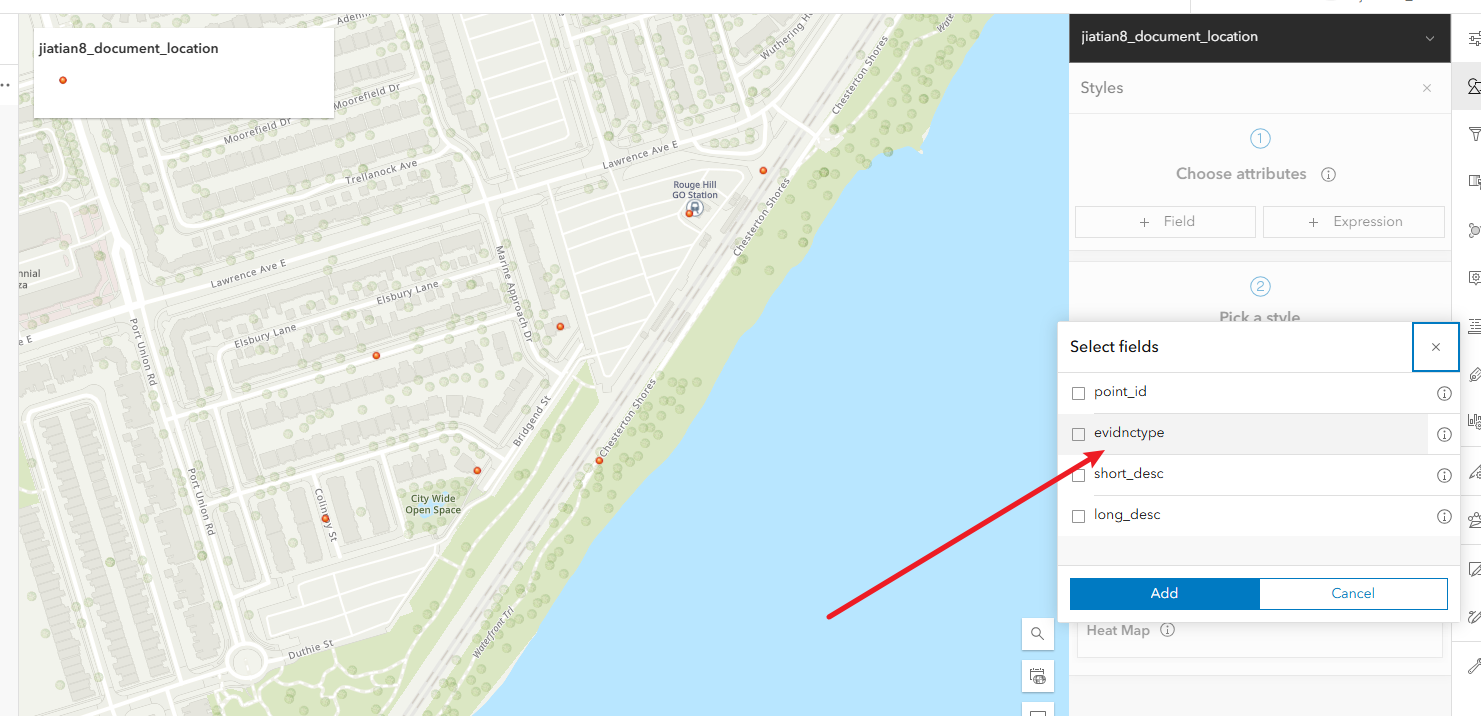

1. Style: By evidnctype.

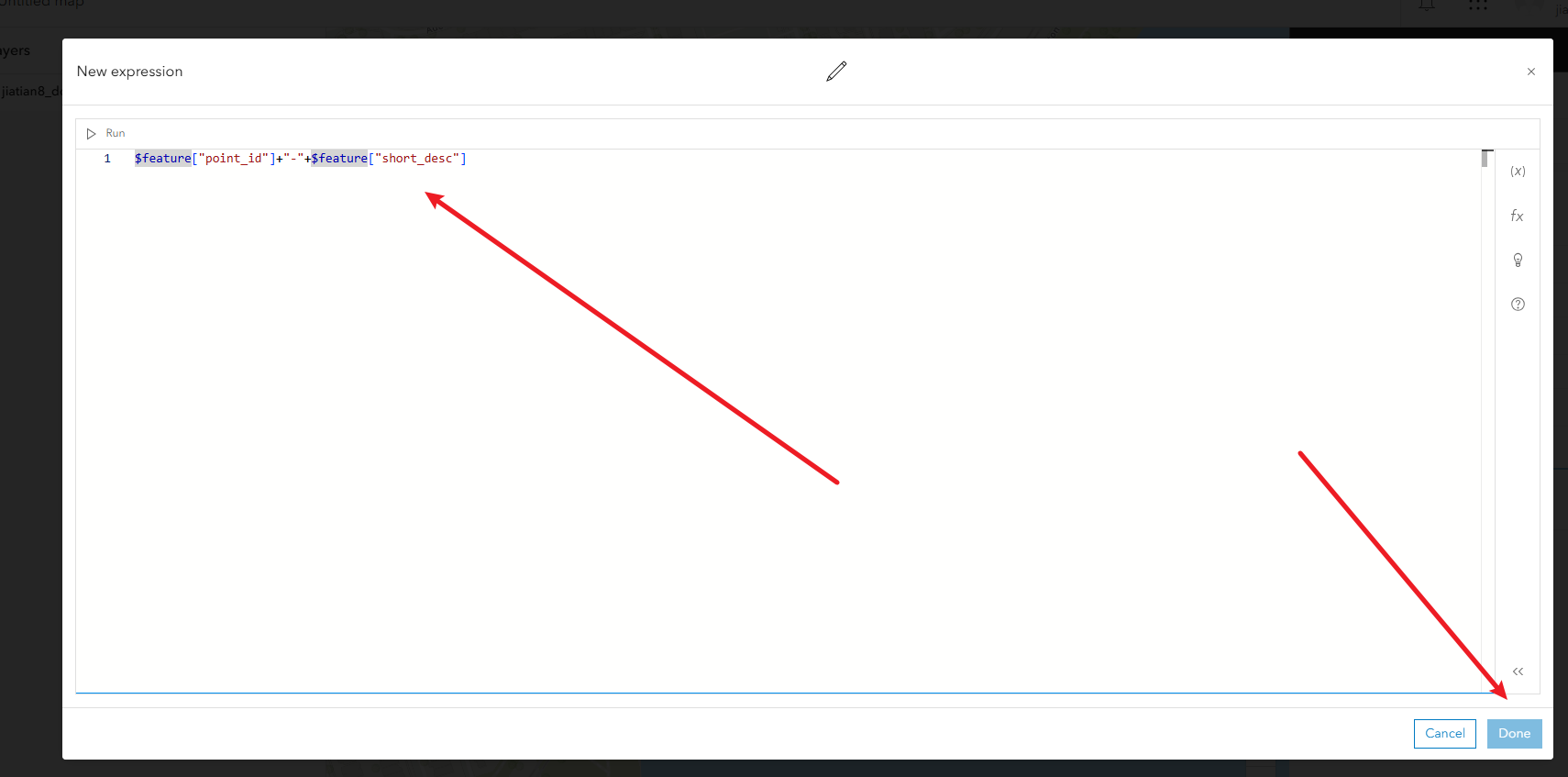

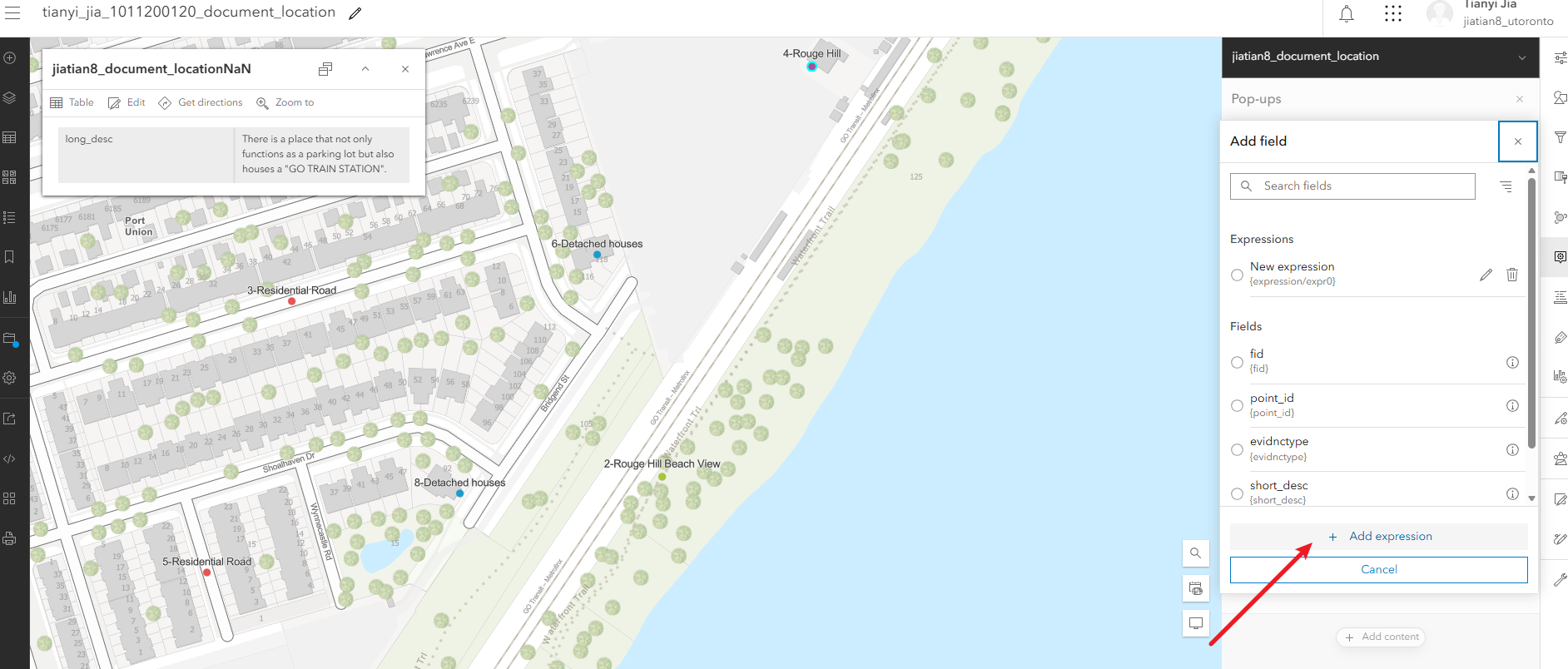

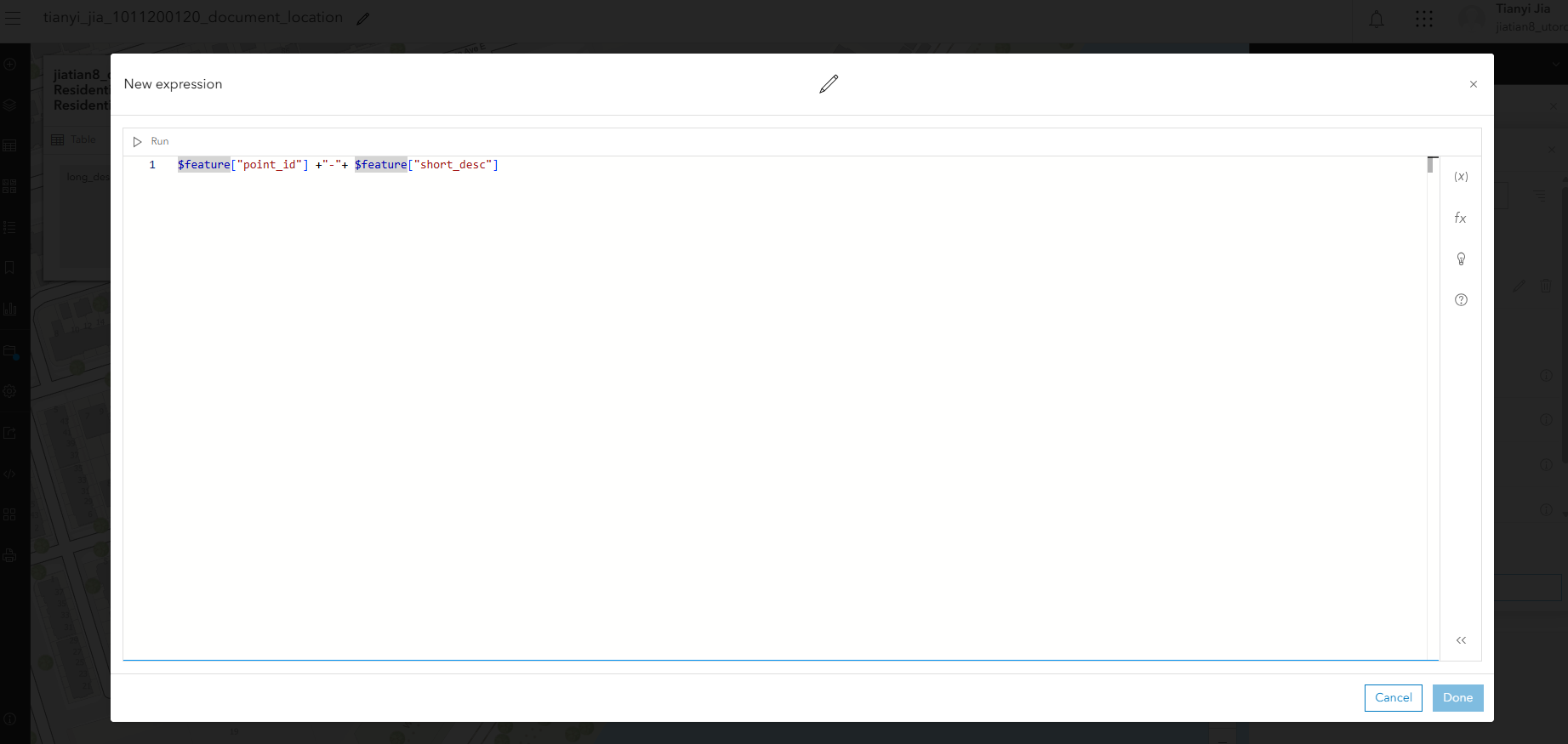

2. Labels: Expression $feature["point_id"] + "-" + $feature["short_desc"]

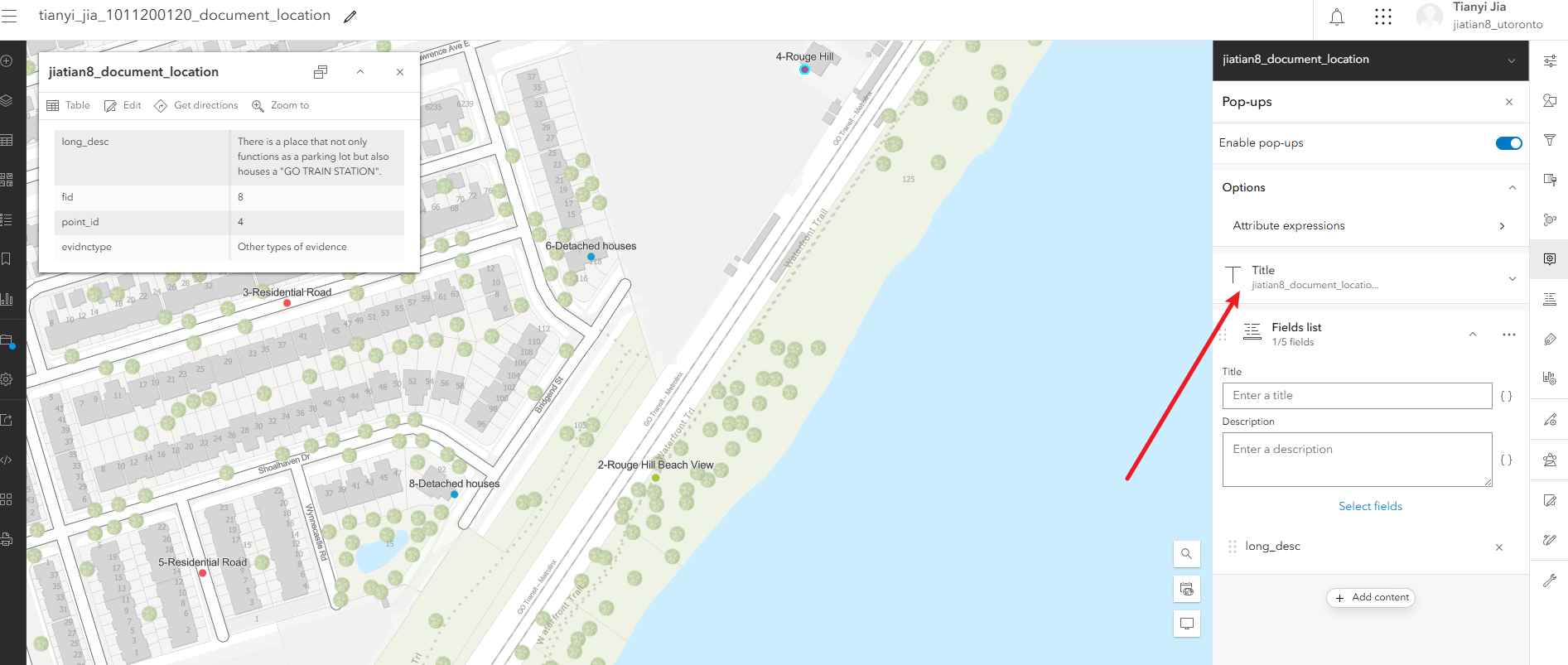

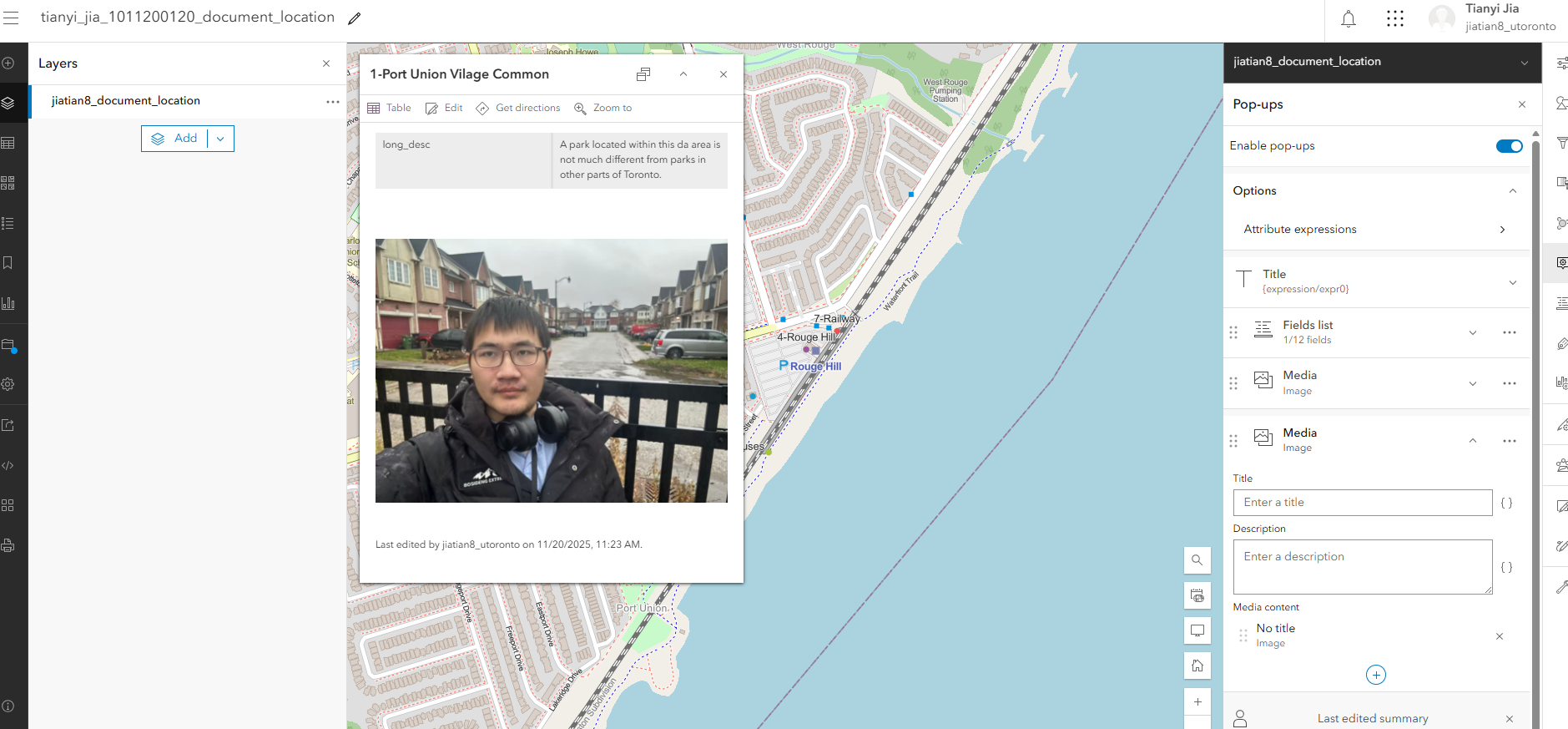

3. Pop-ups: Remove fields except long_desc. Title: $feature["point_id"] + "-" + $feature["short_desc"]

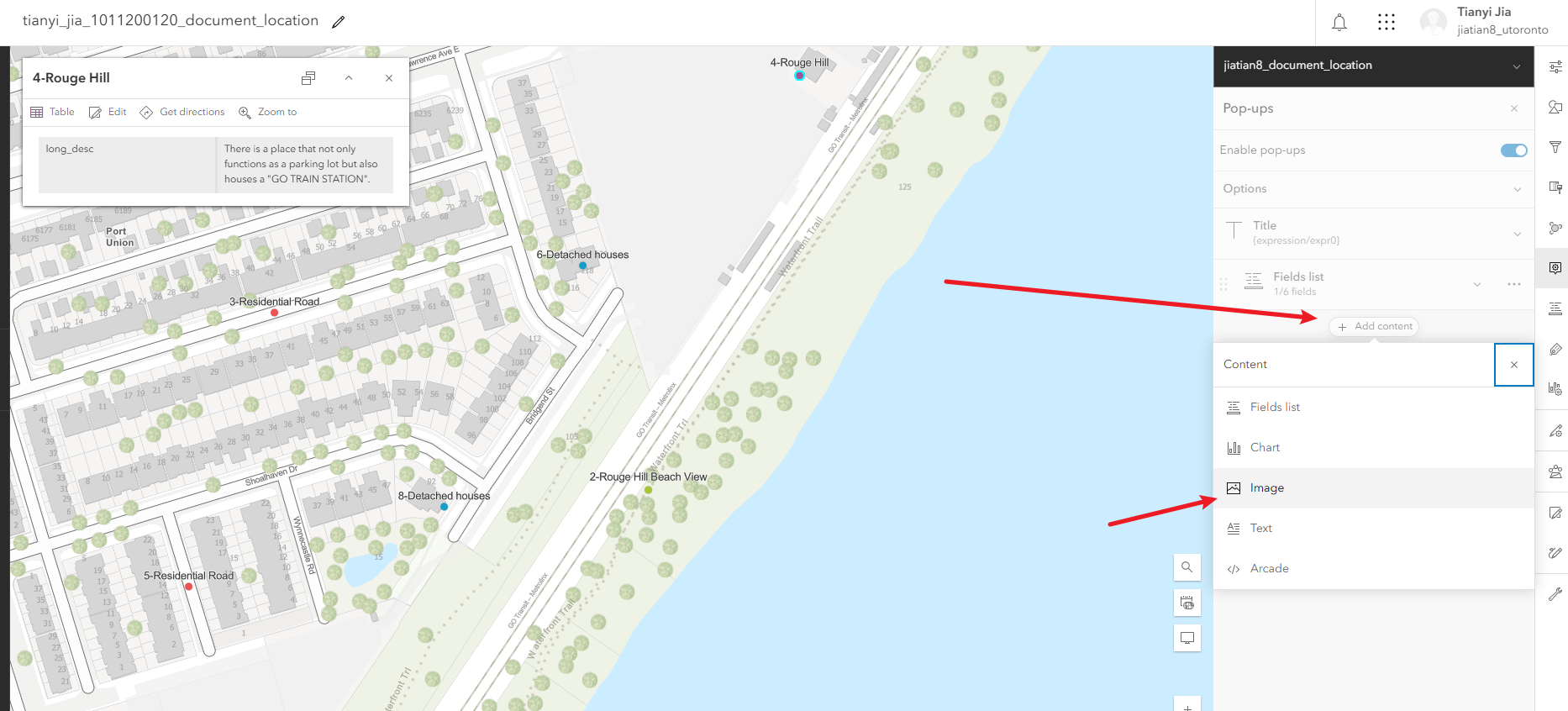

4. Show image in pop-up using the img field.

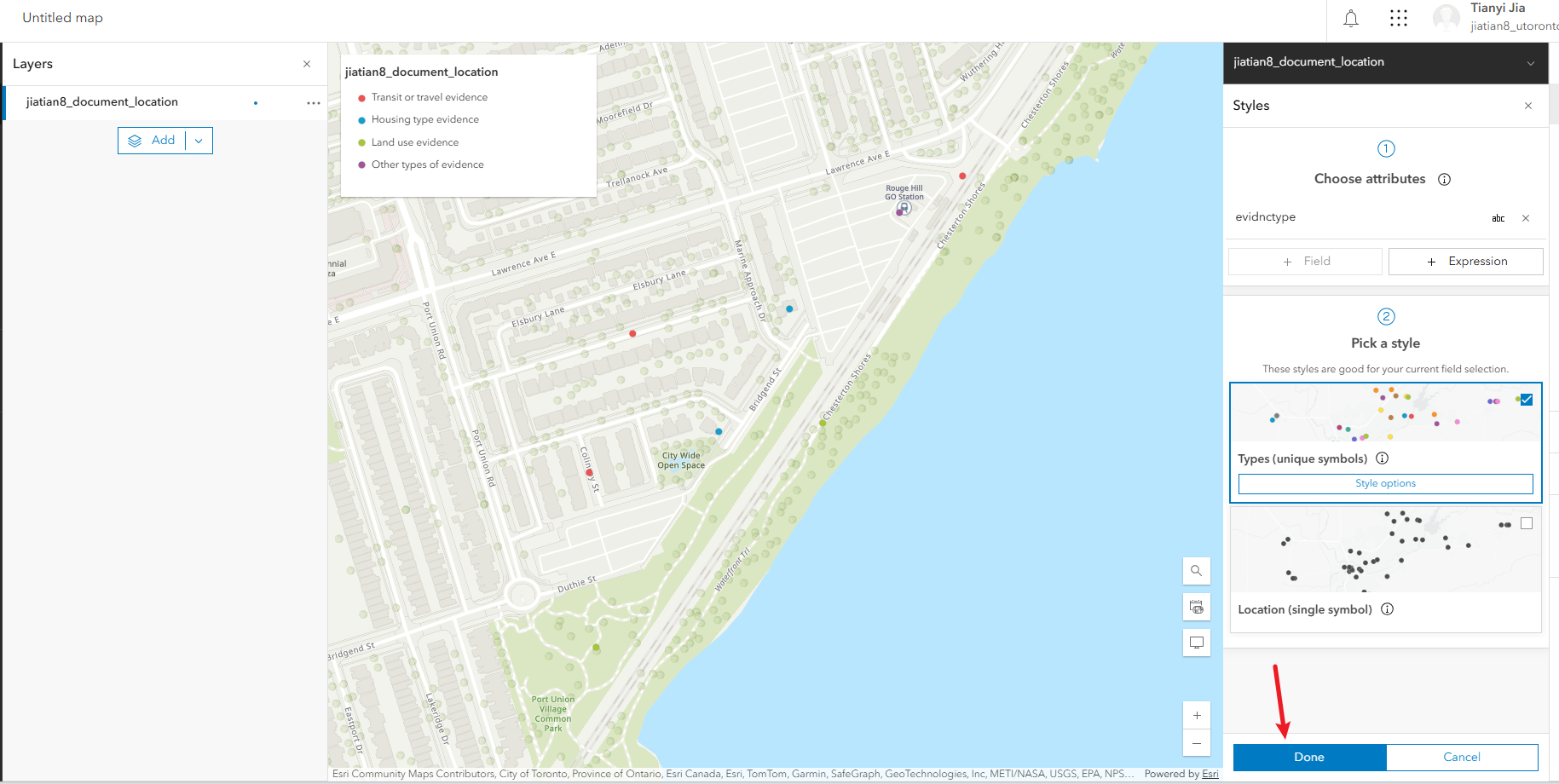

Configure Symbols

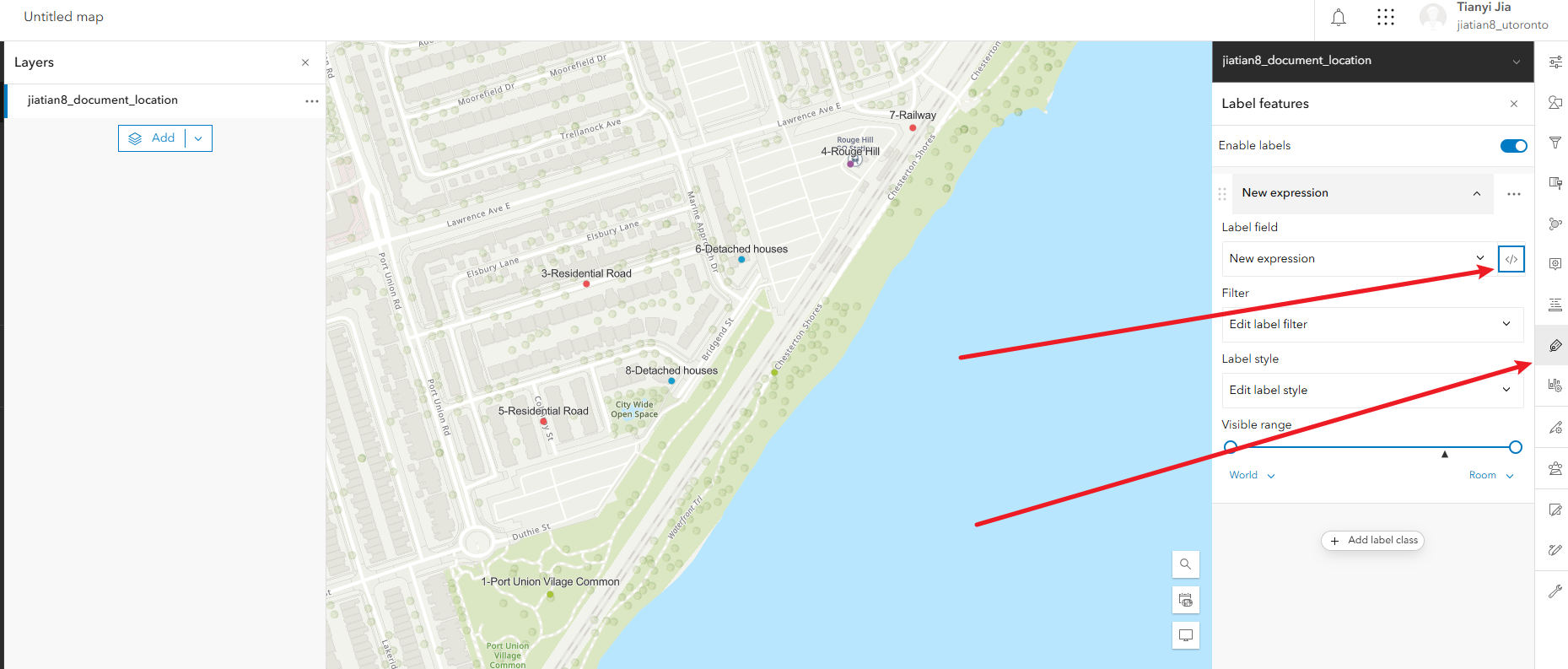

Configure Labels

Configure Attributes

Configure Image in Pop-up

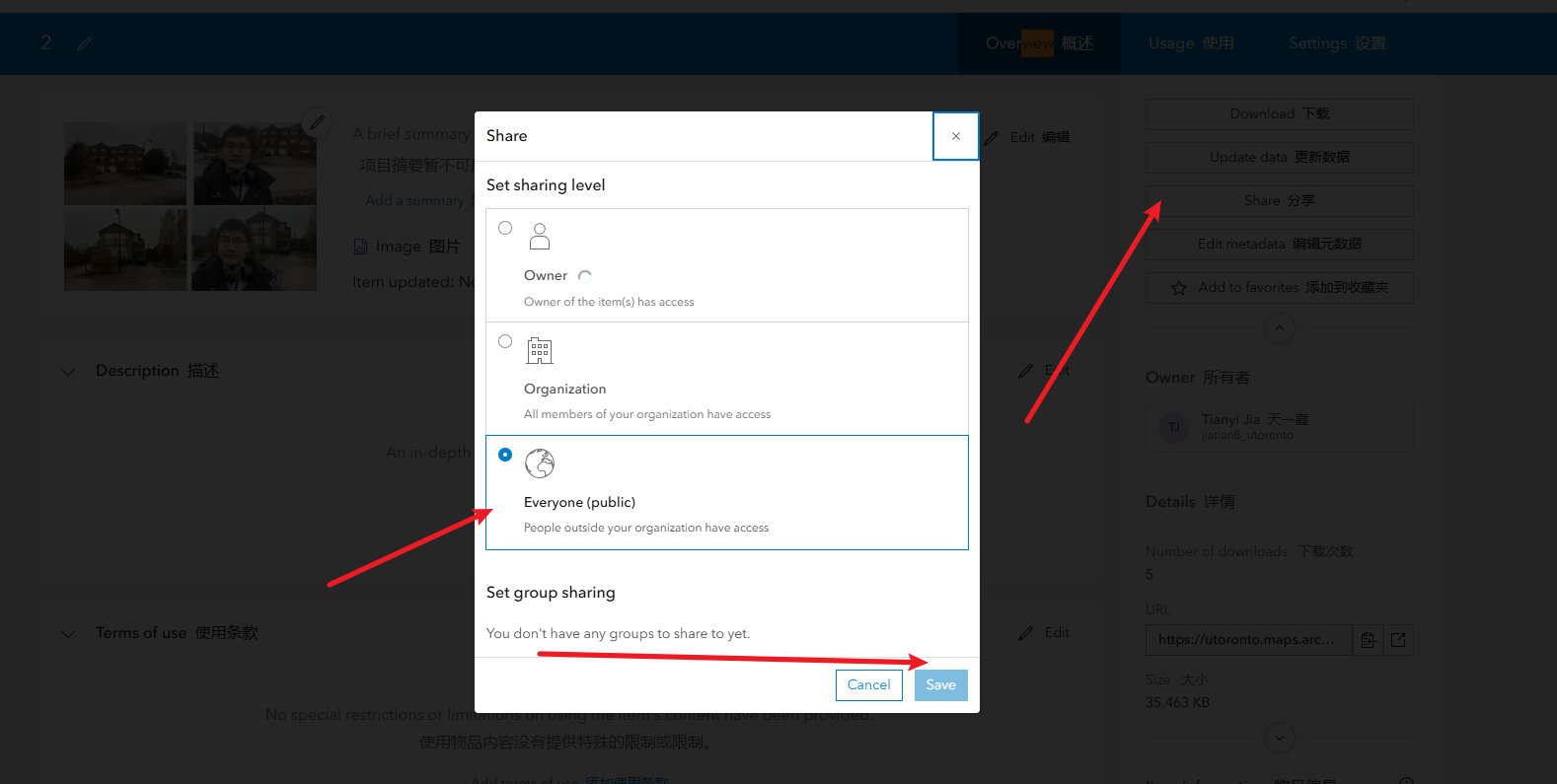

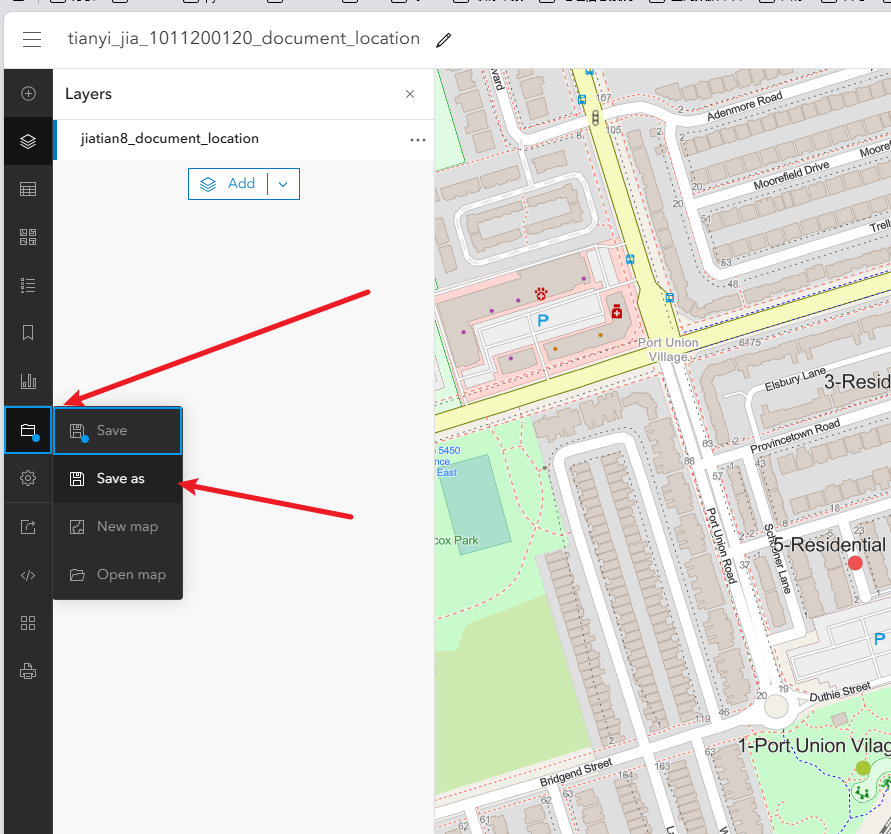

Step 3: Save Map, Share Layer, Share Map

1. Save map.

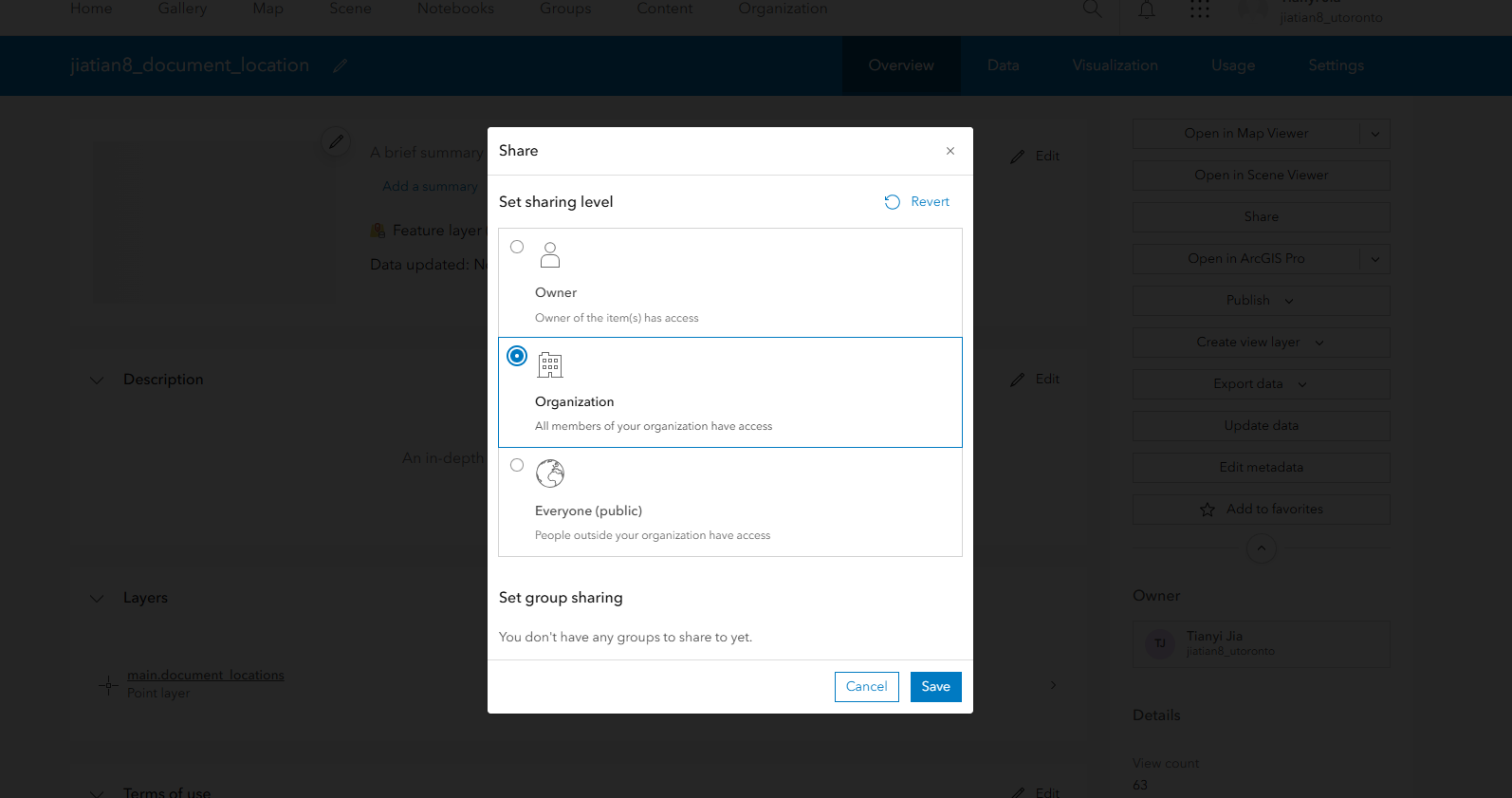

2. Share feature layer to Organization.

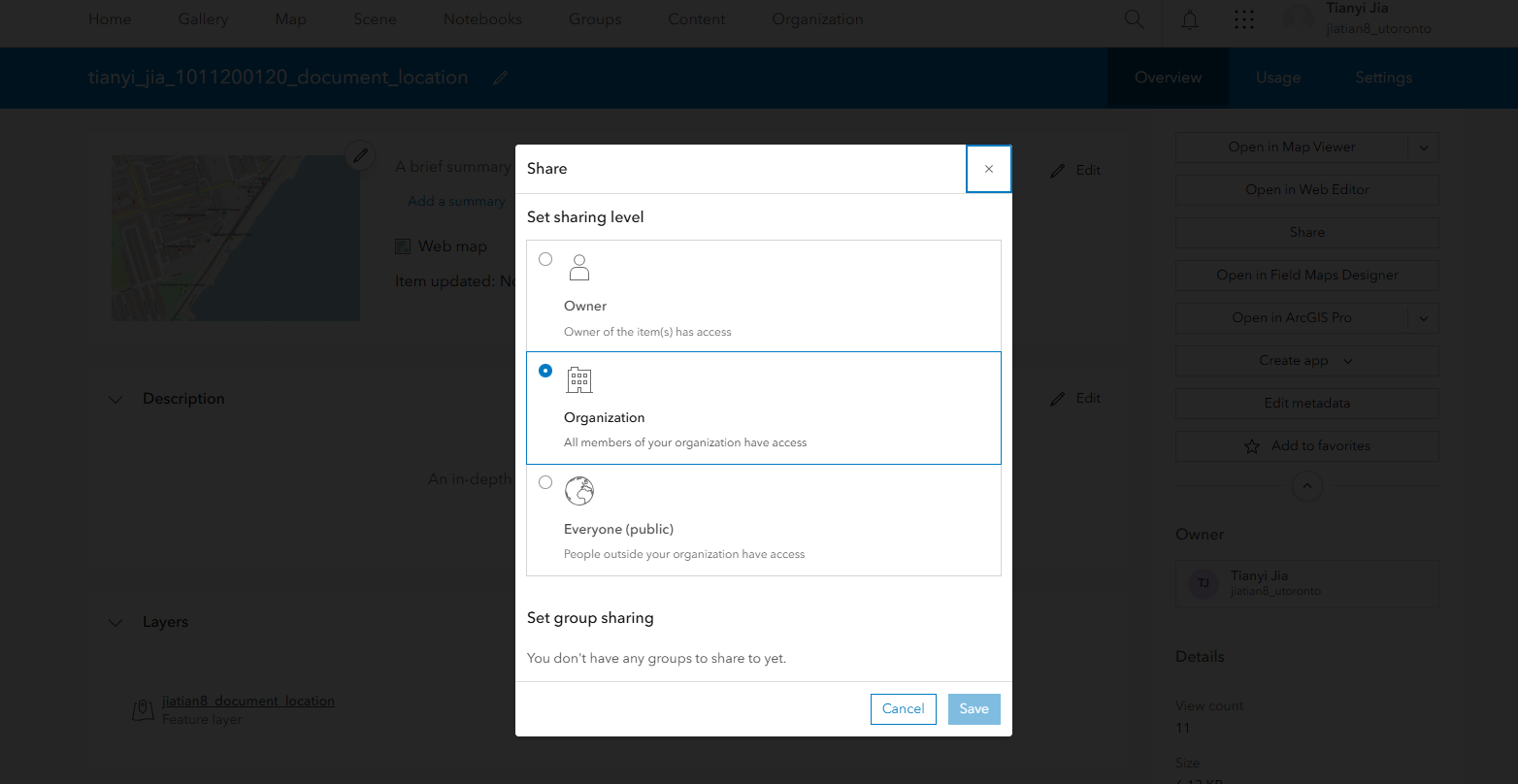

3. Share map and copy link.

Final link: https://arcg.is/1K99KX1

Share Feature Layer

Share Map

Share Map URL