ChatGLM3-6B Local API Invocation Method

Starting the Local ChatGLM3-6B Model

1. Create a New Conda Environment

Execute the following commands to create a new Conda environment:

conda create -n chatglm3-demo python=3.11

conda activate chatglm3-demo

2. Navigate to the openai_api_demo Module and Run the Following Code:

python api_server.py

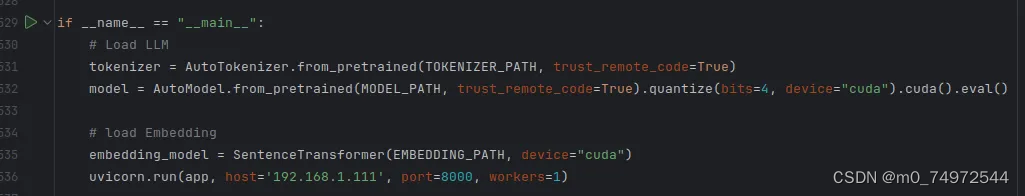

If you encounter out-of-memory issues, consider quantizing the model. In the main code of api_server.py, you can adjust the model loading configuration.

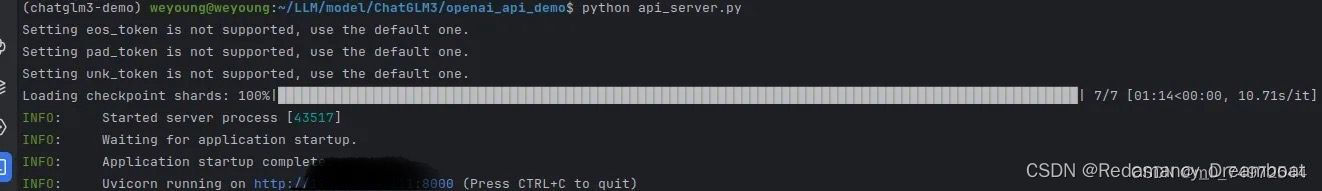

Upon successful startup, the interface will display:

This interface includes the local IP address and port number.

This interface includes the local IP address and port number.

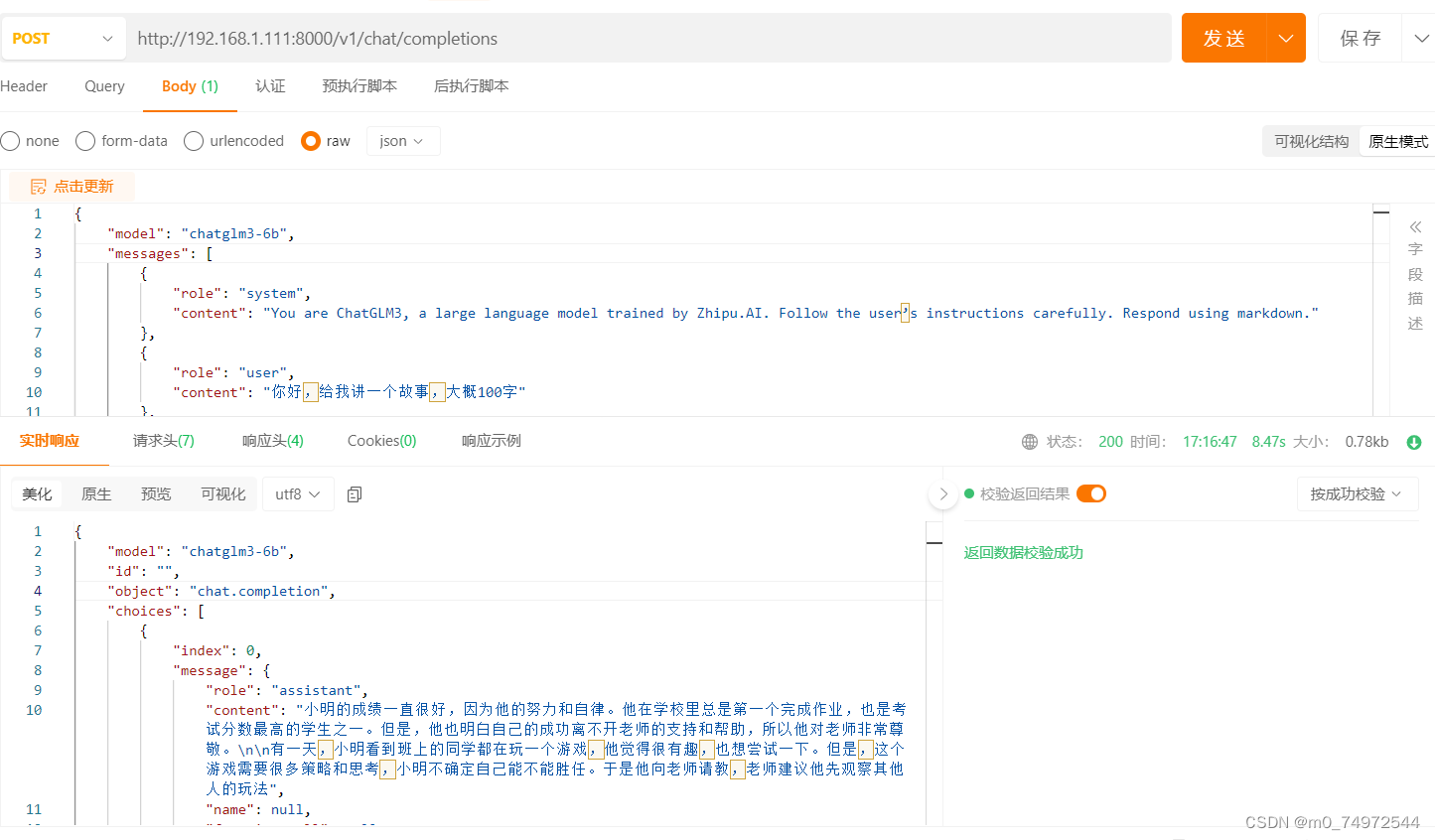

3. Postman Test Call (Recommended): Use POST Method

Parameters to Pass: This format supports multi-turn conversations by appending messages to the messages list.

{

"model": "chatglm3-6b",

"messages": [

{

"role": "system",

"content": "You are ChatGLM3, a large language model trained by Zhipu.AI. Follow the user’s instructions carefully. Respond using markdown."

},

{

"role": "user",

"content": "Hello, tell me a story of about 100 words"

},

{

"role": "assistant",

"content": "Once upon a time, there was a diligent elementary school graduate named Xiao Ming. He would open his books every morning, reading a passage in English followed by one in Chinese. One day, his teacher told him, 'Xiao Ming, you work so hard; I think you should take a good rest.' Xiao Ming replied, 'Teacher, if I only work half as hard, you would already be satisfied.'"

},

{

"role": "user",

"content": "Continue the story"

},

{

"role": "assistant",

"content": "After hearing his teacher's advice, Xiao Ming thought for a moment and said, 'Teacher, then I won't work hard anymore.' From then on, Xiao Ming often slept in class. The teacher was surprised and asked him why he did this. Xiao Ming replied, 'Teacher, I've already worked half as hard; now I'm resting.' The teacher laughed and told the other students, 'This child has strong self-management skills; we don't need to worry too much.'"

},

{

"role": "user",

"content": "Continue the story"

}

],

"stream": false,

"max_tokens": 100,

"temperature": 0.5,

"top_p": 0.5

}

If using Postman results in a connection timeout, ensure that port 8000 is open.

Recommended Hyperparameter Settings

- max_length: The total token limit for the model, including input and output tokens.

- temperature: Adjusts the probability distribution of words. Lower values make the model more deterministic, while higher values increase randomness.

- top_p: A sampling strategy parameter that selects from the smallest set of words whose cumulative probability exceeds a thresshold p, ignoring low-probability words. This focuses on the core of the probability distribution.

Paramter Settings for Different Scenarios

| Use Case | temperature | top_p | Description |

|---|---|---|---|

| Code Generation | 0.2 | 0.1 | Generates code adhering to established patterns and conventions. Output is more deterministic and focused, aiding in syntactically correct code. |

| Creative Writing | 0.7 | 0.8 | Produces creative and diverse text for storytelling. Output is more exploratory and less constrained by patteerns. |

| Chatbot Responses | 0.5 | 0.5 | Generates dialogue responses balancing consistency and diversity. Output is more natural and engaging. |

| Tool Invocation and Response | 0.0 | 0.7 | Provides concise responses based on given content, addressing user queries directly. |

| Code Comment Generation | 0.1 | 0.2 | Generates concise and relevant code comments. Output is more deterministic and aligned with conventions. |

| Data Analysis Scripts | 0.2 | 0.1 | Produces data analysis scripts that are more likely to be correct and efficient. Output is more deterministic and focused. |

| Exploratory Code Writing | 0.6 | 0.7 | Generates code exploring alternative solutions and creative approaches. Output is less restricted by established patterns. |