Neural network vectorization refers to converting input data into vector form to facilitate processing by neural networks. The benefits include:

- Improved computational efficiency: Vectorized inputs enable parallel computation, accelerating training and inference.

- Reduced storage: Compressing raw data into smaller vectors reduces memory usage.

- Simplified data processing: Vectorization transforms complex inputs into a uniform format, making pattern recognition easier.

- Support for diverse input types: Different data types (images, text, audio) can be converted into vectors, allowing neural networks to handle various inputs.

- Enhanced expressiveness: Vectorization extracts features, improving model performance and accuracy.
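
To make the efficiency point concrete, here is a minimal sketch that computes the same dot product twice: once with an explicit Python loop and once with a single NumPy call. The helper `dot_loop` and the input values are illustrative assumptions, not part of the original example.

```python
import numpy as np

def dot_loop(x, w):
    """Dot product computed one element at a time (hypothetical helper)."""
    total = 0.0
    for i in range(len(x)):
        total += x[i] * w[i]
    return total

# Illustrative values only
x = np.array([200.0, 17.0])
w = np.array([1.0, -2.0])

loop_result = dot_loop(x, w)  # explicit loop over elements
vec_result = np.dot(x, w)     # one vectorized call

print(loop_result, vec_result)  # both are 166.0
```

Both versions give the same number; the vectorized call hands the loop to optimized native code, which is where the speedup on large arrays typically comes from.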

Let's dive into the vectorized implementation of neural networks.

Matrix Multiplication in NumPy

Define a matrix A:

import numpy as np

A = np.array([[1, -1, 0.1],
              [2, -2, 0.2]])

Its transpose AT:

AT = A.T

Define a matrix W:

W = np.array([[3, 5, 7, 9],
              [4, 6, 8, 0]])

Compute Z = AT · W (AT is 3×2 and W is 2×4, so Z is 3×4):

Z = np.matmul(AT, W)

Or equivalently:

Z = AT @ W

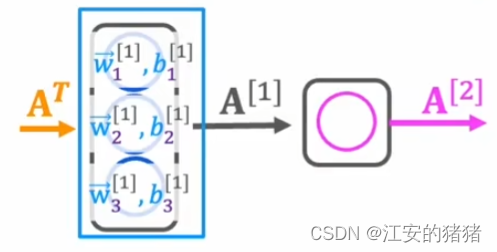
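
Putting the steps above together, the following self-contained sketch checks the shapes involved and confirms that `np.matmul` and the `@` operator give the same result. The variable names follow the text; the printed shapes are only a sanity check.

```python
import numpy as np

A = np.array([[1, -1, 0.1],
              [2, -2, 0.2]])   # shape (2, 3)
AT = A.T                       # shape (3, 2)

W = np.array([[3, 5, 7, 9],
              [4, 6, 8, 0]])   # shape (2, 4)

# Inner dimensions match (2 and 2), so the product has shape (3, 4)
Z = np.matmul(AT, W)

print(AT.shape, W.shape, Z.shape)  # (3, 2) (2, 4) (3, 4)
print(np.allclose(Z, AT @ W))      # True
print(Z)
```

Each entry of Z is the dot product of one row of AT with one column of W; for example, the top-left entry is 1·3 + 2·4 = 11.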

Vectorized Forward Propagation

Using the coffee roasting example (200 degrees, 17 minutes):

Initialize variables:

AT = np.array([[200, 17]])

W = np.array([[1, -3, 5],
              [-2, 4, 6]])

b = np.array([[-1, 1, 2]])

Define a dense layer:

def dense(AT, W, b, g):
    # AT: input row(s), W: weight matrix, b: bias row, g: activation function
    z = np.matmul(AT, W) + b
    a_out = g(z)
    return a_out  # with a sigmoid g, a_out ≈ [[1, 0, 1]]
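
For a version that can be run end to end, here is a minimal sketch of the same layer. It assumes a sigmoid activation for `g`; the `sigmoid` helper is defined here for illustration and does not appear in the snippet above.

```python
import numpy as np

def sigmoid(z):
    """Logistic activation (assumed choice for g in this sketch)."""
    return 1.0 / (1.0 + np.exp(-z))

def dense(AT, W, b, g):
    z = np.matmul(AT, W) + b   # (1, 2) @ (2, 3) + (1, 3) -> (1, 3)
    return g(z)

AT = np.array([[200, 17]])     # temperature, duration
W = np.array([[1, -3, 5],
              [-2, 4, 6]])
b = np.array([[-1, 1, 2]])

a_out = dense(AT, W, b, sigmoid)

# z works out to [[165, -531, 1104]], so the sigmoid saturates
print(np.round(a_out))  # [[1. 0. 1.]]
```

The pre-activations are far from zero, so the sigmoid outputs sit essentially at 1, 0, and 1, which matches the result quoted in the original function.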

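This implementation shows how vectorization streamlines neural network computations: a single matrix multiplication computes the pre-activations of all three units in the layer at once, with no explicit loop over units.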